Show Vacuum operation result (files deleted) witho...


03-06-2022 05:09 PM

Hi, I'm running some scheduled vacuum jobs and would like to know how many files were deleted without doing all the computation twice (once with DRY RUN and once without). Is there a way to accomplish this?

Thanks!


Accepted Solutions


03-07-2022 02:44 AM

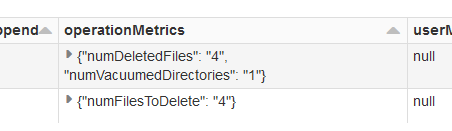

SELECT * FROM (DESCRIBE HISTORY table)x WHERE operation IN ('VACUUM END', 'VACUUM START');that gives us required information:
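Once the history rows are available, the per-run deleted file count can be pulled out of the `operationMetrics` map. The following is only a minimal sketch in plain Scala, not a Databricks API: `HistoryRow` and `deletedFilesPerVacuum` are hypothetical names, and it assumes that `VACUUM END` entries carry a `numDeletedFiles` metric stored as a string, which may vary by Delta/runtime version.

```scala
import scala.util.Try

object VacuumHistory {
  // Hypothetical holder for the DESCRIBE HISTORY columns used here.
  final case class HistoryRow(
      version: Long,
      operation: String,
      operationMetrics: Map[String, String])

  // Returns (table version, deleted file count) for each VACUUM END entry.
  // Assumption: the metric key is "numDeletedFiles". Rows where it is
  // missing or not numeric are skipped rather than reported as zero.
  def deletedFilesPerVacuum(history: Seq[HistoryRow]): Seq[(Long, Long)] =
    history.flatMap {
      case HistoryRow(version, "VACUUM END", metrics) =>
        metrics
          .get("numDeletedFiles")
          .flatMap(s => Try(s.toLong).toOption)
          .map(count => (version, count))
      case _ => None
    }
}
```

In a notebook, the rows would presumably be built from the `version`, `operation` and `operationMetrics` columns returned by the query above; that wiring is left out here because it depends on the environment.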


03-06-2022 09:51 PM

Hi @Alejandro Martinez,

I don't think we have any command that returns the statistics before and after a vacuum.

At least I haven't come across one.

If you want to capture more details, maybe you can write a function to capture the statistics, as below.

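Data file size and data file count, before the vacuum:

// Replace <Your Table Path> with the table's storage location.
// Note: dbutils.fs.ls is not recursive, so this only covers the top level of the path.
var getDataFileSize = 0L
val getDataFileCount = dbutils.fs.ls("<Your Table Path>").size
dbutils.fs.ls("<Your Table Path>").foreach { file =>
  getDataFileSize = getDataFileSize + file.size
}

After the vacuum: repeat the above and compare the two sets of numbers.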
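The before/after comparison could look roughly like this. It is a sketch under assumptions rather than a tested Databricks recipe: `FileEntry`, `Removed` and `removedBetween` are hypothetical names, and the two listings are expected to be captured separately (for example from `dbutils.fs.ls`, mapping each entry's `path` and `size`) just before and just after the vacuum.

```scala
object VacuumDiff {
  // Hypothetical snapshot of one listed file: full path and size in bytes.
  final case class FileEntry(path: String, size: Long)

  // Number of files that disappeared and the bytes they occupied.
  final case class Removed(fileCount: Int, totalBytes: Long)

  // Files present in the "before" listing but absent from the "after" listing.
  // Files added between the two listings do not affect the result.
  def removedBetween(before: Seq[FileEntry], after: Seq[FileEntry]): Removed = {
    val remaining = after.map(_.path).toSet
    val gone = before.filterNot(f => remaining.contains(f.path))
    Removed(gone.size, gone.map(_.size).sum)
  }
}
```

Comparing paths rather than plain counts keeps the result meaningful if a job writes new files to the table between the two listings.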

Let's see if other community members have better ideas on this.


03-07-2022 06:13 AM

Thank you! Not the solution I was looking for, but it seems nothing better exists yet, so I'm going with that.

Thanks!!!


03-07-2022 06:22 AM

Logging has to be enabled to capture the logs for vacuum:

spark.conf.set("spark.databricks.delta.vacuum.logging.enabled","true")
