How to set retention period for a delta table lowe...
03-15-2022 08:33 AM

I am trying to set the retention period for a Delta table using the following commands:

```python
from delta.tables import DeltaTable

deltaTable = DeltaTable.forPath(spark, delta_path)
spark.conf.set("spark.databricks.delta.retentionDurationCheck.enabled", "false")
deltaTable.logRetentionDuration = "interval 1 days"
deltaTable.deletedFileRetentionDuration = "interval 1 days"
```

These commands are not working for me: no files are removed after the given interval. Where am I going wrong?

Labels: Delta table, Retention Period

Accepted Solution


03-15-2022 08:56 AM

There are two ways to disable the retention duration check:

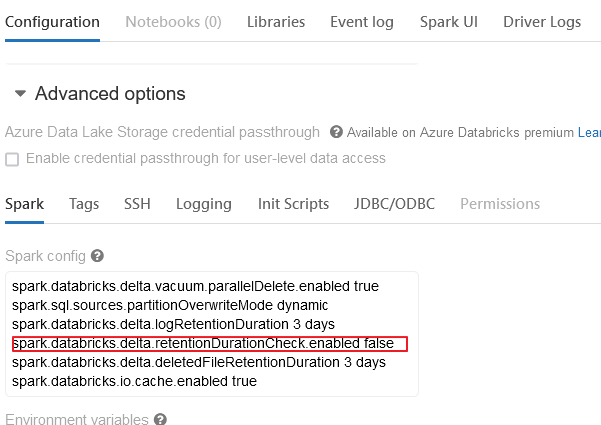

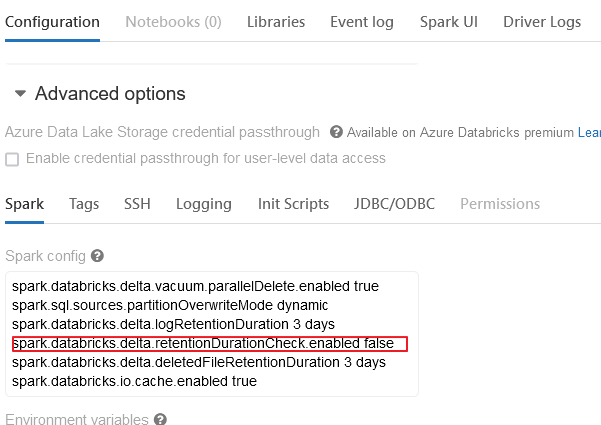

1) Set it in the cluster configuration (Clusters -> Edit -> Spark -> Spark config):

```
spark.databricks.delta.retentionDurationCheck.enabled false
```

2) Or set it in your code just before calling `DeltaTable.forPath` (I think you need to change the order of the statements in your code):

```python
spark.conf.set("spark.databricks.delta.retentionDurationCheck.enabled", "false")
```
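A minimal sketch that combines the reordering above with two points the thread does not spell out. It assumes a path-based Delta table, the `delta-spark` Python package and an active `spark` session; `retention_properties_sql` and `apply_retention_and_vacuum` are hypothetical helper names, not part of any library. As far as I know, assigning `deltaTable.logRetentionDuration = ...` only creates an attribute on the Python object and does not change the table; the retention settings are stored as table properties with `ALTER TABLE ... SET TBLPROPERTIES`. Data files are only deleted when `VACUUM` runs, while log entries older than `delta.logRetentionDuration` are cleaned up automatically when a checkpoint is written.

```python
def retention_properties_sql(delta_path: str,
                             log_retention: str = "interval 1 days",
                             deleted_file_retention: str = "interval 1 days") -> str:
    """Hypothetical helper: build the ALTER TABLE statement that stores both
    retention settings as properties of the path-based Delta table."""
    return (
        f"ALTER TABLE delta.`{delta_path}` SET TBLPROPERTIES ("
        f"'delta.logRetentionDuration' = '{log_retention}', "
        f"'delta.deletedFileRetentionDuration' = '{deleted_file_retention}')"
    )


def apply_retention_and_vacuum(spark, delta_path: str, retention_hours: float = 24.0):
    """Hypothetical helper: disable the check, set the properties, then VACUUM."""
    # Imported lazily so retention_properties_sql works without delta-spark installed.
    from delta.tables import DeltaTable

    # 1) Disable the safety check first (before DeltaTable.forPath, as in the answer).
    #    Without this, VACUUM rejects a retention shorter than the configured
    #    deletedFileRetentionDuration (7 days by default).
    spark.conf.set("spark.databricks.delta.retentionDurationCheck.enabled", "false")

    # 2) Persist the retention settings as table properties; setting Python
    #    attributes on a DeltaTable object does not change the table.
    spark.sql(retention_properties_sql(delta_path))

    # 3) Only VACUUM physically deletes data files that are no longer referenced
    #    by the table and are older than retention_hours.
    delta_table = DeltaTable.forPath(spark, delta_path)
    return delta_table.vacuum(retention_hours)
```

Usage, assuming `delta_path` points at an existing Delta table: `apply_retention_and_vacuum(spark, delta_path, retention_hours=24)`. Disabling the check removes a safety net: a short VACUUM retention can delete files that concurrent readers or writers still need, so it is only advisable when no long-running operations touch the table.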

5 Replies

03-16-2022 07:58 AM

Hi @Manasa Kalluri, it seems @Hubert Dudek has given a comprehensive solution. Were you able to solve your problem?

03-16-2022 11:39 PM

Hi @Kaniz Fatma, yes, I was able to solve the issue! Thanks.

03-16-2022 11:37 PM

Hi @Hubert Dudek, thanks for your response!

03-17-2022 12:47 AM

Hi @Manasa Kalluri, thank you for the update. Would you like to mark @Hubert Dudek's answer as "Best"? That would help community members who find this thread later 😊
