Is it possible to use Autoloader with a daily upda...

04-08-2022 09:10 AM

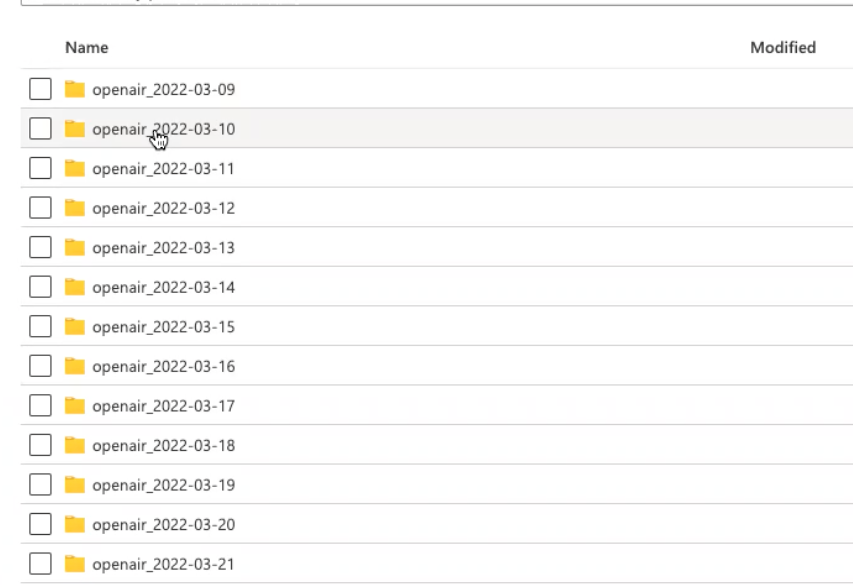

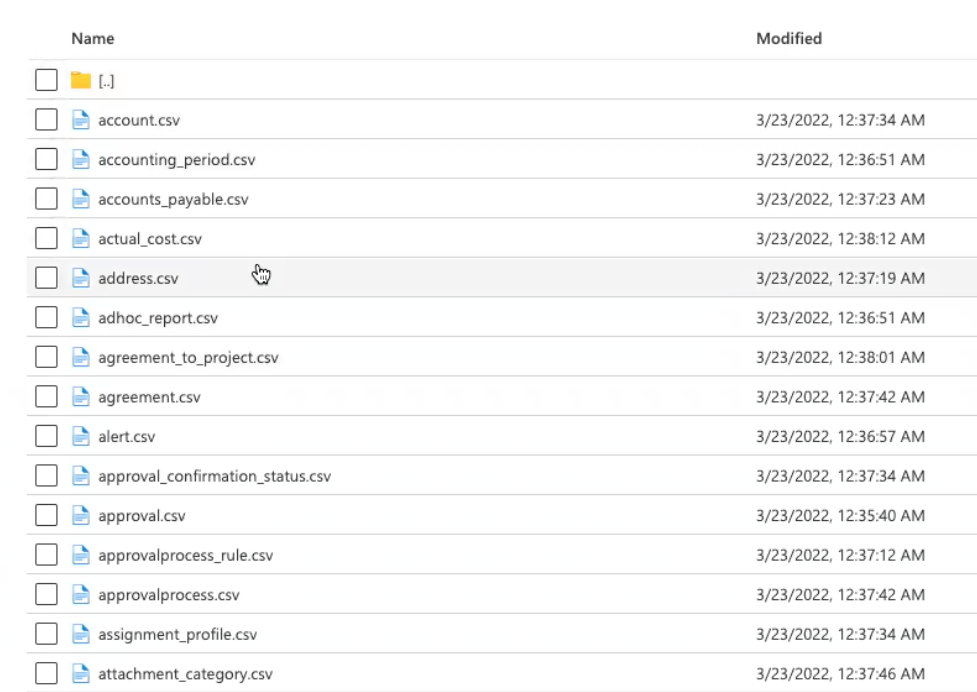

We get new files from a third party each day. The files could be the same or different. However, each day all CSV files arrive in the same dated folder. Is it possible to use Auto Loader on this structure?

Labels: Autoloader, Files

1 ACCEPTED SOLUTION


04-08-2022 09:25 AM

@Stephanie Rivera, you can use pathGlobFilter, but you will need a separate Auto Loader stream for each type of file.

    # One Auto Loader stream per file type; pathGlobFilter matches on the file name.
    df_alert = spark.readStream.format("cloudFiles") \
        .option("cloudFiles.format", "binaryFile") \
        .option("pathGlobFilter", "alert.csv") \
        .load(<base_path>)
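To make the "one stream per file type" idea concrete, here is a minimal sketch that builds one reader per file name. It is not the poster's exact code: it reads with the "csv" format instead of "binaryFile" so rows are parsed, and it assumes the files have a header row. The helper names (`autoloader_options`, `build_streams`) and the `schema_root` argument are hypothetical. The option keys `cloudFiles.format`, `cloudFiles.schemaLocation` and `pathGlobFilter` are the ones I would expect to need, but check them against your runtime.

```python
from typing import Dict, Iterable


def autoloader_options(file_name: str, schema_location: str) -> Dict[str, str]:
    """Reader options for one Auto Loader stream limited to a single file name."""
    return {
        "cloudFiles.format": "csv",                    # assumption: parse the files as CSV
        "cloudFiles.schemaLocation": schema_location,  # where the inferred schema is tracked
        "pathGlobFilter": file_name,                   # only pick up files with this name
        "header": "true",                              # assumption: files have a header row
    }


def build_streams(spark, base_path: str, file_names: Iterable[str], schema_root: str):
    """Return {file_name: streaming DataFrame}, one stream per file type."""
    streams = {}
    for name in file_names:
        opts = autoloader_options(name, f"{schema_root}/{name}")
        streams[name] = (
            spark.readStream.format("cloudFiles").options(**opts).load(base_path)
        )
    return streams
```

In a notebook this would be called with the active `spark` session, for example `build_streams(spark, base_path, ["alert.csv"], schema_root)`, and each returned DataFrame would then get its own `writeStream` with its own checkpoint location.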

I think I would prefer to run a copy activity first (in Azure Data Factory, for example) to group all files of the same type into one folder on the Data Lake. For example, alerts.csv is copied to the alerts folder and renamed to its date, giving alerts/2022-04-08.csv (or perhaps Parquet instead). I would then register that folder in the Databricks metastore so it is queryable with SELECT * FROM Alerts, or use a Delta Live Table to convert it. The copy activity in Azure Data Factory can also be set to detect only new files and copy them.
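The renaming rule in that copy step can be sketched in a few lines. This is only an illustration of the path mapping, not Azure Data Factory configuration. It assumes the dated folder is named in YYYY-MM-DD form, and the function name `grouped_target` and the `landing/` prefix are hypothetical.

```python
from datetime import date
from pathlib import PurePosixPath


def grouped_target(source_path: str) -> str:
    """Map <dated folder>/<name>.csv to <name>/<date>.csv."""
    src = PurePosixPath(source_path)
    # Assumption: the parent folder is an ISO date; raises ValueError otherwise.
    folder_date = date.fromisoformat(src.parent.name)
    return f"{src.stem}/{folder_date.isoformat()}{src.suffix}"


print(grouped_target("landing/2022-04-08/alerts.csv"))  # alerts/2022-04-08.csv
```

With every file type in its own folder, each folder can be registered as a table or read by a single stream without a file-name filter.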

04-26-2022 04:10 AM

Hi @Stephanie Rivera, just a friendly follow-up. Do you still need help, or did @Hubert Dudek's response help you find the solution? Please let us know.
