Databricks Runtime 10.4 LTS - AnalysisException: ...

04-12-2022 10:15 AM

Hello,

We are migrating from Databricks Runtime 9.1 LTS to 10.4 LTS, but we're running into strange behavior. Our existing code works on every runtime up to 10.3, but it stops working in 10.4.

Problem:

We have a nested JSON file that we flatten into a Spark DataFrame using the code below:

```python
from pyspark.sql import functions as F

# Explode the organization array, then explode each organization's ad_accounts array
adaccountsdf = (
    df.withColumn('Exp_Organizations', F.explode(F.col('organizations.organization')))
      .withColumn('Exp_AdAccounts', F.explode(F.col('Exp_Organizations.ad_accounts')))
      .select(
          F.col('Exp_Organizations.id').alias('organizationId'),
          F.col('Exp_Organizations.name').alias('organizationName'),
          F.col('Exp_AdAccounts.id').alias('adAccountId'),
          F.col('Exp_AdAccounts.name').alias('adAccountName'),
          F.col('Exp_AdAccounts.timezone').alias('timezone'),
      )
)
```

When we query the DataFrame, it works with either of the following selects (results hidden for confidentiality):

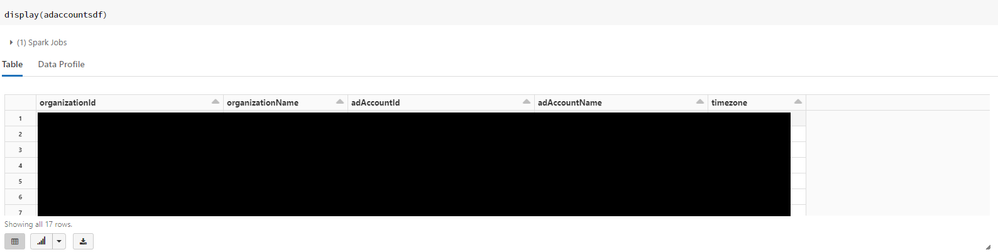

```python
display(adaccountsdf.select("*"))
```

OR

```python
display(adaccountsdf)
```

root

|-- organizationId: string (nullable = true)

|-- organizationName: string (nullable = true)

|-- adAccountId: string (nullable = true)

|-- adAccountName: string (nullable = true)

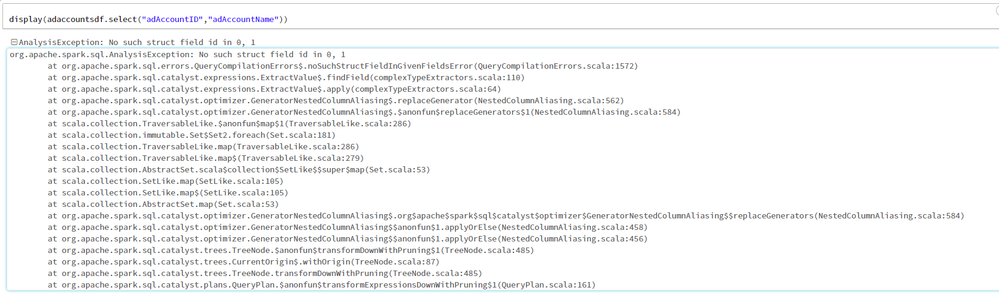

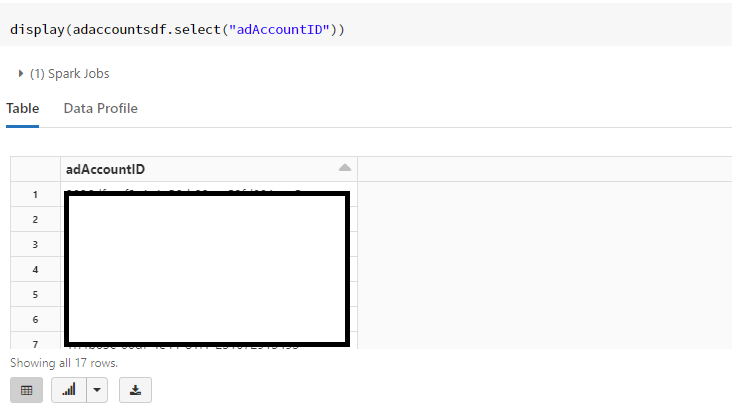

|-- timezone: string (nullable = true)so everything looks like it should. The moment we start selecting the last 3 fields(adAccountId, adAccountName and timezone) we get the following error:

Does anyone know why this is happening? It's a very strange error that only shows up in Databricks Runtime 10.4. All previous runtimes, including 10.3, 10.2, 10.1, and 9.1 LTS, work fine. The issue seems to be caused by using the explode function on an already exploded column in the DataFrame.
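To make the chained explodes concrete, here is a minimal plain-Python sketch of what the two `F.explode` calls in the flattening code produce. It is not Spark's implementation and does not reproduce or explain the 10.4 AnalysisException. It assumes the JSON shape implied by the column paths (`organizations.organization[]`, each element holding an `ad_accounts[]` array); the `flatten_ad_accounts` helper and the sample record are hypothetical. Exploding the already-exploded `Exp_Organizations` column simply yields one row per (organization, ad account) pair, and, like `F.explode`, an organization whose `ad_accounts` array is null or empty contributes no rows.

```python
from typing import Any, Dict, List


def flatten_ad_accounts(doc: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Hypothetical helper: mimic explode(organizations.organization) followed by
    explode(Exp_Organizations.ad_accounts), then the five-column select."""
    rows: List[Dict[str, Any]] = []
    # First explode: one row per element of organizations.organization.
    # None or an empty list yields no rows, mirroring F.explode on a null/empty array.
    organizations = (doc.get("organizations") or {}).get("organization") or []
    for org in organizations:
        # Second explode: one row per element of the exploded org's ad_accounts
        for acct in org.get("ad_accounts") or []:
            rows.append({
                "organizationId": org.get("id"),
                "organizationName": org.get("name"),
                "adAccountId": acct.get("id"),
                "adAccountName": acct.get("name"),
                "timezone": acct.get("timezone"),
            })
    return rows


# Hypothetical sample record (assumed shape, not the poster's confidential data)
sample = {
    "organizations": {
        "organization": [
            {"id": "o1", "name": "Org One", "ad_accounts": [
                {"id": "a1", "name": "Account A", "timezone": "UTC"},
                {"id": "a2", "name": "Account B", "timezone": "America/New_York"},
            ]},
            {"id": "o2", "name": "Org Two", "ad_accounts": []},
        ]
    }
}

rows = flatten_ad_accounts(sample)
for row in rows:
    print(row)
```

In this sketch, "Org Two" disappears entirely because its `ad_accounts` array is empty; in Spark, `F.explode_outer` would instead keep such an organization as a row with null ad-account columns.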

UPDATE:

For some reason, when I run adaccountsdf.cache() before my select statements, the issue disappears. I would still like to know what causes this issue in Runtime 10.4 but not in the other runtimes.

1 ACCEPTED SOLUTION

04-20-2022 08:59 AM

It seems the issue was miraculously resolved. I did not make any code changes, but everything now runs as expected.

Maybe the latest Runtime 10.4 fix, released on April 19, also resolved this issue unintentionally.

11 Replies

04-13-2022 11:42 AM

Hi @Emiel Smeenk,

This guide helps you migrate your Azure Databricks workloads to the latest version of Databricks Runtime 10.x.

Databricks recommends that you migrate your workloads to a supported Databricks Runtime LTS version from that version’s most recent supported LTS version.

Therefore, this article focuses on migrating workloads from Databricks Runtime 9.1 LTS to Databricks Runtime 10.4 LTS.

04-21-2022 03:55 AM

@Emiel Smeenk

We were facing the same issue, and it suddenly resolved itself from 2022-Apr-20 onwards.

Question: Is there a website where I can see or track these "patches"?

Edit: Added Question.

04-21-2022 11:34 PM

Hi @Nirupam Nishant and @Emiel Smeenk, this page lists the maintenance updates issued for Databricks Runtime releases.

April 19, 2022 - Maintenance updates

- We upgraded Java AWS SDK from version 1.11.655 to 1.12.1899.

- We fixed an issue with notebook-scoped libraries not working in batch streaming jobs.

- [SPARK-38616][SQL] Keep track of SQL query text in Catalyst TreeNode

- Operating system security updates.

04-26-2022 03:48 AM

Hi @Nirupam Nishant, just a friendly follow-up: do you still need help, or did my response help you find the solution? Please let us know.

04-26-2022 11:45 AM

@Kaniz Fatma

Your answer resolves my query. Thanks!

In addition, for fellow developers, I later noticed that these release notes are also available on the home screen of your Databricks workspace.

04-26-2022 12:45 PM

Hi @Nirupam Nishant, thank you for the update and the valuable tip for our community members. Since my answer resolves your query, would you like to mark it as the best answer?

04-26-2022 12:54 PM

@Kaniz Fatma I did not ask the original question.

@Emiel Smeenk asked and answered his own question, stating that the issue was fixed on its own (probably by the latest patch).

04-26-2022 01:03 PM

No worries, @Nirupam Nishant. Either of you can mark the best answer. Since @Emiel Smeenk answered the initial question himself, you can mark his answer as the best.

04-26-2022 01:16 PM

The issue resolved on its own, so I selected that as the best answer for this post.

Thanks,

Emiel

04-26-2022 01:17 PM

Awesome. Thank you, @Emiel Smeenk 😊.
