- Databricks write to Azure Data Explorer writes su...

06-01-2022 12:37 AM

I write to Azure Data Explorer using Spark Streaming. One day, writes suddenly became slower, and restarting had no effect.

I have a question about Spark Streaming to Azure Data Explorer.

Q1: What should I do to restore the performance?

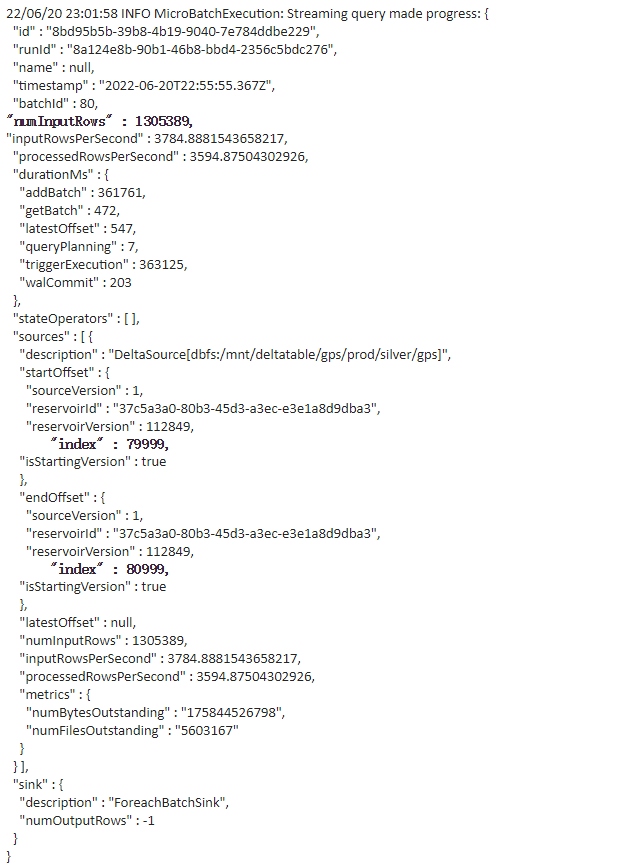

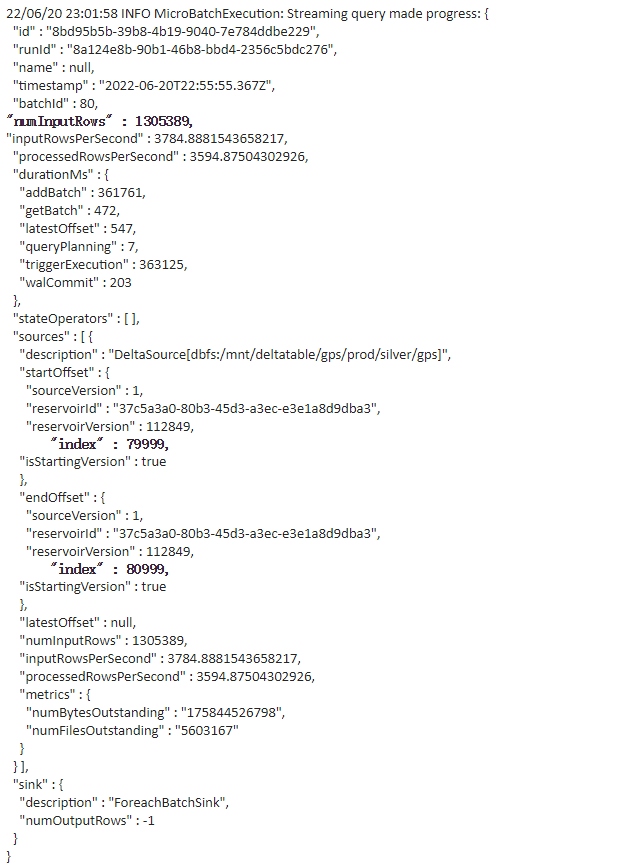

Figure 1 shows the performance of writing to the current table.

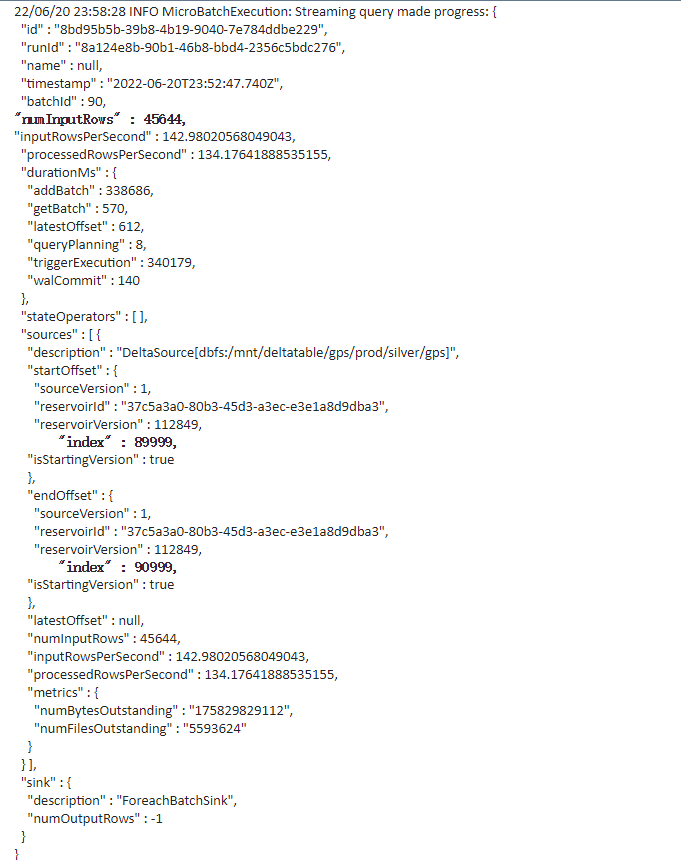

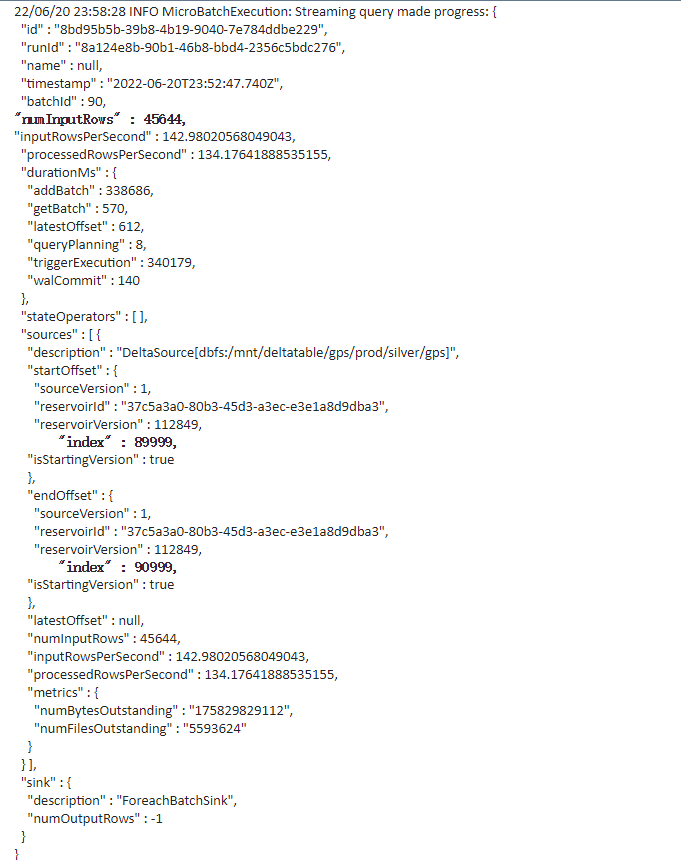

Figure 2 shows the performance of writing to a new table.

Figure 3 shows the performance of writing to the current table, but with a new checkpoint location.

Could the checkpoint location be the cause?

Labels: Azure, Data Explorer, Spark streaming, Write

1 ACCEPTED SOLUTION

03-27-2023 07:18 PM

I'm so sorry, I just thought the issue wasn't resolved

Solution

- Set maxFilesPerTrigger and maxBytesPerTrigger

- Enable auto optimize

Reason

On the first day the stream processes larger files, and later it processes smaller files.

Detailed reason

(1) Before the performance drop:

numInputRows = 1305389

Average records per file: 1305389 / 1000 = 1305.389

(2) After the performance drop:

numInputRows = 45644

Average records per file: 45644 / 1000 = 45.644

Comparing (1) and (2), the number of files read by each batch stays at 1000 before and after the performance drop (23:30), but after 23:30 the total number of rows in those 1000 files is much lower. Most likely the files have become smaller, so each batch reads fewer records. That is, on the first day the stream processes larger files, and later it processes smaller files.
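The arithmetic above can be checked with a few lines of Python. This is only a sketch: the function name `avg_rows_per_file` is hypothetical, and it assumes each micro-batch read 1000 files, as stated in the post. The `numInputRows` values are the ones quoted above.

```python
def avg_rows_per_file(num_input_rows: int, files_per_batch: int = 1000) -> float:
    """Average number of rows contributed by each file in one micro-batch."""
    if files_per_batch <= 0:
        raise ValueError("files_per_batch must be positive")
    return num_input_rows / files_per_batch


# numInputRows values quoted in the post, 1000 files per batch in both cases.
before = avg_rows_per_file(1305389)
after = avg_rows_per_file(45644)

# How many times fewer rows each batch carries after the drop.
shrink_factor = before / after

print(f"before: {before:.1f} rows/file")
print(f"after:  {after:.1f} rows/file")
print(f"each batch carries about {shrink_factor:.1f}x fewer rows")
```

With the file count fixed, throughput per batch follows the file size, which is why a limit on files alone does not keep the batch size steady.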

Suggestion:

https://docs.microsoft.com/en-gb/azure/databricks/delta/delta-streaming#limit-input-rate

https://docs.microsoft.com/en-us/azure/databricks/delta/optimizations/auto-optimize
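A minimal sketch of what the two suggestions could look like in PySpark, assuming the stream reads from a Delta table (both linked pages are Delta-specific). The helper names, the path, the table name, and the limit values are placeholders, not values from this thread. `maxFilesPerTrigger` and `maxBytesPerTrigger` are the Delta source options described in the first link; `delta.autoOptimize.optimizeWrite` and `delta.autoOptimize.autoCompact` are the table properties described in the second.

```python
def rate_limit_options(max_files: int = 1000, max_bytes: str = "1g") -> dict:
    """Delta streaming source options that cap how much each micro-batch reads."""
    return {
        "maxFilesPerTrigger": str(max_files),
        "maxBytesPerTrigger": max_bytes,
    }


def auto_optimize_sql(table_name: str) -> str:
    """SQL that turns on optimized writes and auto compaction for a Delta table."""
    return (
        f"ALTER TABLE {table_name} SET TBLPROPERTIES ("
        "delta.autoOptimize.optimizeWrite = true, "
        "delta.autoOptimize.autoCompact = true)"
    )


def read_rate_limited(spark, source_path: str, **limits):
    """Build a streaming DataFrame over a Delta path with the limits applied.

    `spark` is an existing SparkSession (on Databricks, the notebook's `spark`).
    """
    return (
        spark.readStream.format("delta")
        .options(**rate_limit_options(**limits))
        .load(source_path)
    )


# Hypothetical usage on a cluster (placeholder table name and path):
#   spark.sql(auto_optimize_sql("my_db.source_table"))
#   df = read_rate_limited(spark, "/mnt/placeholder/source",
#                          max_files=200, max_bytes="512m")
```

When both options are set, a micro-batch stops growing once either limit is reached, and `maxBytesPerTrigger` is a soft limit. Auto optimize would be enabled on the table the stream reads from, so that newly written files are compacted instead of arriving as many small files.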

Finally, a big thank you to the Databricks team and the Microsoft team for their technical support.

11 REPLIES

06-01-2022 05:47 AM

If the checkpoint location is in another region or on a different storage tier (think premium vs. standard storage), that could be the cause.

Can you check that?

06-02-2022 12:35 AM

Thank you for your answer.

I checked it.

The data source (AdxDF) and the checkpoint location are in the same container; only the path is different.

Azure Data Explorer and the data source are in the same region.

I have a new finding.

If I write to a new table, it is fast at first, but after a few hours of running it suddenly slows down.

I'll add a screenshot later.

06-02-2022 05:25 AM

Thank you @Rengar Lee for your update.

06-05-2022 08:36 PM

Are your data and checkpoint files stored in Blob storage or in an ADLS storage account?

06-05-2022 11:20 PM

It's Azure Data Lake Storage Gen2.

06-07-2022 04:21 AM

Could it be the stream query that is getting slow? Maybe checkpoint more often?

03-27-2023 07:22 PM

I'm very sorry for the late reply. This problem has been resolved; the specific reason is in another answer.

06-07-2022 06:42 PM

07-28-2022 05:35 PM

Do you have any more data to be processed? Have you checked the driver logs for any error messages? This sudden drop might point to another issue.

03-27-2023 07:22 PM

I'm very sorry for the late reply. This problem has been resolved; the specific reason is in another answer.
