Python Read csv - Don't consider comma when its within the quotes, even if the quotes are not immediate to the separator

06-09-2022 02:39 PM

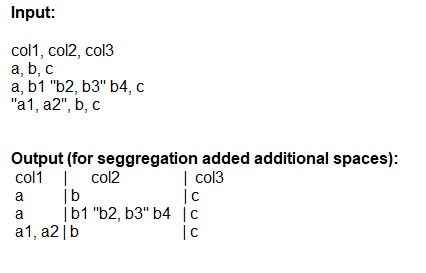

I have data like the sample below. When reading it as CSV, I don't want a comma to be treated as a separator when it is within quotes, even if the quotes are not immediately adjacent to the separator (as in record 2). Records 1 and 3 parse correctly with a comma separator, but record 2 fails.

Input:

col1, col2, col3

a, b, c

a, b1 "b2, b3" b4, c

"a1, a2", b, c

Output:

5 REPLIES

Anonymous

06-09-2022 04:39 PM

https://spark.apache.org/docs/latest/sql-data-sources-csv.html#data-source-option

Escape quotes is the config you're looking for.

06-10-2022 06:36 AM

Hi Joseph... I tried that, but the row a, b1 "b2, b3" b4, c needs to be parsed into 3 columns as shown below (expected output). Instead, the b-series data is split across 2 columns rather than kept in a single column. The requirement is to ignore the comma inside the quotes in the 2nd column.

Expected output:

1) a

2) b1 "b2, b3" b4

3) c

Actual output:

1) a

2) b1 "b2

3) b3" b4

Thanks,

Satya
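To make the requirement above concrete, here is a minimal sketch in plain Python (not Spark) of splitting a line only on commas that fall outside double quotes, wherever the quotes sit in the field. The helper name `split_outside_quotes` is hypothetical, and the sketch assumes quotes are balanced on each line and never escaped.

```python
import re

# Match a comma only when an even number of double quotes follows it,
# i.e. when the comma is not inside a quoted span.
# Assumption: quotes are balanced on each line and are never escaped.
COMMA_OUTSIDE_QUOTES = re.compile(r',(?=(?:[^"]*"[^"]*")*[^"]*$)')


def split_outside_quotes(line):
    """Split one line on unquoted commas and trim whitespace around each field."""
    return [field.strip() for field in COMMA_OUTSIDE_QUOTES.split(line)]


rows = [
    'a, b, c',
    'a, b1 "b2, b3" b4, c',
    '"a1, a2", b, c',
]

# The quote characters themselves are kept in the field values.
parsed = [split_outside_quotes(row) for row in rows]
for fields in parsed:
    print(fields)
```

With this approach the second record stays in three fields, with b1 "b2, b3" b4 as the middle one. The quotes are kept as part of the value, so the third record's first field still includes its surrounding quotes.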

06-29-2022 03:19 PM

The following approach can be taken:

- Change your delimiter from a comma to something else, such as a pipe or semicolon

- Set the escapeQuotes option to true when you use spark.read
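One way to read the first suggestion, if the file cannot be regenerated with a different delimiter at the source, is to rewrite only the unquoted commas before parsing. The following is a rough sketch in plain Python rather than Spark. The helper name `commas_to_pipes` is hypothetical, and the sketch assumes the data contains no pipe characters and that quotes are balanced on each line.

```python
import csv


def commas_to_pipes(line):
    """Replace commas outside double quotes with pipes (hypothetical helper)."""
    out = []
    in_quotes = False
    for ch in line:
        if ch == '"':
            # Toggle on every quote; assumes quotes are balanced and unescaped.
            in_quotes = not in_quotes
        out.append('|' if ch == ',' and not in_quotes else ch)
    return ''.join(out)


lines = [
    'a, b, c',
    'a, b1 "b2, b3" b4, c',
    '"a1, a2", b, c',
]

piped = [commas_to_pipes(line) for line in lines]

# QUOTE_NONE makes the reader treat quote characters as ordinary text,
# so the only separator left is the pipe.
records = list(
    csv.reader(piped, delimiter='|', quoting=csv.QUOTE_NONE, skipinitialspace=True)
)
```

After the rewrite, the remaining commas are all inside quotes, so any reader configured with a pipe separator and quoting disabled should see three fields per record.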

07-29-2022 11:40 AM

Hi @SATYANARAYANA ALAMANDA,

Just a friendly follow-up. Did any of the responses help you resolve your question? If so, please mark it as best. Otherwise, please let us know if you still need help.

08-04-2022 07:06 AM

Hi, I think you can use these options for the CSV reader:

spark.read.options(header = True, sep = ",", unescapedQuoteHandling = "BACK_TO_DELIMITER").csv("your_file.csv")

Note especially the unescapedQuoteHandling option. You can look up the other options at this link:

https://spark.apache.org/docs/latest/sql-data-sources-csv.html
