How to write Spark dataframe to Oracle database fr...

12-21-2022 10:10 PM


Accepted Solutions


12-21-2022 10:18 PM

We can use a JDBC driver to write a DataFrame to Oracle tables.

Download the Oracle JDBC Driver

You need an Oracle JDBC driver to connect to the Oracle server. Oracle ships the driver as separate jars built for different JDK versions (for example ojdbc6.jar, ojdbc8.jar, ojdbc11.jar); ojdbc6.jar is an older release, not the latest. Download the version that matches the JDK your cluster runs.
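Choosing the jar comes down to the cluster's Java version (running `java -version` in a `%sh` notebook cell shows it). As a minimal sketch, assuming Oracle's `ojdbc<N>.jar` naming where N is the JDK the jar was built for, the hypothetical helper `pick_ojdbc_jar` parses a `java.version` string and returns the newest jar whose target JDK is not newer than the runtime. The jar list is illustrative, not exhaustive; check Oracle's compatibility matrix before relying on it.

```python
import re

# Assumed mapping following Oracle's ojdbc<N>.jar naming, where N is the JDK
# the jar targets. Ordered newest first; illustrative, not exhaustive.
_OJDBC_BY_MIN_JDK = [
    (11, "ojdbc11.jar"),
    (10, "ojdbc10.jar"),
    (8, "ojdbc8.jar"),
    (7, "ojdbc7.jar"),
    (6, "ojdbc6.jar"),
]


def java_major_version(version: str) -> int:
    """Parse a java.version string: '1.8.0_362' -> 8, '17.0.2' -> 17."""
    parts = re.split(r"[._+-]", version)
    major = int(parts[0])
    # Java 8 and earlier report their version as 1.x
    return int(parts[1]) if major == 1 else major


def pick_ojdbc_jar(version: str) -> str:
    """Return the newest ojdbc jar whose target JDK is <= the runtime JDK."""
    major = java_major_version(version)
    for min_jdk, jar in _OJDBC_BY_MIN_JDK:
        if major >= min_jdk:
            return jar
    raise ValueError(f"No ojdbc jar known for Java {version}")
```

For example, a runtime reporting `1.8.0_362` maps to `ojdbc8.jar`, and one reporting `17.0.2` maps to `ojdbc11.jar`.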

You can download the driver from Oracle's official website (create an Oracle account first if you do not have one). Alternatively, pull it from Maven as a dependent library directly in the cluster or job configuration.

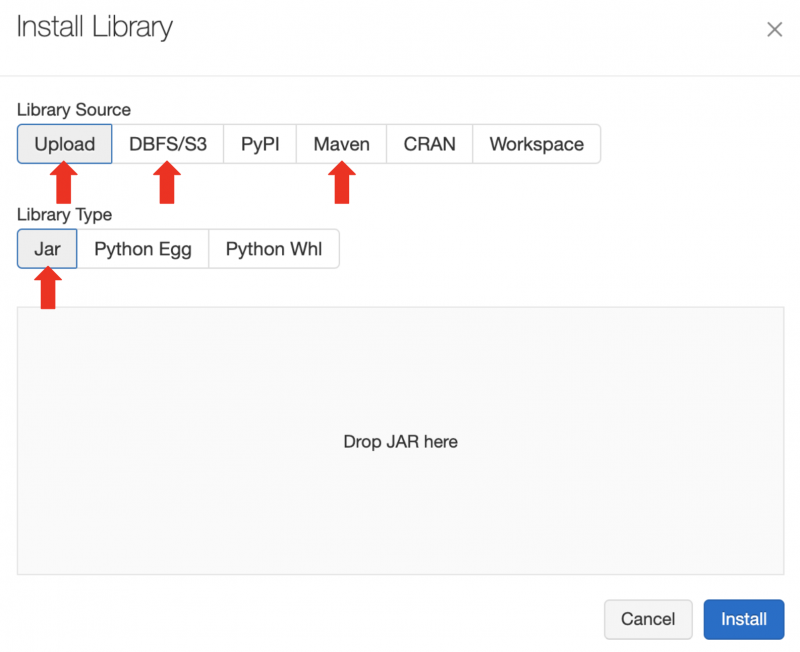

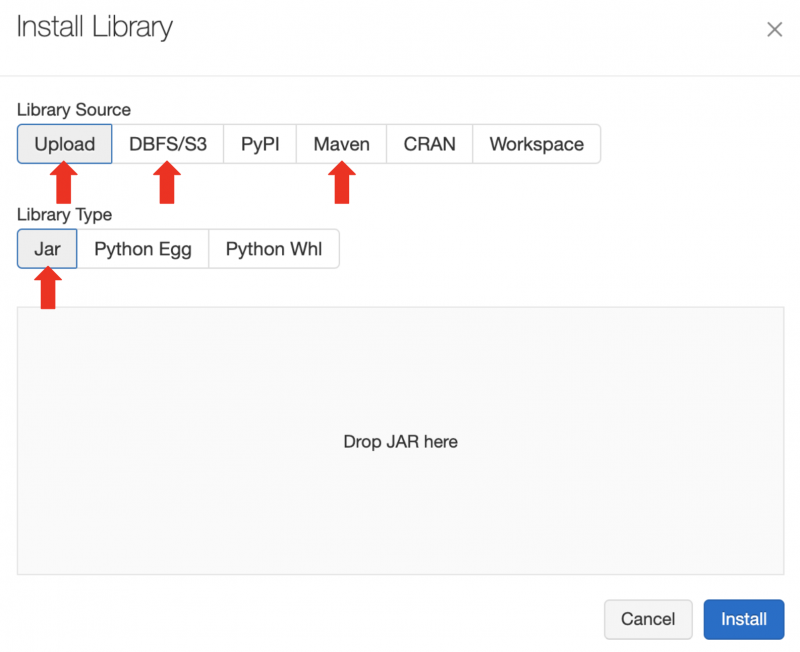

In the Databricks Clusters UI, install the driver .jar or Maven artifact as a cluster library, choosing Upload, DBFS, DBFS/S3, or Maven as the Library Source. Alternatively, use the Databricks Libraries API.
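If you prefer the Libraries API over the UI, the install call is a POST to `/api/2.0/libraries/install` with a cluster ID and a list of libraries. The sketch below uses only the Python standard library; the workspace host, token, cluster ID, and the Maven coordinates `com.oracle.database.jdbc:ojdbc8:19.3.0.0` are placeholders you would replace, and the function names are hypothetical.

```python
import json
import urllib.request


def build_install_payload(cluster_id: str, maven_coordinates: str) -> dict:
    """Request body for POST /api/2.0/libraries/install with one Maven library."""
    return {
        "cluster_id": cluster_id,
        "libraries": [{"maven": {"coordinates": maven_coordinates}}],
    }


def install_library(host: str, token: str, payload: dict) -> int:
    """Send the install request with a personal access token; return the HTTP status."""
    req = urllib.request.Request(
        url=f"{host.rstrip('/')}/api/2.0/libraries/install",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.status
```

For example, `build_install_payload("<cluster-id>", "com.oracle.database.jdbc:ojdbc8:19.3.0.0")` builds the body, which `install_library("https://<workspace-host>", "<token>", payload)` then sends. Installation happens asynchronously, so the library may take a moment to show as installed on the cluster.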

Load Spark DataFrame to Oracle Table Example

Now that the environment is set up, we can use the DataFrame.write method to load a DataFrame into Oracle tables.

For example, the following code establishes a JDBC connection to the Oracle database and appends the DataFrame's contents to the specified table.

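```python
# Assumes `df` is an existing Spark DataFrame and the Oracle JDBC driver
# jar is installed on the cluster.
df.write.format('jdbc').options(
    url='jdbc:oracle:thin:@192.168.11.100:1521:ORCL',  # host:port:SID
    driver='oracle.jdbc.driver.OracleDriver',
    dbtable='testschema.test',  # target table as schema.table
    user='testschema',
    password='password',
).mode('append').save()  # 'append' adds rows; Spark creates the table if it is missing
```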
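The same write can be wrapped in a small helper so the URL and credentials are not hard-coded. This is a sketch under assumptions: `oracle_jdbc_url` and `write_to_oracle` are hypothetical names, the service-name URL form (`@//host:port/service`) is offered as an alternative to the SID form used in the answer, and `batchsize` is Spark's JDBC option controlling how many rows go into each insert batch (Spark's default is 1000).

```python
from typing import Optional


def oracle_jdbc_url(host: str, port: int = 1521, sid: Optional[str] = None,
                    service_name: Optional[str] = None) -> str:
    """Build an Oracle thin-driver URL from exactly one of a SID or a service name."""
    if (sid is None) == (service_name is None):
        raise ValueError("pass exactly one of sid or service_name")
    if sid is not None:
        return f"jdbc:oracle:thin:@{host}:{port}:{sid}"  # SID form
    return f"jdbc:oracle:thin:@//{host}:{port}/{service_name}"  # service-name form


def write_to_oracle(df, url: str, table: str, user: str, password: str,
                    mode: str = "append", batch_size: int = 1000) -> None:
    """Write a Spark DataFrame to an Oracle table through the JDBC data source."""
    (df.write.format("jdbc")
        .option("url", url)
        .option("driver", "oracle.jdbc.driver.OracleDriver")
        .option("dbtable", table)
        .option("user", user)
        .option("password", password)
        .option("batchsize", batch_size)  # rows sent per JDBC batch insert
        .mode(mode)
        .save())
```

`oracle_jdbc_url("192.168.11.100", 1521, sid="ORCL")` reproduces the URL from the answer's example. On Databricks, passing `dbutils.secrets.get(scope="oracle", key="password")` as `password` (scope and key names hypothetical) keeps the credential out of the notebook source.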

Replies


12-21-2022 10:19 PM

Thanks for detailed explanation and example.


12-21-2022 11:10 PM

Hi @Prasanna Lakshmi, you can use the JDBC API to read and write data from Databricks.
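Reading goes through the same JDBC source via `spark.read`. A minimal sketch, assuming the same driver and connection details as in the accepted answer: the helper names are hypothetical, `fetchsize` is raised because Spark's documentation notes that Oracle's driver defaults to fetching only 10 rows per round trip, and the optional `partitionColumn`/`lowerBound`/`upperBound`/`numPartitions` options split the read into parallel range queries on a numeric, date, or timestamp column.

```python
from typing import Optional


def jdbc_read_options(url: str, table: str, user: str, password: str,
                      fetch_size: int = 1000,
                      partition_column: Optional[str] = None,
                      lower_bound: Optional[int] = None,
                      upper_bound: Optional[int] = None,
                      num_partitions: Optional[int] = None) -> dict:
    """Collect Spark JDBC reader options as a dict of strings."""
    opts = {
        "url": url,
        "driver": "oracle.jdbc.driver.OracleDriver",
        "dbtable": table,
        "user": user,
        "password": password,
        # Oracle's driver fetches 10 rows per round trip unless told otherwise
        "fetchsize": str(fetch_size),
    }
    if partition_column is not None:
        # Spark needs the bounds and numPartitions whenever partitionColumn is set
        if None in (lower_bound, upper_bound, num_partitions):
            raise ValueError("lower_bound, upper_bound and num_partitions "
                             "are required with partition_column")
        opts.update({
            "partitionColumn": partition_column,
            "lowerBound": str(lower_bound),
            "upperBound": str(upper_bound),
            "numPartitions": str(num_partitions),
        })
    return opts


def read_from_oracle(spark, options: dict):
    """Load an Oracle table into a Spark DataFrame through the JDBC data source."""
    return spark.read.format("jdbc").options(**options).load()
```

For instance, passing `partition_column="ID", lower_bound=1, upper_bound=100000, num_partitions=8` would issue eight range queries on a hypothetical `ID` column. The bounds only decide how the range is split; rows outside them are still read.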
