Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Data Engineering

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks

- Data Engineering

- Using current_timestamp as a default value in a de...

Options

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-17-2023 11:50 AM

I want to add a column to an existing delta table with a timestamp for when the data was inserted. I know I can do this by including current_timestamp with my SQL statement that inserts into the table.

Is it possible to add a column to an existing delta table with a default value of current_timestamp so I don't have to include the timestamp when writing data to the table? I have tried doing it but it doesn't seem to populate the column when I insert into the table.

Here is what I have tried so far:

ALTER TABLE tableName

ADD COLUMN InsertUTC timestamp DEFAULT current_timestamp

Labels:

- Labels:

-

Default Value

-

Delta table

-

SQL

1 ACCEPTED SOLUTION

Accepted Solutions

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

03-10-2023 01:38 AM

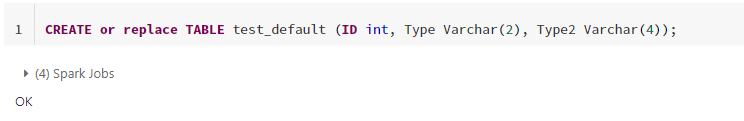

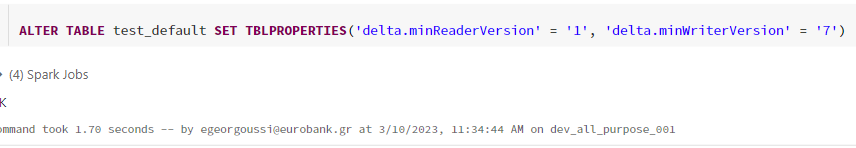

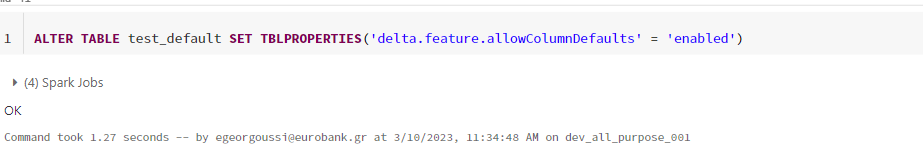

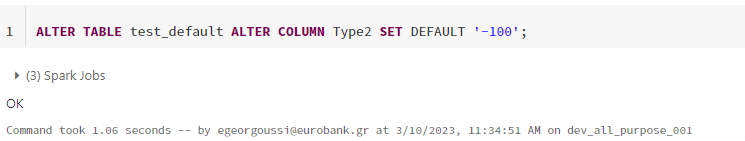

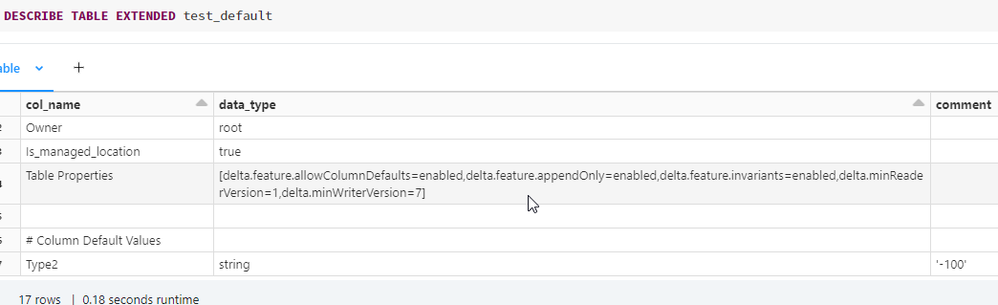

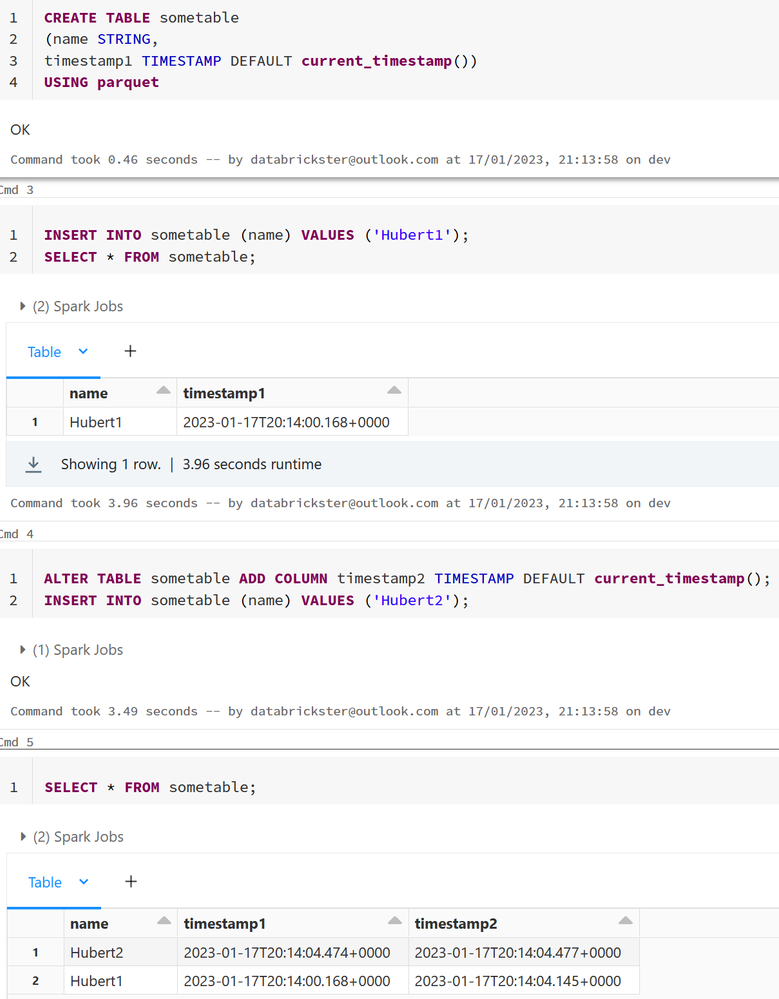

Hello - Just an update from the latest office hours. They mentioned that default value is available for Delta tables as well starting from release 12.2 Databricks Runtime 11.3 LTS | Databricks on AWS. However, I was able to set it up only in the following way. It wasn't allowed in the Create statement directly.

10 REPLIES 10

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-17-2023 12:15 PM

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-17-2023 12:46 PM

Thanks for the response Hubert, hopefully the functionality for delta tables will be added soon.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

03-10-2023 01:38 AM

Hello - Just an update from the latest office hours. They mentioned that default value is available for Delta tables as well starting from release 12.2 Databricks Runtime 11.3 LTS | Databricks on AWS. However, I was able to set it up only in the following way. It wasn't allowed in the Create statement directly.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

03-10-2023 10:11 AM

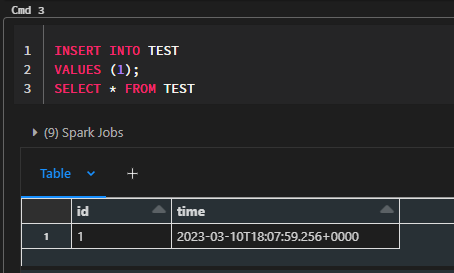

Thanks for this, I tried it out on my end with a cluster running DBR 12.2 LTS and I was able to get it to work.

CREATE TABLE test (id int, time timestamp);

ALTER TABLE test

SET TBLPROPERTIES('delta.minReaderVersion' = '1', 'delta.minWriterVersion'='7');

ALTER TABLE test SET TBLPROPERTIES('delta.feature.allowColumnDefaults' = 'enabled');

ALTER TABLE test ALTER COLUMN time SET DEFAULT CURRENT_TIMESTAMP()Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

04-28-2023 09:05 AM

My time column would have the default value of current_timestamp() if I went to the table properties after doing the identical action in the same DBR 12.2 LTS runtime.

However, it would fail if I attempted to add data to a target table that had one more column (the default time column) than the values I was adding to it : Cannot write to 'spark_catalog.gold.xxxxxxx', not enough data columns; target table has 19 column(s) but the inserted data has 18 column(s).

Do you know why this might be occurring?

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

04-28-2023 01:05 PM

How are you adding values to the table? I have encountered that issue previously when I didn't specify columns in an INSERT statement where the table I was writing to had an identity column that is automatically generated.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

12-27-2023 03:14 AM - edited 12-27-2023 03:16 AM

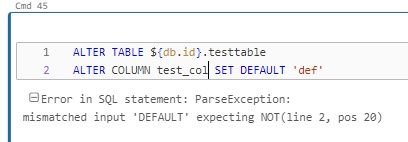

Hi All,

I am unable to run this

ALTER TABLE TABLE_NAME alter column column_name set default 'value'

as shown in the solution.

I was able to run the previous queries. But here, I am getting an error.

Can someone suggest a solution, I have a table with 20+ columns and I want to set '0' as default for majority of them.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

03-10-2023 02:38 AM

-- Alter the table to use the GENERATED ALWAYS functionality for the created_at column

ALTER TABLE example_table

ADD COLUMN created_at TIMESTAMP GENERATED ALWAYS AS CURRENT_TIMESTAMP();@Michael Burch Hi , Did you try using GENERATED ALWAYS feature. That can do satisfy your requirements I believe. With this, you don't need to insert a separate value for this column every time you load data into the table.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

03-10-2023 09:55 AM

That doesn't work for me, I get the following error:

[PARSE_SYNTAX_ERROR] Syntax error at or near 'GENERATED'(line 2, pos 32).

It sounds like delta lake generated columns have to be based on other columns in the table:

Delta Lake supports generated columns which are a special type of column whose values are automatically generated based on a user-specified function over other columns in the Delta table.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

12-28-2023 10:39 PM

Did this work for you in a databricks notebook?

Welcome to Databricks Community: Lets learn, network and celebrate together

Join our fast-growing data practitioner and expert community of 80K+ members, ready to discover, help and collaborate together while making meaningful connections.

Click here to register and join today!

Engage in exciting technical discussions, join a group with your peers and meet our Featured Members.

Related Content

- One-time backfill for DLT streaming table before apply_changes in Data Engineering

- DLT refresh unexpectedly failing in Data Engineering

- Databricks autocomplete uses hive_metastore catalog although we have other default catalog in Data Governance

- Read Structured Streaming state information in Warehousing & Analytics

- @dlt.table throws error AttributeError: module 'dlt' has no attribute 'table' in Data Engineering