Is it possible to disable jdbc/odbc connection to ...


01-30-2023 06:19 AM

Hi,

I want to know whether it is possible to disable JDBC/ODBC connections to an (Azure) Databricks cluster, so that no (downloaded) tools can connect this way.

Thanks in advance,

Oscar

Labels: Odbc Connection

Accepted Solution


01-30-2023 07:58 AM

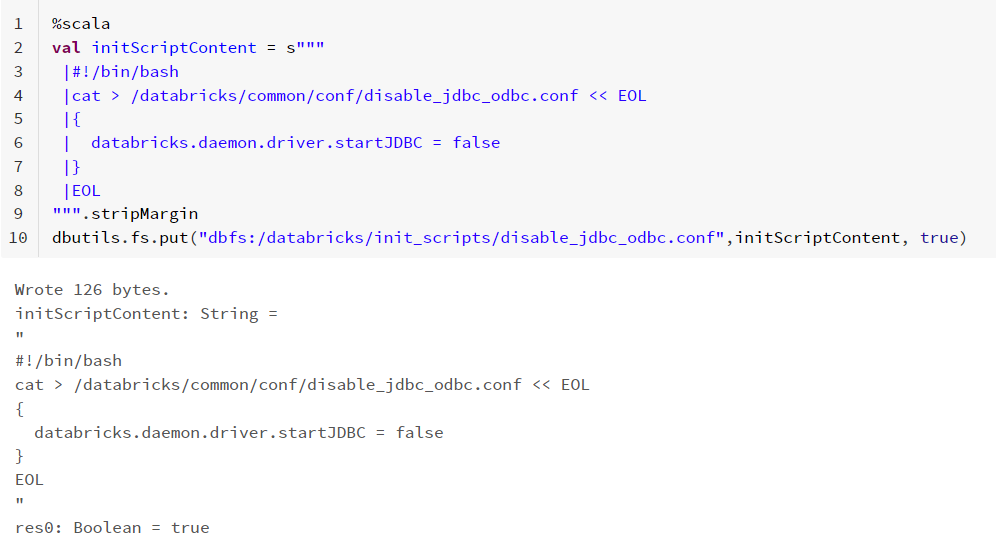

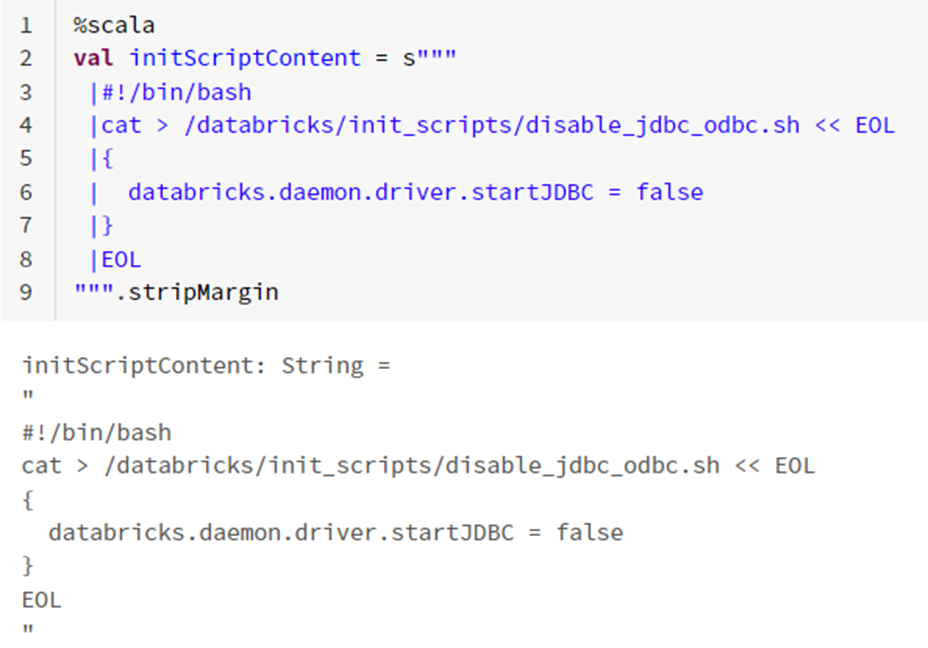

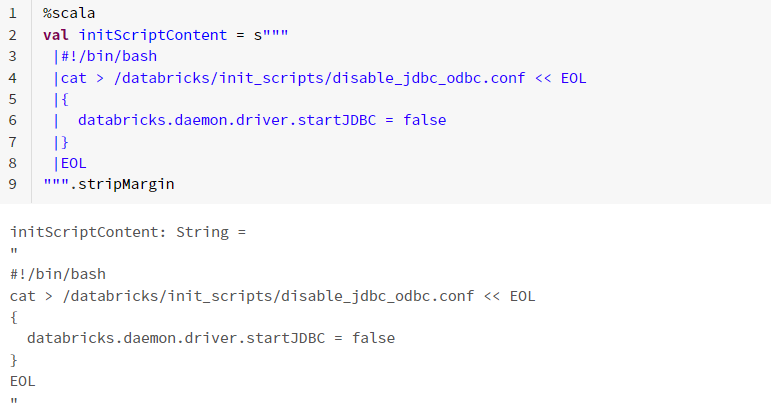

@Oscar van Zelst, apart from using configuration settings to restrict access, you can also use an init script to disable JDBC/ODBC connections on a cluster. The script is:

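```scala
%scala

// Build a bash init script that writes a config file containing
// databricks.daemon.driver.startJDBC = false.
// stripMargin removes the leading "|" margins, so the shebang lands on line 1.
val initScriptContent = """|#!/bin/bash
  |cat > /databricks/common/conf/disable_jdbc_odbc.conf << EOL
  |{
  |  databricks.daemon.driver.startJDBC = false
  |}
  |EOL
  |""".stripMargin

// Save the script to DBFS (overwrite = true) so it can be attached to a cluster as an init script.
dbutils.fs.put("dbfs:/databricks/init_scripts/disable_jdbc_odbc.conf", initScriptContent, true)
```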
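A shebang is only honored on the very first line of a script, and a `<< EOL` heredoc is closed only by a line containing exactly `EOL`, so it can be worth checking the generated text before saving it. The sketch below is plain Scala with no Databricks dependency; the object name `InitScriptCheck` and its two checks are illustrative assumptions, while the config path and key are copied unchanged from the answer above (not verified against Databricks documentation).

```scala
// Hypothetical helper for sanity-checking init-script text before it is
// written with dbutils.fs.put; it does not talk to Databricks at all.
object InitScriptCheck {

  // Same script body as in the answer, built with stripMargin so that the
  // shebang is the first line (no leading blank line).
  val content: String =
    """|#!/bin/bash
       |cat > /databricks/common/conf/disable_jdbc_odbc.conf << EOL
       |{
       |  databricks.daemon.driver.startJDBC = false
       |}
       |EOL
       |""".stripMargin

  // Returns the problems found; an empty list means both basic checks passed.
  def problems(script: String): List[String] = {
    val lines = script.linesIterator.toList
    val shebang =
      if (lines.headOption.contains("#!/bin/bash")) Nil
      else List("first line is not #!/bin/bash")
    // The heredoc opened with `<< EOL` is only closed by a line that is exactly "EOL".
    val terminator =
      if (lines.contains("EOL")) Nil
      else List("heredoc terminator EOL not found at column 0")
    shebang ++ terminator
  }
}
```

In a notebook, one could call `InitScriptCheck.problems(initScriptContent)` before `dbutils.fs.put` and only write the file when the returned list is empty.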

13 Replies



01-30-2023 10:09 AM

Hi @Oscar van Zelst,

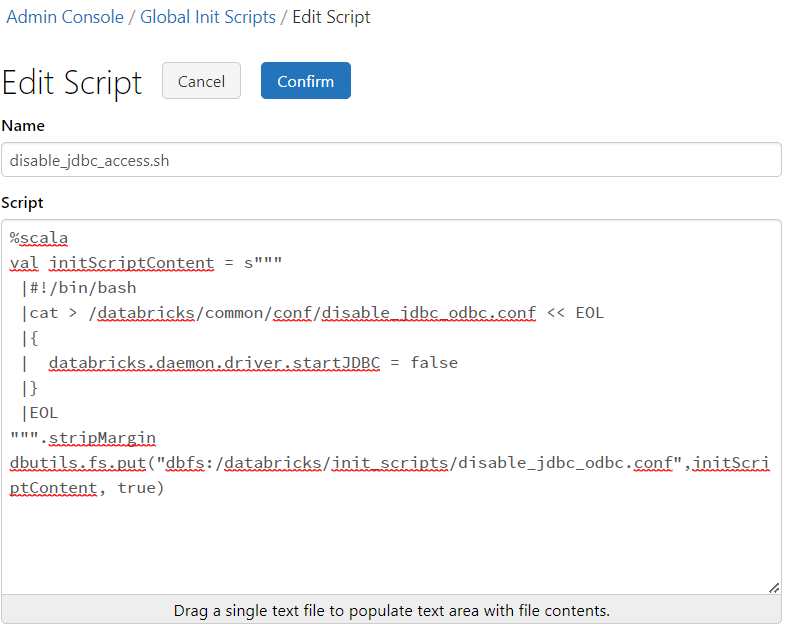

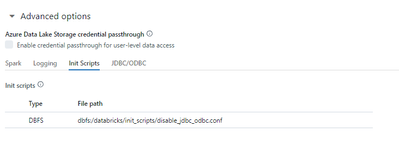

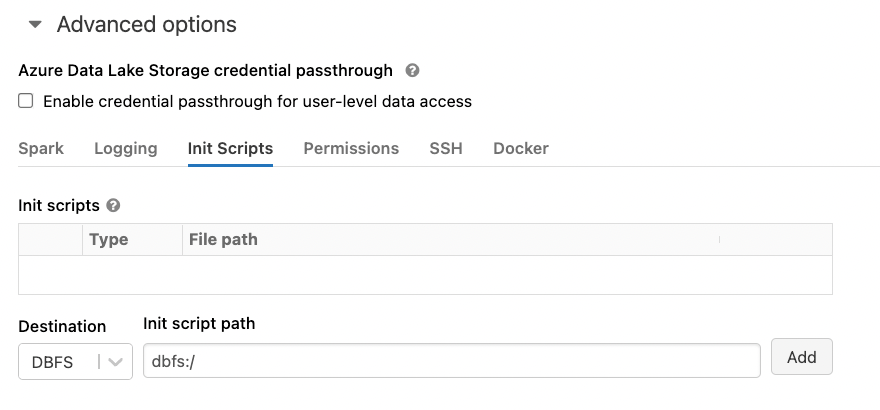

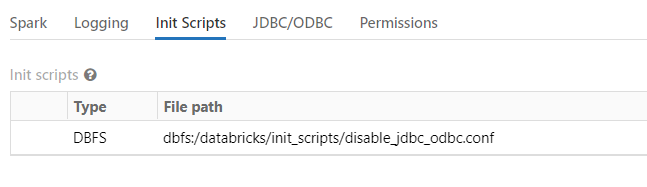

You can use cluster-scoped init scripts in the cluster configuration, as seen in the screenshots or in these docs.
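If clusters are created or edited through the Clusters REST API instead of the UI, the same cluster-scoped attachment goes into the `init_scripts` field of the cluster spec. A minimal sketch that renders that fragment for the DBFS path used in this thread, assuming the `{"dbfs": {"destination": ...}}` shape of the Clusters API; `InitScriptSpec` is a hypothetical name, and since Databricks has been moving away from DBFS-hosted init scripts, check the current API docs for your workspace.

```scala
// Hypothetical helper: renders the `init_scripts` part of a Clusters API
// cluster spec for one DBFS-hosted init script. No JSON library is used,
// so the path is rejected if it would need JSON escaping.
object InitScriptSpec {

  def dbfsInitScripts(destination: String): String = {
    require(destination.startsWith("dbfs:/"), s"expected a dbfs:/ path, got $destination")
    require(!destination.exists(c => c == '"' || c == '\\'), "path must not need JSON escaping")
    s"""{"init_scripts": [{"dbfs": {"destination": "$destination"}}]}"""
  }
}
```

Building the string by hand keeps the sketch dependency-free; a real client would normally serialize the whole cluster spec with a JSON library.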


01-30-2023 10:11 AM

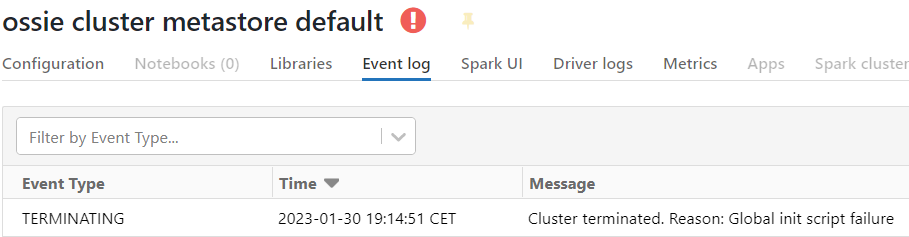

This is the error I get when I add this as a global init script: the cluster won't start.


01-30-2023 10:12 AM

Can you try using cluster-scoped init scripts?


01-30-2023 10:21 AM

@Oscar van Zelst, try using a cluster-scoped init script.


01-30-2023 11:58 PM

Hi,

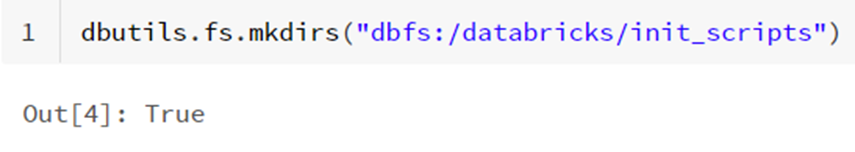

I have followed your advice, but no luck. These are the steps I took:

```
total 4
-rw-r--r-- 1 root root 43 Jan 31 07:43 20230131_074358_00_disable_jdbc_odbc.sh.stdout.log
-rw-r--r-- 1 root root 0 Jan 31 07:43 20230131_074358_00_disable_jdbc_odbc.sh.stderr.log
root@0123-123553-quwe076y-10-215-1-4:/databricks/init_scripts# more 20230131_074358_00_disable_jdbc_odbc.sh.stdout.log
databricks.daemon.driver.startJDBC = false
```

But I am still able to connect via JDBC. What am I doing wrong?


01-31-2023 04:30 AM

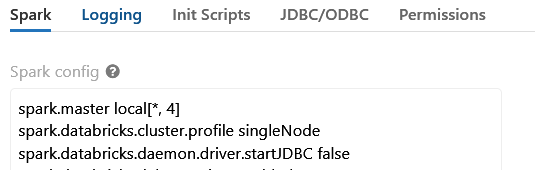

Also, in /tmp/custom-spark.conf you can see that it is set:

```
root@0126-125139-sxrs37zl-10-215-1-5:/databricks/driver# cat /tmp/custom-spark.conf
...
spark.master local[*, 4]
spark.databricks.passthrough.enabled true
spark.databricks.cloudfetch.hasRegionSupport true
spark.databricks.daemon.driver.startJDBC false
```
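To compare the value written to `/tmp/custom-spark.conf` with what the running session reports, it can help to extract it programmatically. A minimal sketch, assuming the file holds one `key value` pair per line separated by whitespace, as in the excerpt above; `SparkConfFile` is a hypothetical name.

```scala
// Hypothetical helper: parses "key value" lines such as those in
// /tmp/custom-spark.conf into a Map. Blank lines, '#' comments, and lines
// without a value (like the "..." placeholder in the excerpt) are skipped.
object SparkConfFile {

  def parse(text: String): Map[String, String] =
    text.linesIterator
      .map(_.trim)
      .filter(line => line.nonEmpty && !line.startsWith("#"))
      .flatMap { line =>
        // Split once on the first run of whitespace: key, then the remainder as value.
        line.split("\\s+", 2) match {
          case Array(key, value) => Some(key -> value.trim)
          case _                 => None
        }
      }
      .toMap

  // Convenience lookup for the key discussed in this thread.
  def startJdbc(text: String): Option[String] =
    parse(text).get("spark.databricks.daemon.driver.startJDBC")
}
```

In a notebook, the file could be read with `scala.io.Source.fromFile("/tmp/custom-spark.conf")` (closing the source afterwards) and the parsed value compared with `spark.conf.getOption("spark.databricks.daemon.driver.startJDBC")`.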


01-31-2023 07:46 AM

@Oscar van Zelst, are you saving the code as a .conf file, as seen in the code snippet?

```scala
dbutils.fs.put("dbfs:/databricks/init_scripts/disable_jdbc_odbc.conf", initScriptContent, true)
```

01-31-2023 10:18 AM

Hi,

I have done this:

```
root@0123-123553-quwe076y-10-215-1-4:/databricks/init_scripts# ls -ltr
total 4
-rw-r--r-- 1 root root 0 Jan 31 16:04 20230131_160437_00_disable_jdbc_odbc.conf.stdout.log
-rw-r--r-- 1 root root 0 Jan 31 16:04 20230131_160437_00_disable_jdbc_odbc.conf.stderr.log
-rw-r--r-- 1 root root 49 Jan 31 16:04 disable_jdbc_odbc.conf
root@0123-123553-quwe076y-10-215-1-4:/databricks/init_scripts# cat disable_jdbc_odbc.conf
{
databricks.daemon.driver.startJDBC = false
}
```

But the cluster is still accessible via JDBC/ODBC connections. If there are other methods to disable access, that is also fine.


01-31-2024 07:06 AM - edited 01-31-2024 07:13 AM

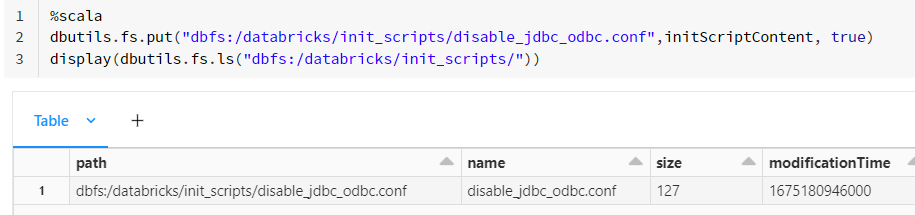

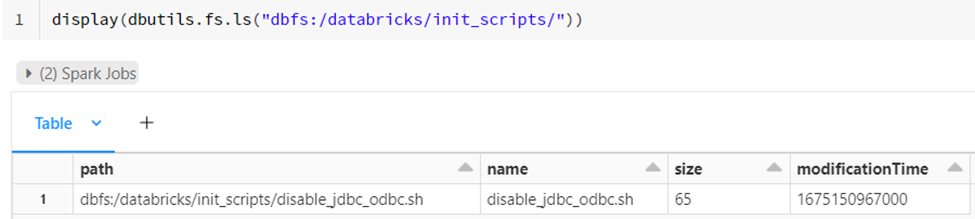

Hi @OvZ & @LandanG, as a point of clarification, the script provided is intended to be run in a notebook first. Running the code below in a notebook creates the init script at the location "dbfs:/databricks/init_scripts/disable_jdbc_odbc.conf".

%scala

val initScriptContent = s"""

|#!/bin/bash

|cat > /databricks/common/conf/disable_jdbc_odbc.conf << EOL

|{

| databricks.daemon.driver.startJDBC = false

|}

|EOL

""".stripMargin

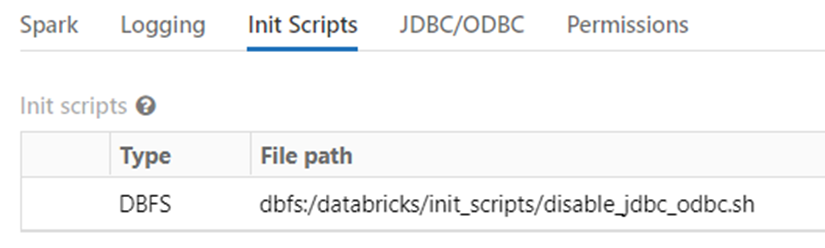

dbutils.fs.put("dbfs:/databricks/init_scripts/disable_jdbc_odbc.conf",initScriptContent, true)From there, it can be added as a cluster-scoped init script under Advanced options:

After cluster startup, you will notice that the JDBC/ODBC tab does not appear in the Spark UI:

JDBC/ODBC connections will also fail with a 502 error:
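To reproduce that check from a client, you need the cluster's JDBC connection details, which are listed on the cluster's Advanced options > JDBC/ODBC tab. For an all-purpose cluster the HTTP path typically has the form `sql/protocolv1/o/<workspace-id>/<cluster-id>`. A minimal sketch that assembles a test URL, assuming the `jdbc:databricks://<host>:443;httpPath=...;AuthMech=3` layout of the Databricks JDBC driver with personal-access-token authentication; `ClusterJdbcUrl` and all argument values are hypothetical placeholders, so prefer the exact URL shown on that tab.

```scala
// Hypothetical helper: builds a JDBC URL for testing whether a cluster still
// accepts JDBC connections. The layout is an assumption based on the
// commonly documented Databricks JDBC driver format.
object ClusterJdbcUrl {

  // HTTP path of an all-purpose cluster: workspace (org) ID, then cluster ID.
  def httpPath(workspaceId: String, clusterId: String): String =
    s"sql/protocolv1/o/$workspaceId/$clusterId"

  // The access token is deliberately not embedded; pass it as a connection property.
  def url(host: String, workspaceId: String, clusterId: String): String =
    s"jdbc:databricks://$host:443;httpPath=${httpPath(workspaceId, clusterId)};AuthMech=3"
}
```

A connection attempt would then look roughly like `java.sql.DriverManager.getConnection(url, props)`, with the driver on the classpath and `props` holding `UID` set to `token` and `PWD` set to a personal access token. Based on the reply above, once the init script is active this attempt is expected to fail with a 502 error instead of connecting.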
