Information about stopping JDBC connection

02-08-2023 08:22 AM

Hi everyone!

I would like to know how Spark closes the connection when reading from a SQL database using the JDBC format.

Also, if there is a way to check whether the connection is active, or to close it manually, I would like to know that too.

Thank you in advance!

Labels: %sh, Connection, INFORMATION, Jdbc, Spark

3 REPLIES

02-09-2023 09:27 PM

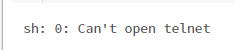

Hi, the connection will be active; it connects through the JDBC hostname/IP and port number. You can check connectivity by running the following in a notebook cell: %sh telnet <jdbcHostname> <jdbcPort>

Please refer to: https://docs.databricks.com/external-data/jdbc.html
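If you would rather run the same kind of check from a Python cell, here is a minimal sketch using only the standard library. The function name `can_reach` and the 5-second default timeout are my own choices for illustration, not part of Databricks or Spark. Like telnet, it only shows whether the host and port accept a TCP connection from the machine where the code runs; it does not tell you whether Spark currently holds an open JDBC connection.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened.

    Hypothetical helper: this tests network reachability only, not
    database login and not the state of any Spark JDBC connection.
    """
    try:
        # create_connection resolves the host and attempts a TCP connect;
        # the socket is closed again as soon as the with-block exits.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False


# Example call (replace the placeholders with your own values):
# can_reach("<jdbcHostname>", <jdbcPort>)
```

A result of `False` usually points to a firewall, routing, or hostname problem rather than anything in the JDBC options themselves.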

Please let us know if this helps.

02-10-2023 01:40 AM

Hi, thank you for your help! I got that error

Anonymous

04-08-2023 12:30 AM

Hi @João Peixoto

Hope everything is going great.

Just wanted to check in and see whether you were able to resolve your issue. If so, would you be happy to mark an answer as best so that other members can find the solution more quickly? If not, please tell us so we can help you.

Cheers!
