- 3883 Views

- 3 replies

- 3 kudos

Feature request: Ability to delete local branches in git folders

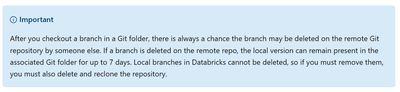

According to the documentation (https://learn.microsoft.com/en-us/azure/databricks/repos/git-operations-with-repos): "Local branches in Databricks cannot be deleted, so if you must remove them, you must also delete and reclone the repository." Creating a...

Also, rather than switching between dev branches, you can create a separate Git folder for each dev branch you work on. Those Git folders can be deleted after the branches are merged.

- 5414 Views

- 3 replies

- 1 kudos

Resolved! How to check a specific table for its VACUUM retention period

I'm looking for a way to query the VACUUM retention period for a specific table. It does not show up with DESCRIBE DETAIL <table_name>;

Hi @WWoman, the default retention period is 7 days and, per the documentation, it is controlled by the 'delta.deletedFileRetentionDuration' table property. If there is no delta.deletedFileRetentionDuration table property, the table uses the default, so 7 ...
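As a rough sketch of the check described in this reply: in a Databricks notebook, `SHOW TBLPROPERTIES <table_name>` lists a table's properties as key/value pairs, and the helper below picks out `delta.deletedFileRetentionDuration` or falls back to the 7-day default mentioned in the reply. The helper name `effective_vacuum_retention`, the `spark` session, and the table name `my_table` are assumptions for illustration.

```python
RETENTION_KEY = "delta.deletedFileRetentionDuration"
DEFAULT_RETENTION = "interval 7 days"  # default stated in the reply


def effective_vacuum_retention(properties: dict) -> str:
    """Return the table's retention setting, or the default if it is unset."""
    return properties.get(RETENTION_KEY, DEFAULT_RETENTION)


# In a notebook (assumes a `spark` session and a hypothetical table `my_table`):
#   rows = spark.sql("SHOW TBLPROPERTIES my_table").collect()
#   props = {row["key"]: row["value"] for row in rows}
#   print(effective_vacuum_retention(props))
```

If the property is absent from the output, the helper reports the default rather than an empty result.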

- 5978 Views

- 2 replies

- 1 kudos

Removal of account admin

Hi, I'm having issues removing an account admin (probably the first one, which the Databricks account was originally tied to). Under user management, when I hit the delete user button, it prompts: Either missing permissions to delete <user_email> or deleting...

This error typically occurs when attempting to remove the 'account owner.' The account owner is the user who originally set up the Databricks account. This entitlement is attached to a user so that there is always at least one account admin who can a...

- 2687 Views

- 2 replies

- 0 kudos

Resolved! How to optimize Workflow job startup time

We want to create a workflow pipeline in which we trigger a Databricks workflow job from AWS. However, the startup time of Databricks workflow jobs on job compute is over 10 minutes, which is causing issues. We would like to either avoid this startup ...

Hi @Rahul14Gupta, One option is to use serverless job clusters, which can significantly reduce the startup time. Serverless clusters are designed to start quickly and can be a good fit for workloads that require fast initialization. But you can actua...

- 5250 Views

- 1 replies

- 0 kudos

Databricks Bundles - Terraform state management

Hello, I had a look at DABs today and it seems they are using Terraform under the hood. The state is stored in Databricks Workspace, in the bundle deployment directory. Is it possible to use just the state management functionality that DABs must have ...

While it may be possible to use the state management functionality provided by DABs using Terraform, it would require additional effort to synchronize your code and manage state consistently. The choice would depend on the use case and we should keep...

- 2442 Views

- 3 replies

- 3 kudos

How to tag a Databricks workspace to keep track of AWS resources

How can I add tags to a Databricks workspace so that the tags propagate to all cloud resources created for or by the workspace, in order to keep track of their costs?

Hello! To add tags to a Databricks workspace and ensure they propagate to all cloud resources created for or by the workspace, log in to your Databricks workspace and navigate to the workspace settings or administration console. Use the tag interface ...

- 8236 Views

- 11 replies

- 3 kudos

"Azure Container Does Not Exist" when cloning repositories in Azure Databricks

Good morning, I need some help with the following issue: I created a new Azure Databricks resource using the vnet-injection procedure. (here) I then proceeded to link my Azure Devops account using a personal account token. If I try to clone a reposito...

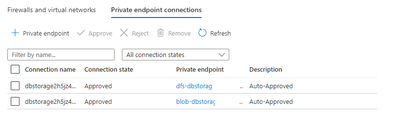

Yes, the container is indeed the container in the storage account deployed by the Databricks instance into the managed resource group. This storage account is part of the managed resource group associated with your Azure Databricks workspace. If the ...

- 8267 Views

- 1 replies

- 3 kudos

Databricks Cluster Failed to Start - ADD_NODES_FAILED (Solution)

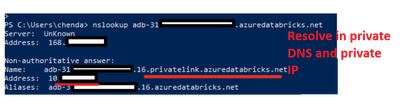

We recently ran into an issue where classic compute clusters could not start. With the Databricks team's help in troubleshooting, we found the cause and got it fixed. I think writing it up here could help other people who encounter the same ...

Thanks for sharing. We had the same problem. I had forgotten to add private endpoints to the workspace storage account in the managed resource group. I will also add NCC rules in the Databricks account. Then you don't need the subnets in the firewall.

- 2165 Views

- 3 replies

- 3 kudos

private endpoint to non-storage azure resource

I'm trying to set up an NCC and private endpoint for a container app environment in Azure. However, I get the following error: Error occurred when creating private endpoint rule: : BAD_REQUEST: Can not create Private Link Endpoint with name databricks-x...

All the Azure subscriptions have this registered. Could this not be an Azure subscription within the Databricks tenant?

- 1048 Views

- 1 replies

- 1 kudos

How to find the billing of each cell in a notebook?

Suppose I have run ten different statements/tasks/cells in a notebook, and I want to know how many DBUs each of these ten tasks used. Is this possible?

Hey, I really don't think it's possible to directly determine the cost of a single cell in Databricks. However, you can approach this in two ways, depending on the type of cluster you're using, as different cluster types have different pricing model...

- 1516 Views

- 2 replies

- 2 kudos

Resolved! Driver log storage location

What directory would the driver log normally be stored in? Is it DBFS?

- 1123 Views

- 3 replies

- 0 kudos

GPU accelerator not matching with desired memory.

Hello, we have opted for Standard_NC8as_T4_v3, which claims to have 56 GB of memory. But when I run nvidia-smi in the notebook, it shows only ~16 GB. Why? Please let me know what is happening here. Jay

Please refer to: https://learn.microsoft.com/en-us/azure/databricks/compute/gpu
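One likely explanation, offered as an assumption rather than something stated in the thread: the 56 GB figure for Standard_NC8as_T4_v3 is the VM's host RAM, while `nvidia-smi` reports the memory on the GPU itself (a single NVIDIA T4, which has 16 GB). A minimal sketch for reading the GPU figure programmatically follows; the helper names are hypothetical.

```python
import subprocess


def parse_gpu_memory_mib(csv_text: str) -> list:
    """Parse nvidia-smi CSV query output: one integer (MiB) per GPU line."""
    return [int(line.strip()) for line in csv_text.splitlines() if line.strip()]


def query_gpu_memory_mib() -> list:
    """Ask nvidia-smi for total memory per GPU (requires an NVIDIA driver)."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_memory_mib(out)
```

On a GPU node, `query_gpu_memory_mib()` returns one value per GPU. Host RAM is a separate figure and can be read on Linux from `/proc/meminfo`.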

- 569 Views

- 0 replies

- 0 kudos

Error "Gateway authentication failed for 'Microsoft.Network'" While Creating Azure Databricks

Hi All, I'm encountering an issue while trying to create a Databricks service in Azure. During the setup process, I get the following error: "Gateway authentication failed for 'Microsoft.Network'". I've checked the basic configurations, but I'm not sure ...

- 3012 Views

- 5 replies

- 5 kudos

Resolved! Databricks cluster pool deployed through Terraform does not have UC enabled

Hello everyone, we have a workspace with UC enabled. We already have a couple of catalogs attached, and when using our personal compute we are able to read/write tables in those catalogs. However, for our jobs we deployed a cluster pool using Terraform b...

- 923 Views

- 1 replies

- 0 kudos

GCP Databricks | Workspace Creation Error: Storage Credentials Limit Reached

Hi Team, we are encountering an issue while trying to create a Databricks Workspace in the GCP region us-central1. Below is the error message: Workspace Status: Failed. Details: Workspace failed to launch. Error: BAD REQUEST: Cannot create 1...

Hi @karthiknuvepro, do you have an active support plan? If you open a support ticket with us, we can request an increase of this limit.

- Access control (1)

- Apache spark (1)

- Azure (7)

- Azure databricks (5)

- Billing (2)

- Cluster (1)

- Compliance (1)

- Data Ingestion & connectivity (5)

- Databricks Runtime (1)

- Databricks SQL (2)

- DBFS (1)

- Dbt (1)

- Delta Sharing (1)

- DLT Pipeline (1)

- GA (1)

- Gdpr (1)

- Github (1)

- Partner (83)

- Public Preview (1)

- Service Principals (1)

- Unity Catalog (1)

- Workspace (2)
