- 1563 Views

- 1 replies

- 0 kudos

I want to create custom tag in cluster policy so that clusters created using that policy get those

I want to create custom tags in a cluster policy so that clusters created using this policy will automatically include those tags for billing purposes. Consider the following example: "cluster_type": {"type": "fixed", "value": "all-purpose"}, "custom_t...

Are you having any issue while running this code in the policy?
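
For readers following this thread, here is a minimal sketch of how such a policy definition could be assembled. The question's own example is truncated, so this is not the poster's policy: the tag names and values are hypothetical, and the sketch assumes the `custom_tags.<name>` attribute path with a `fixed` rule, which is how Databricks cluster policies address custom tags.

```python
import json


def build_tag_policy(tags: dict[str, str]) -> dict:
    """Return a cluster policy definition that pins each tag to a fixed value."""
    policy = {
        # Taken from the question: restrict the policy to all-purpose compute.
        "cluster_type": {"type": "fixed", "value": "all-purpose"},
    }
    for name, value in tags.items():
        # Assumption: each custom tag is addressed as custom_tags.<name>.
        policy[f"custom_tags.{name}"] = {"type": "fixed", "value": value}
    return policy


# Hypothetical tag names and values, for illustration only.
policy = build_tag_policy({"cost_center": "analytics", "team": "data-eng"})
print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the policy definition editor. A `fixed` rule means users of the policy cannot override the tag value, which is usually what billing attribution needs.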

- 2161 Views

- 1 replies

- 0 kudos

Databricks Serverless best practices

Hi all, we are configuring a Databricks serverless setup that adjusts according to the workload type, like choosing different cluster sizes such as extra small, small, large, etc., and the autoscale option. We're also looking at the average time it takes to compl...

You can refer to our serverless compute best practices (https://docs.databricks.com/en/compute/serverless/best-practices.html). If you are referring to serverless warehouses, see https://docs.databricks.com/en/compute/sql-warehouse/warehouse-beh...

- 1005 Views

- 1 replies

- 0 kudos

Manage Account option

Hi, I have created a premium Databricks workspace on my Azure free trial account and also have the Global Administrator role on my Azure account. I have set up all the necessary configurations, such as granting the Storage Blob Data Contributor role t...

Hello @SALAHUDDINKHAN, If you are unable to see the "Manage Account" option, it is likely that you do not have the necessary account admin privileges. Please ensure you have the required permissions indicated here: https://learn.microsoft.com/en-us/a...

- 13396 Views

- 2 replies

- 1 kudos

Resolved! Any way to move the unity catalog to a new external storage location?

Dear Databricks Community, the question is about moving an existing Unity Catalog to a new storage location. For example: an existing Unity Catalog (i.e. catalog1) includes schemas and volumes. The catalog is based on an external location (i....

https://docs.databricks.com/ja/sql/language-manual/sql-ref-syntax-ddl-alter-location.html

- 5723 Views

- 5 replies

- 2 kudos

Ingress/Egress private endpoint

Hello, we have configured our Databricks environment with private endpoint connections injected into our VNET, which includes two subnets (public and private). We have disabled public IPs and are using Network Security Groups (NSGs) on the subnet, as...

@Fkebbati First, traffic costs in Azure are not reported as a separate Resource Type, but are appended to the main resource causing the traffic. If you want to distinguish them, use, for instance, Service Name. In this case, the traffic cost is appended to Databricks...

- 3025 Views

- 3 replies

- 0 kudos

Databricks (GCP) Cluster not resolving Hostname into IP address

We have #mongodb hosts that must be resolved to private internal load balancer IPs (of another cluster), and we are unable to add host aliases in the Databricks GKE cluster so that Spark can connect to MongoDB and resolve t...

Also found this - https://community.databricks.com/t5/data-engineering/why-i-m-getting-connection-timeout-when-connecting-to-mongodb/m-p/14868

- 3824 Views

- 3 replies

- 0 kudos

Data leakage risk happened when we use the Azure Databricks workspace

Context: We are utilizing an Azure Databricks workspace for data management and model serving within our project, with delegated VNet and subnets configured specifically for this workspace. However, we are consistently observing malicious flow entries...

Hello everyone! We have worked with our security team, Microsoft, and other customers who have seen similar log messages. This log message is very misleading, as it appears to state that the malicious URI was detected within your network — this would...

- 1290 Views

- 1 replies

- 0 kudos

Unable to add a databricks permission to existing policy

Hi, we're using Databricks provider v1.49.1 to manage our Azure Databricks cluster and other resources. We are having an issue setting permissions with the Databricks Terraform resource "databricks_permissions", where the error indicates that the clust...

Is this cluster policy a custom policy? If you try to modify it in the UI for testing purposes, does it allow you to

- 3234 Views

- 1 replies

- 0 kudos

Compute terminated. Reason: Control Plane Request Failure

Hi, I have started to get this error: "Failed to get instance bootstrap steps from the Databricks Control Plane. Please check that instances have connectivity to the Databricks Control Plane." I suspect it has to do with networking. I am just a...

Are you still facing issues? I would suggest checking the security groups and making sure they match those described here (https://docs.databricks.com/en/security/network/classic/customer-managed-vpc.html#security-groups). Additionally, check if the inbound and outbound addr...

- 3898 Views

- 1 replies

- 0 kudos

Updating databricks git repo from github action - how to

Hi, my company is migrating from Azure DevOps to GitHub, and we have a pipeline in Azure DevOps which updates/syncs Databricks repos whenever a pull request is made to the development branch. The Azure DevOps pipeline (which works) looks like this: trigge...

It seems like the issue you're encountering is related to missing Git provider credentials when trying to update the Databricks repo via GitHub Actions. Based on the context provided, here are a few steps you can take to resolve this issue: Verify...
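
As a rough illustration of the sync step itself, the sketch below builds the HTTP request a GitHub Actions job could send to the Databricks Repos API (`PATCH /api/2.0/repos/{repo_id}`) to check out the latest commit of a branch. It is not the poster's pipeline, which is truncated above: the host, token, and repo ID are hypothetical placeholders, and in a workflow they would typically come from repository secrets. As the reply notes, the identity behind the token still needs Git provider credentials configured in Databricks, otherwise the call fails.

```python
import json
import urllib.request


def build_repo_update_request(
    host: str, token: str, repo_id: int, branch: str
) -> urllib.request.Request:
    """Build (but do not send) a request that moves a Databricks repo to `branch`."""
    url = f"{host.rstrip('/')}/api/2.0/repos/{repo_id}"
    body = json.dumps({"branch": branch}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical values, for illustration only.
request = build_repo_update_request(
    "https://example.cloud.databricks.com/", "my-token", 123, "development"
)
# To actually send it: urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes the step easy to check locally before wiring it into the workflow.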

- 1654 Views

- 1 replies

- 0 kudos

Customer Facing Integration

Is Databricks intended to be used in customer-facing application architectures? I have heard that Databricks is primarily intended to be internally facing. Is this true? If you are using it for customer-facing ML applications, what tool stack are yo...

Hi @hucklebarryrees, Databricks is indeed primarily designed as an analytical platform rather than a transactional system. It’s optimized for data processing, machine learning, and analytics rather than handling high-frequency, parallel transactional...

- 2265 Views

- 4 replies

- 0 kudos

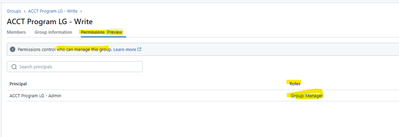

Unity Group management, Group: Manager role

We would like to have the ability to assign an individual and/or group to the "Group: Manager" role, providing them with the ability to add/remove users without the need to be an account or workspace administrator. Ideally this would be an option fo...

Thanks @NandiniN, we have looked through that documentation and still have not been able to get anything to work without the user also being an account or workspace admin. The way I'm interpreting the documentation (screenshot) is the API currently...

- 3829 Views

- 2 replies

- 0 kudos

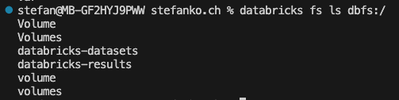

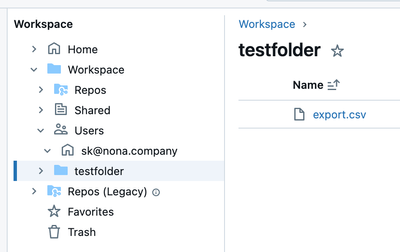

List files in Databricks Workspace with Databricks CLI

I want to list all files in my Workspace with the CLI. There's a command for it: databricks fs ls dbfs:/ When I run this, I get this result: I can then list the content of databricks-datasets, but no other directory. How can I list the content of the Wo...

I know it's possible with Databricks SDK, but I want to solve it with the CLI on the Terminal.
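
One possible direction, offered as a sketch rather than a confirmed answer from the thread: `databricks fs ls dbfs:/` lists DBFS, while workspace objects such as notebooks and folders are listed by the separate `databricks workspace list` command. The small wrapper below only builds and runs that command. It assumes the Databricks CLI is installed and authenticated, and the helper names are hypothetical.

```python
import subprocess


def workspace_list_command(path: str = "/") -> list[str]:
    """Build the argv for listing workspace objects under `path`."""
    # `workspace list` walks the workspace tree, not DBFS.
    return ["databricks", "workspace", "list", path]


def list_workspace(path: str = "/") -> str:
    """Run the CLI and return its raw output (needs a configured CLI)."""
    result = subprocess.run(
        workspace_list_command(path),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

On the terminal, the equivalent is to type the same words directly, for example `databricks workspace list /Users`, where the path is only an illustration.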

- 1809 Views

- 1 replies

- 0 kudos

Enabling Object Lock for the S3 bucket that is delivering audit logs

Hello Community, I am trying to enable Object Lock on the S3 bucket to which the audit log is delivered, but the following error occurs if Object Lock is enabled when the delivery settings are enabled. > {"error_code":"PERMISSION_DENIED","message":"Fai...

Hi @hiro12, enabling Object Lock on an S3 bucket after configuring the delivery settings should not affect the ongoing delivery of audit logs. Still, it is better to understand the root cause of the error. The error you encountered when ena...

- 5916 Views

- 8 replies

- 3 kudos

Open Delta Sharing and Deletion Vectors

Hi, just experimenting with open Delta Sharing and running into a few technical traps. Mainly, if deletion vectors are enabled on a Delta table (which they are by default now), we get errors when trying to query a table (specifically with Power BI)...

@NandiniN we are talking about a Power BI connection, so you cannot set that option. @F_Goudarzi I have just tried it with PBI Desktop Version: 2.132.1053.0 and it is running (I did not disable deletion vectors on my table). I also tried with last versio...
