- 5175 Views

- 4 replies

- 6 kudos

Metric Views in Databricks: A Unified Approach to Business Metrics

Databricks has introduced a powerful feature—Metric Views—that transforms how organizations define, manage, and consume business metrics. Whether you're a data analyst, engineer, or business stakeholder, Metric Views offer a unified, governed, and re...

- 6 kudos

@BijuThottathil: I don't have a workaround for you, but wanted to let you know that you can vote here: https://community.fabric.microsoft.com/t5/Fabric-Ideas/Enable-native-Power-BI-integration-with-Databricks-Metric-View/idi-p/4823684 Hopefully, Micro...

- 870 Views

- 0 replies

- 2 kudos

Zero-Copy, Zero Friction: Bridging the Gap Between Databricks and Microsoft Fabric using Delta Share

Databricks Microsoft Fabric: Zero-Copy Integration with Delta Sharing. Managing data across different ecosystems usually means messy ETL pipelines and high storage costs. I implemented a Zero-Copy architecture to streamline this. By leveraging Delta S...

- 594 Views

- 0 replies

- 1 kudos

Runtime 18 / Spark 4.1 improvements

Runtime 18 / Spark 4.1 brings literal string coalescing everywhere, which lets you make your code more readable. It is useful, for example, for table comments. #databricks Latest updates: https://databrickster.medium.com/databricks-news-week-1...
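The idea of coalescing adjacent string literals can be illustrated with Python's own adjacent-literal concatenation. This is only an analogy, not the Spark SQL syntax the post refers to, and the comment text is made up for illustration.

```python
# Python joins adjacent string literals into one string at compile time.
# Analogy only: the comment text below is hypothetical.
table_comment = (
    "Daily sales facts. "
    "Loaded by the nightly job. "
    "Owner: data platform team."
)

# The same text written as one long literal.
single_literal = (
    "Daily sales facts. Loaded by the nightly job. Owner: data platform team."
)

# Both spellings produce the same string; the split form is easier to read and diff.
same_text = table_comment == single_literal
```

Splitting a long comment this way keeps each line short without changing the resulting value.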

- 513 Views

- 0 replies

- 1 kudos

Secrets in UC

We can see new grant types in Unity Catalog. It seems that secrets are coming to UC, and I especially love the "Reference Secret" grant. #databricks Read more: https://databrickster.medium.com/databricks-news-week-1-29-december-2025-to-4-january-202...

- 2054 Views

- 0 replies

- 2 kudos

DABS JSON Plan

DABS deployment from a JSON plan is one of my favourite new options. You can review the changes or even integrate the plan with your CI/CD process. #databricks Read more: https://databrickster.medium.com/databricks-news-week-1-29-december-2025-to-4-...

- 1826 Views

- 0 replies

- 1 kudos

The Agile Path to Autonomous Agents

I read about a team that demoed their shiny new "autonomous AI agent" to leadership. It queried databases, generated reports, and sent Slack notifications, all hands-free. Impressive stuff. Two weeks later? $12K in API costs, staging data accidentally pushed ...

- 355 Views

- 0 replies

- 1 kudos

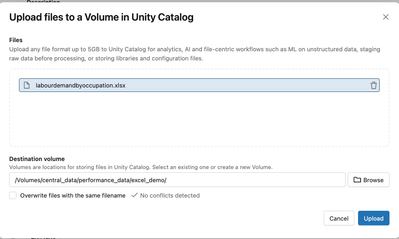

Ingest Everything, let's start with Excel

We can ingest Excel into Databricks, including natively from SharePoint. It was top news in December, but it is in fact part of a bigger strategy that will allow us to ingest any format from anywhere into Databricks. The foundation is already built, as there is ...

- 8408 Views

- 1 replies

- 2 kudos

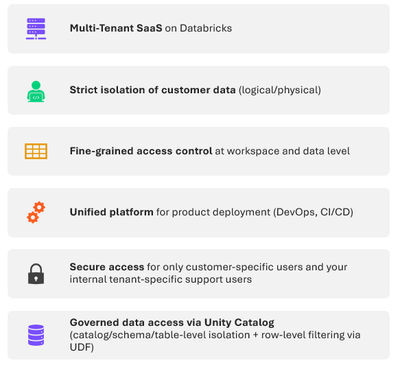

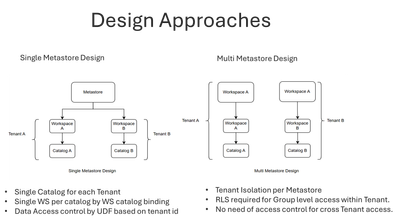

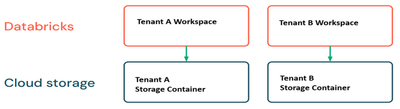

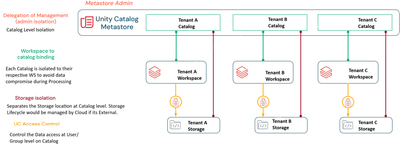

Building MultiTenant Architecture on Databricks Platform

This use case demonstrates how a SaaS product can be deployed for multiple customers or business units, ensuring data isolation at every layer through workspace separation, fine-grained access control with Unity Catalog, and secure processing using U...

- 2 kudos

Good breakdown of the Databricks storage and catalog isolation patterns. One thing to keep in mind: workspace binding and Unity Catalog handle data isolation well, but the authentication layer is where tenant context gets established first. Without pr...

- 265 Views

- 0 replies

- 2 kudos

Labels and sort by Field

Dashboards now offer more flexibility, allowing us to use another field or expression to label or sort the chart. See the demo at https://www.youtube.com/watch?v=4ngQUkdmD3o&t=893s and https://databrickster.medium.com/databricks-news-week-52-22-december-2...

- 1127 Views

- 1 replies

- 5 kudos

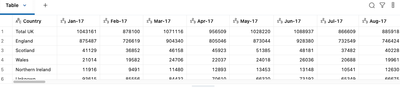

Databricks Excel Reader

We’ve recently created a new Excel reader function, and I decided to have a play around with it. I’ve used an open dataset for this tutorial, so you can follow along too. The file is available here: https://www.ons.gov.uk/employmentandlabourmarket/pe...

- 326 Views

- 0 replies

- 1 kudos

Databricks Lakeflow Jobs Workflow Backfill

When something goes wrong, and your pattern involves daily MERGE operations in your jobs, backfill jobs let you reload multiple days in a single execution without writing custom scripts or manually triggering runs. Read more: https://www.sunnydata.a...
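What a backfill over a date range amounts to can be sketched in plain Python. This is not the Lakeflow Jobs API: `daily_partitions`, `backfill`, and the `reload_day` callback are hypothetical names, and `reload_day` merely stands in for whatever daily MERGE the job would run.

```python
from datetime import date, timedelta
from typing import Callable


def daily_partitions(start: date, end: date) -> list[date]:
    """Return every calendar day from start to end, inclusive."""
    if end < start:
        raise ValueError("end must not be before start")
    return [start + timedelta(days=i) for i in range((end - start).days + 1)]


def backfill(start: date, end: date, reload_day: Callable[[date], None]) -> list[date]:
    """Call reload_day once per day, in order, and return the days processed.

    reload_day is a placeholder for the job's daily MERGE (hypothetical).
    """
    processed = []
    for day in daily_partitions(start, end):
        reload_day(day)
        processed.append(day)
    return processed


# Usage: record which days would be reloaded instead of touching any table.
reloaded: list[str] = []
days = backfill(date(2025, 12, 29), date(2026, 1, 2),
                lambda d: reloaded.append(d.isoformat()))
```

Processing the days in order matters when each daily MERGE builds on the state left by the previous one.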

- 318 Views

- 0 replies

- 1 kudos

New resources under DABS

More and more resources are available under DABS. One of the newest additions is the alerts resource.

- 1001 Views

- 0 replies

- 3 kudos

Goodbye community edition, Long live the free edition

I just logged in to the community edition for the last time and spun up the cluster for the last time. Today is the last day, but it's still there. I haven't logged in there for a while, as the free edition offers much more, but it is a place where man...

- 1042 Views

- 1 replies

- 2 kudos

Databricks Table Protection Features

This article provides an overview of key Databricks features and best practices that protect Gold tables from accidental deletion. It also covers the implications if both the Gold and Landing layers are deleted without active retention or backup. Cor...

- 2 kudos

Thanks for sharing this. Time Travel is applicable to all tables in Databricks, not restricted to Gold.

- 348 Views

- 0 replies

- 2 kudos

5 Reasons You Should Be Using LakeFlow Jobs as Your Default Orchestrator

I recently saw a business case in which an external orchestrator accounted for nearly 30% of their total Databricks job costs. That's when it hit me: we're often paying a premium for complexity we don't need. Besides FinOps, I tried to gather all the...
