
Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.


DLT pipelines in the same job sharing compute


10-26-2023 11:00 AM

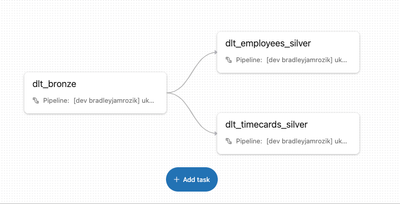

If I have a job like this that orchestrates N DLT pipelines, what setting do I need to adjust so that they reuse the same compute resources between steps, rather than spinning compute up and shutting it down for each individual pipeline?

5 replies


11-07-2023 08:42 AM

Edit: I'm using Databricks Asset Bundles to deploy both the pipelines and the jobs.
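For reference, the setup described here can be sketched in Python as a dict shaped like the `resources` section of a bundle's `databricks.yml`: one pipeline per key, plus a job whose tasks run those pipelines in sequence. The pipeline keys, job key, and notebook paths are hypothetical, and this is a sketch of the structure rather than a complete bundle. The `${resources.pipelines.<key>.id}` substitution resolves to each deployed pipeline's ID. As far as I know, a `pipeline_task` has no field for attaching job compute, so each task still starts that pipeline's own compute.

```python
import json

# Hypothetical pipeline keys; in a real bundle these would be the keys
# under `resources.pipelines` in databricks.yml.
PIPELINE_KEYS = ["bronze_pipeline", "silver_pipeline", "gold_pipeline"]


def build_bundle_resources(pipeline_keys, job_key="orchestrator_job"):
    """Return a dict shaped like a bundle's `resources` section: one pipeline
    per key, plus one job that runs the pipelines one after another."""
    pipelines = {
        key: {
            "name": key,
            # Hypothetical notebook path, for illustration only.
            "libraries": [{"notebook": {"path": f"../src/{key}.ipynb"}}],
        }
        for key in pipeline_keys
    }

    tasks = []
    for i, key in enumerate(pipeline_keys):
        task = {
            "task_key": f"run_{key}",
            # Bundle substitution that resolves to the deployed pipeline's ID.
            # The task only triggers the pipeline; the pipeline brings its own compute.
            "pipeline_task": {"pipeline_id": f"${{resources.pipelines.{key}.id}}"},
        }
        if i > 0:
            # Chain the tasks: each pipeline waits for the previous one.
            task["depends_on"] = [{"task_key": f"run_{pipeline_keys[i - 1]}"}]
        tasks.append(task)

    jobs = {job_key: {"name": job_key, "tasks": tasks}}
    return {"resources": {"pipelines": pipelines, "jobs": jobs}}


if __name__ == "__main__":
    print(json.dumps(build_bundle_resources(PIPELINE_KEYS), indent=2))
```

Because every task is a separate pipeline, a chain of N tasks means N separate compute startups.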


11-07-2023 11:08 AM

@bradleyjamrozik, in the DLT pipeline settings you can list all the notebooks together in a single pipeline. That pipeline then deploys a single compute resource for all of the tasks.
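A minimal sketch of that suggestion: one set of pipeline settings whose `libraries` list holds every notebook, so a single pipeline update processes all of them. The notebook paths, pipeline name, catalog, and schema are hypothetical, and the `catalog`/`target` field names follow the pipeline settings as I understand them, so check them against your workspace.

```python
import json

# Hypothetical notebook paths that would otherwise belong to separate pipelines.
NOTEBOOKS = [
    "/Repos/etl/ingest_orders",
    "/Repos/etl/ingest_customers",
    "/Repos/etl/build_marts",
]


def merged_pipeline_settings(name, notebook_paths, catalog, target_schema):
    """Pipeline settings that list every notebook under one pipeline,
    so one update (on one compute resource) runs all of them."""
    return {
        "name": name,
        # All notebooks in this pipeline publish to this one catalog/schema.
        "catalog": catalog,
        "target": target_schema,
        "libraries": [{"notebook": {"path": p}} for p in notebook_paths],
    }


if __name__ == "__main__":
    settings = merged_pipeline_settings("all_in_one", NOTEBOOKS, "main", "etl")
    print(json.dumps(settings, indent=2))
```

The trade-off is that every notebook in the merged pipeline shares the single `catalog`/`target` pair.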

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-07-2023 11:10 AM

The different pipelines point to different catalogs/schemas so they have to be separated out.
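Given that constraint, one way to see how far merging can go is to group notebooks by the (catalog, schema) they publish to: notebooks that share a destination could be combined into one pipeline, while each distinct destination still needs its own. This is a sketch; the notebook names and destinations are hypothetical.

```python
from collections import defaultdict

# Hypothetical mapping: notebook -> (catalog, schema) it publishes to.
NOTEBOOK_TARGETS = {
    "ingest_orders": ("raw", "sales"),
    "ingest_customers": ("raw", "sales"),
    "build_marts": ("analytics", "marts"),
}


def group_by_target(notebook_targets):
    """Group notebooks by (catalog, schema). Each group could become one
    pipeline; groups with different destinations remain separate pipelines."""
    groups = defaultdict(list)
    for notebook, target in notebook_targets.items():
        groups[target].append(notebook)
    # Sort notebook names so the grouping is deterministic.
    return {target: sorted(nbs) for target, nbs in groups.items()}


if __name__ == "__main__":
    for (catalog, schema), notebooks in group_by_target(NOTEBOOK_TARGETS).items():
        print(f"{catalog}.{schema}: {notebooks}")
```

The number of groups is the smallest number of pipelines the job would still have to run, and therefore the number of separate compute startups.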


11-13-2023 10:17 AM

@bradleyjamrozik, I checked internally as well: separate DLT pipelines cannot share compute resources.


07-09-2025 04:03 AM

@shan_chandra Hello, I have the same issue. I have a job that uses serverless compute; it runs several different tasks and then starts a DLT pipeline, which also uses serverless compute, so the job once again has to wait for a resource to start.

Has anything changed since 2023? If not, is this something that will be possible in the future? For my use case it really limits what I can do: across the whole job, 10 minutes are now spent waiting for resources to start, which isn't ideal when the job runs every hour and has to deliver results quickly.
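To put the figures from this post in perspective, here is a quick calculation of the startup overhead for a job scheduled every 60 minutes with 10 minutes of startup per run. The numbers come from the post; the helper name is illustrative.

```python
MINUTES_PER_DAY = 24 * 60


def startup_overhead(startup_minutes_per_run, interval_minutes):
    """Return (share of each interval spent starting compute,
    total startup minutes per day) for a job run every `interval_minutes`."""
    if interval_minutes <= 0:
        raise ValueError("interval_minutes must be positive")
    share = startup_minutes_per_run / interval_minutes
    runs_per_day = MINUTES_PER_DAY // interval_minutes
    return share, runs_per_day * startup_minutes_per_run


if __name__ == "__main__":
    share, per_day = startup_overhead(10, 60)
    print(f"{share:.1%} of each hour, {per_day} minutes per day")  # prints "16.7% of each hour, 240 minutes per day"
```

With those figures, one sixth of every hourly window, or 240 minutes per day, goes to waiting for compute.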


Related Content

- DLT pipeline's compute policy when Instance pool Id used it ignores the VM series. in Data Engineering

- Cross-region S3 reads suddenly fail with 400 Bad Request — eu-west-1 metastore to af-south-1 bucket in Data Engineering

- Best Compute Option for Near-Real-Time Databricks API Ingestion Pipeline in Data Engineering

- Autoscaling with the autoloader without SDP in Data Engineering

- Is the serverless budget/usage feature officially broken for certain serverless job "types"? in Data Governance