
Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.


SQL Warehouse Serverless Endpoint Error


08-23-2022 04:50 PM

Our SQL Warehouse Serverless endpoint started failing this morning (2022-08-23 18:00:00 UTC) with the following error:

`org.apache.hadoop.hive.ql.metadata.HiveException: MetaException(message:Unable to build AWSGlueClient: com.amazonaws.SdkClientException: Unable to find a region via the region provider chain. Must provide an explicit region in the builder or setup environment to supply a region.)`
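For readers puzzling over the error itself: the exception comes from the AWS SDK for Java (v1). When no region is set explicitly on a client builder, the SDK asks a chain of providers in turn; to my understanding the default chain checks the `AWS_REGION` environment variable, the `aws.region` JVM system property, the shared profile config file, and finally the EC2 instance metadata service (IMDS). The Python below is a simplified model of that lookup under those assumptions, not the SDK's actual code, and `resolve_region`, `make_chain`, and `SdkClientError` are hypothetical names. It shows why a host that relies only on instance metadata for its region fails with exactly this message once the metadata call fails.

```python
from typing import Callable, List, Mapping, Optional

# A provider returns a region name, returns None, or raises.
RegionProvider = Callable[[], Optional[str]]


class SdkClientError(Exception):
    """Hypothetical stand-in for com.amazonaws.SdkClientException."""


def resolve_region(providers: List[RegionProvider]) -> str:
    """Return the first region any provider yields, in chain order."""
    for provider in providers:
        try:
            region = provider()
        except Exception:
            # A failing provider (e.g. unreachable metadata service) is
            # treated like one that simply has no answer.
            region = None
        if region:
            return region
    # Nothing in the chain produced a region, so the builder gives up.
    raise SdkClientError(
        "Unable to find a region via the region provider chain. "
        "Must provide an explicit region in the builder or setup "
        "environment to supply a region."
    )


def make_chain(env: Mapping[str, str],
               sys_props: Mapping[str, str],
               profile_region: Optional[str],
               imds_region: RegionProvider) -> List[RegionProvider]:
    """Simplified model of the default chain order (assumption)."""
    return [
        lambda: env.get("AWS_REGION"),        # environment variable
        lambda: sys_props.get("aws.region"),  # JVM system property
        lambda: profile_region,               # shared profile config file
        imds_region,                          # EC2 instance metadata
    ]
```

In this model, `resolve_region(make_chain({}, {}, None, lambda: "eu-west-1"))` returns `"eu-west-1"`, while an IMDS provider that raises leaves nothing else to fall back on and produces the same message as in the stack trace. A region supplied earlier in the chain (for example via `AWS_REGION`) is returned before IMDS is ever consulted.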

I am 100% certain that nothing changed on our end, in either AWS or Databricks.

All our workflows and DLT pipelines are still running fine, and all Databricks services and clusters use the same instance profile with the same glueCatalog setting. I also started a “Classic” SQL Warehouse endpoint, and everything worked as expected there. I therefore believe Databricks’ “Serverless Endpoints” are broken.

Labels: Sql Warehouse

Accepted Solution


08-23-2022 08:28 PM

This appears to be caused by IMDSv2 being enabled in the workspace.

https://docs.databricks.com/administration-guide/cloud-configurations/aws/imdsv2.html#migrate

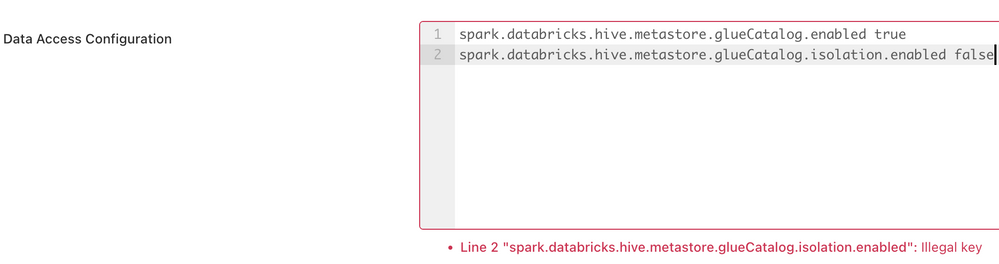
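The diagnosis above hinges on how IMDSv2 differs from IMDSv1. IMDSv2 requires a session token: the client first sends a `PUT` to `/latest/api/token`, then passes the returned token in the `X-aws-ec2-metadata-token` header on every metadata `GET`. When IMDSv2 is enforced, a token-less (IMDSv1-style) request is rejected with HTTP 401, so a client that only speaks IMDSv1 cannot read the region. The sketch below, assuming Python's standard `urllib` and the `/latest/meta-data/placement/region` metadata path, shows both request styles; `imds_region` is a hypothetical helper, and its `opener` parameter exists only so the logic can be exercised without a real EC2 host.

```python
import urllib.request

IMDS_BASE = "http://169.254.169.254"


def imds_region(use_token: bool = True,
                timeout: float = 1.0,
                opener=urllib.request.urlopen) -> str:
    """Read the instance's region from EC2 instance metadata.

    use_token=True follows the IMDSv2 flow (fetch a token first);
    use_token=False mimics an IMDSv1 request that sends no token.
    """
    headers = {}
    if use_token:
        # IMDSv2 step 1: PUT to obtain a session token (TTL in seconds).
        token_req = urllib.request.Request(
            f"{IMDS_BASE}/latest/api/token",
            method="PUT",
            headers={"X-aws-ec2-metadata-token-ttl-seconds": "21600"},
        )
        with opener(token_req, timeout=timeout) as resp:
            headers["X-aws-ec2-metadata-token"] = resp.read().decode()
    # Step 2 (or the only step for IMDSv1): GET the metadata value.
    req = urllib.request.Request(
        f"{IMDS_BASE}/latest/meta-data/placement/region", headers=headers
    )
    with opener(req, timeout=timeout) as resp:
        return resp.read().decode().strip()
```

On an instance where IMDSv2 is enforced, `imds_region(use_token=False)` would raise `urllib.error.HTTPError` with code 401, while the default token flow returns the region string.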

To fix this, please try adding the Spark config below under SQL Admin Console > Data access Config, where you have the Glue settings. Saving it will restart the endpoints in the workspace, so please do this outside business hours.

spark.databricks.hive.metastore.glueCatalog.isolation.enabled false



08-23-2022 08:50 PM

A few points to clarify:

- The workspace setting "Enforce AWS Instance Metadata Service V2 for all clusters" has always been disabled.

- Again, as I described in my original post, no changes were made to AWS or Databricks; Serverless SQL Warehouses just suddenly stopped working with this error.

- The Spark key "spark.databricks.hive.metastore.glueCatalog.isolation.enabled" is not even recognised in the SQL Admin Console, as shown in the attached screenshot.


09-12-2022 06:14 AM

Hi @Bo Zhu

Hope all is well! I just wanted to check in: were you able to resolve your issue? If so, would you be happy to share the solution or mark an answer as best? Otherwise, please let us know if you need more help.

We'd love to hear from you.

Thanks!


10-07-2022 02:10 PM

It resolved itself the next day. Apparently it was related to some bugs, which hopefully Databricks will squash soon as part of improving the serverless service overall.


Related Content

- Why does the same Databricks SQL query take different time to run? in Data Engineering

- VNet Data Gateway unable to connect to Azure Databricks Serverless SQL via Private Endpoint in Administration & Architecture

- Is there a way to natively mount external Iceberg REST Catalogs (e.g., BigLake) in Unity Catalog? in Data Engineering

- Stop Refreshing. Start Querying. in Data Engineering

- SQL Warehouse fails to start — RESOURCE_EXHAUSTED Error in Warehousing & Analytics