- 1002 Views

- 2 replies

- 1 kudos

Need Help Signing up for Databricks via Google Cloud

I have purchased Databricks via Google Cloud but my order is still pending. I emailed support and they mentioned that it is pending because "You have not purchased any of the support contracts (business, enhanced, or production support) to move the c...

- 1 kudos

Hi @amartinez4, based on your requirement, why don't you sign up for the new Databricks Free Edition (https://www.databricks.com/blog/introducing-databricks-free-edition)? If you'd rather work with the trial version, can you let us know if you've had an...

- 3217 Views

- 4 replies

- 2 kudos

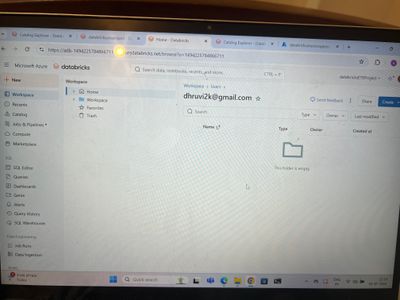

Resolved! Can I load/read a file in Databricks Free Edition (the new edition replacing Community Edition)?

FL_DATAFRAME = spark.read.format("csv")\ .option("header", "false")\ .option("inferschema", "false")\ .opt...

- 2 kudos

Hi @na_ra_7, in Free Edition DBFS is disabled, so I doubt the above approach will work. You should use Unity Catalog for that purpose anyway; DBFS is a deprecated pattern for interacting with storage. So, to use a volume, perform the following steps: Go to Cat...
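The reply's suggestion (read from a Unity Catalog volume rather than DBFS) can be sketched roughly as follows. This is a minimal sketch, assuming the file has already been uploaded to a volume; the catalog, schema, volume, and file names are hypothetical placeholders, and `spark` is the SparkSession a Databricks notebook provides. Volume files are addressed with paths of the form `/Volumes/<catalog>/<schema>/<volume>/<path>`.

```python
# Sketch: read a CSV from a Unity Catalog volume instead of DBFS.
# The catalog/schema/volume/file names used below are hypothetical placeholders.


def volume_path(catalog: str, schema: str, volume: str, relative_path: str) -> str:
    """Return the /Volumes/<catalog>/<schema>/<volume>/<path> location of a file."""
    parts = [catalog, schema, volume, relative_path.lstrip("/")]
    if not all(parts):
        raise ValueError("catalog, schema, volume and relative_path must be non-empty")
    return "/Volumes/" + "/".join(parts)


def read_csv_from_volume(spark, path: str, header: bool = False):
    """Read a CSV from a volume path, using the same header/inferSchema
    options as the snippet in the question. `spark` is the notebook's SparkSession."""
    return (
        spark.read.format("csv")
        .option("header", str(header).lower())  # pass as the string "true" or "false"
        .option("inferSchema", "false")
        .load(path)
    )


if __name__ == "__main__":
    # Outside Databricks there is no SparkSession, so only show the path.
    print(volume_path("my_catalog", "my_schema", "my_volume", "data/flights.csv"))
```

In a notebook you would call `df = read_csv_from_volume(spark, volume_path(...))` with your own names; keeping path construction in a separate helper makes it easy to check outside Databricks.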

- 1646 Views

- 1 replies

- 0 kudos

Resolved! Not able to log in

I’m encountering an issue when trying to log in: I'm receiving the error shown below. Previously, I was able to log in, but I noticed that none of my notebooks were appearing in the workspace. Could you please look into this and assist? You are not a me...

- 0 kudos

Hi @jayeshkadam_98, if it is an organization account and your access has been removed from the workspace, that could explain this issue. Please reach out to your organization's Databricks admin to check your us...

- 2370 Views

- 3 replies

- 3 kudos

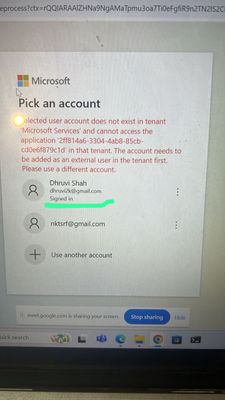

Resolved! Databricks Community Edition login issues with Azure portal login

Hi, I used to log in to Azure Databricks using my Azure portal username (email). I used the same email to sign up for the...

- 3 kudos

That's awesome, Sridhar. Sorry I missed reading this.

- 1667 Views

- 4 replies

- 3 kudos

Urgent: Unable to log in to the account console, so I couldn't do my project work

I have created a free trial subscription for Databricks. I'm unable to log in to the Databricks account console. Please find the attached error image. Please give me account console access so that I can implement Unity Catalog. I’ve logged in with...

- 3 kudos

Hey @Dataenginerring, this suggests the issue might be on the Microsoft/tenancy side. Could you try connecting to Databricks with another browser, or through an incognito window in Chrome?

- 1862 Views

- 1 replies

- 0 kudos

Resolved! Enable Azure Private Link back-end and front-end connections - Azure Databricks

Hi Team, can anyone help me configure Databricks in Azure?

- 0 kudos

@legendworker Sign Up for Azure Databricks: If you do not have an Azure Databricks account, you can sign up for a free trial to get started. Set Up Your Databricks Workspace: After signing up, you need to set up your Databricks workspace. This involves ...

- 6192 Views

- 7 replies

- 1 kudos

Resolved! %run "./Includes/Classroom-Setup"

04-Working-With-Dataframes > Describe a DataFrame. Code: %python %run "./Includes/Classroom-Setup" Output: /databricks/python/lib/python3.11/site-packages/IPython/core/interactiveshell.py:2870: UserWarning: Could not open file </Workspace/Users/**********...

- 1 kudos

Those scripts do not work in Community Edition. When you take our training, the notebooks we supply with demos and labs only work in the context of our training environments (e.g. Vocareum). Hope this helps, Lou.

- 7909 Views

- 7 replies

- 0 kudos

Resolved! Getting started - How to create an all-purpose compute cluster or switch from SQL warehouses

Hello, today I clicked on the Try Databricks button and set up a workspace to start running some Python scripts. One problem that I've encountered is that when I click on Compute to create a cluster, the only option I have is to Create SQL warehouse....

- 0 kudos

@Chris88, yes, in the free trial and Free Edition only serverless compute is available.

- 3871 Views

- 3 replies

- 3 kudos

Resolved! financial data

Hey all, I am trying to build a financial application. Is there any free mocked-up financial data (pricing for securities, market data) from Databricks that I can use for testing? I do not need it up to date; it can be stale or mocked up. Thanks

- 3 kudos

https://marketplace.databricks.com/details/f18a4c8b-2400-4c95-a23a-f0857fd6045f/Bright-Data_Yahoo-Finance-Business-Dataset

https://marketplace.databricks.com/details/97dced04-7887-40c2-8294-43138e405fc5/Bright-Data_CrunchBase-companies-information-dat...
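Since the question says stale or mocked prices are fine, another option is to generate test data locally. This is a minimal sketch, not a Databricks dataset: it assumes a geometric random walk (a common textbook model for prices), and the ticker names, starting price, drift, and volatility are arbitrary hypothetical values.

```python
import math
import random
from datetime import date, timedelta


def mock_daily_prices(tickers, start, days, start_price=100.0,
                      drift=0.0002, volatility=0.02, seed=42):
    """Generate mocked daily closing prices as a geometric random walk.

    Returns a list of (ticker, date, close) tuples covering `days` consecutive
    calendar days (weekends are not skipped). Default parameters are arbitrary
    and not calibrated to any real market.
    """
    rng = random.Random(seed)  # fixed seed -> the same "market" on every run
    rows = []
    for ticker in tickers:
        price = start_price
        for i in range(days):
            if i > 0:
                # Multiply by exp(daily log-return), so prices stay positive.
                price *= math.exp(rng.gauss(drift, volatility))
            rows.append((ticker, start + timedelta(days=i), round(price, 2)))
    return rows


if __name__ == "__main__":
    for row in mock_daily_prices(["AAA", "BBB"], date(2024, 1, 1), days=3):
        print(row)
```

Inside a notebook, `spark.createDataFrame(rows, ["ticker", "date", "close"])` would turn the tuples into a DataFrame for testing. The fixed seed keeps test runs reproducible; change it to get a different mocked series.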

- 2553 Views

- 1 replies

- 0 kudos

Resolved! Dario Schiraldi Here – Excited to Connect

Hi everyone, I’m Dario Schiraldi, CEO of Travel Works, a company focused on using data-driven insights to enhance travel experiences and optimize operations for travel businesses worldwide. As someone who’s passionate about leveraging technology to sol...

- 2861 Views

- 3 replies

- 0 kudos

Resolved! New to Databricks

Hello Community! I'm new to Databricks and would like to start from scratch. Can anyone guide me on where to start?

- 0 kudos

Hello @Mahalakshmi1488, here's a beginner-friendly roadmap to help you get started with learning Databricks from scratch: Start with Databricks Community Edition – it’s free and beginner-friendly. Learn the UI – explore Notebooks, Clusters, DBFS (file...

- 1572 Views

- 1 replies

- 1 kudos

Libraries for Serverless Compute

Current Setup: I'm working on a project using the Databricks Free Trial, and I'm running my workloads on serverless compute. Issue: Every time I run a pipeline or job, it takes 30 minutes or more to complete. This delay is mainly due to the repeated ins...

- 1 kudos

Hello @akshay_prabhu31! To manage dependencies on Databricks serverless compute, you can use the Environment side panel in the notebook to add the required Python libraries. You can refer to this documentation: https://docs.databricks.com/gcp/en/comp...
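Alongside the Environment side panel the reply points to, a generic Python check can limit installs to packages the environment does not already provide (some libraries may already be preinstalled). This is a minimal sketch using the standard library's `importlib.metadata`; it is not a Databricks API, it does not check version pins, and the package list in the example is hypothetical.

```python
import sys
from importlib import metadata


def missing_packages(names):
    """Return the distributions in `names` that are not installed in the
    current Python environment (version pins are not checked)."""
    missing = []
    for name in names:
        try:
            metadata.version(name)  # raises PackageNotFoundError if absent
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


def pip_install_command(names):
    """Build a pip command for only the missing packages, or None if all are present."""
    todo = missing_packages(names)
    if not todo:
        return None
    return [sys.executable, "-m", "pip", "install", *todo]


if __name__ == "__main__":
    # Hypothetical dependency list for the pipeline.
    cmd = pip_install_command(["requests", "pyyaml"])
    print(cmd or "All packages already installed")
```

Running the returned command with `subprocess.check_call(cmd)` would install just the missing packages. Whether this saves time on serverless depends on whether the environment is reused between runs, so pinning dependencies through the Environment panel remains the approach the reply recommends.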

- 2062 Views

- 2 replies

- 0 kudos

Not Able to Access Clusters in Databricks Community Edition

I am a beginner trying to learn Databricks for data engineering practice. I recently signed up for the Databricks Community Edition using my email. However, after logging in, I keep getting redirected to the Free SQL Edition interface, where only SQL ...

- 0 kudos

Hi @ha11shu, from the screenshot, it looks like you've signed up for the Free Edition, which is the updated version of the Community Edition. Also, to let you know, Free Edition users only have access to serverless compute resources. Can you try creati...

- 1551 Views

- 1 replies

- 0 kudos

Resolved! Free Environment Databricks workspace

How can I get a free workspace from Databricks? The last Data + AI Summit mentioned a free environment. Kindly provide step-by-step instructions on how to access it; it would be helpful.

- 0 kudos

Hello Prabusankar, the steps are very straightforward:
1. Head over to the login page: https://login.databricks.com/?intent=SIGN_UP&provider=DB_FREE_TIER
2. Sign up using Google, a Microsoft account, or your email address.
3. Put the verification code you get ...

- 1952 Views

- 2 replies

- 3 kudos

Not able to open all-purpose compute

I want to create "all-purpose compute", but when I click Compute in the left-side menu, I am automatically taken to "SQL warehouses". Is there a solution for this?

- 3 kudos

@SushantNavale, currently only serverless compute is available in Free Edition.

- Access Controls (1)
- ADLS Gen2 Using ABFSS (1)
- AML (1)
- Apache spark (1)
- Api Calls (1)
- App (1)
- Autoloader (1)
- AWSDatabricksCluster (1)
- Azure databricks (3)
- Azure Delta Lake (1)
- BI Integrations (1)
- Billing (1)
- Billing and Cost Management (1)
- Cluster (3)
- Cluster Creation (1)
- ClusterCreation (1)
- Community Edition (4)
- Community Edition Account (1)
- Community Edition Login Issues (2)
- community workspace login (1)
- Compute (3)
- Compute Instances (2)
- Continue Community Edition (1)
- databricks (1)
- Databricks Community Edition Account (2)
- Databricks Free Edition (1)
- Databricks Issue (1)
- Databricks Notebooks (1)
- databricks one (1)
- Databricks Support (1)
- databricksapps (1)
- DB Notebook (1)
- DBFS (1)
- Delta Tables (1)
- documentation (1)
- financial data market (1)
- Free Databricks (1)
- Free Edition (1)
- Free trial (1)
- Genie (1)
- Google cloud (1)
- Hubert Dudek (1)
- link for labs (1)
- Login Issue (2)
- mcp (1)
- MlFlow (1)
- ow (1)
- Serverless (1)
- Sign Up Issues (2)
- Software Development (1)
- someone is trying to help you (1)
- Spark (1)
- URGENT (2)
- Web Application (1)
