- 1934 Views

- 1 replies

- 0 kudos

Cluster

I’m not able to access any workspace view or cluster.

Hey, could you be more precise with your question?

- 4378 Views

- 2 replies

- 1 kudos

dbutils.fs.ls versus pathlib.Path

Hello community members, dbutils.fs.ls('/') exposes the distributed file system (DBFS) on the Databricks cluster. Similarly, the Python library pathlib can also expose 4 files in the cluster, like below: from pathlib import Path; mypath = Path('/'); for i...

I think it will be useful to look at this documentation to understand the different kinds of files and how you can interact with them: https://learn.microsoft.com/en-us/azure/databricks/files/ There is not much more to say than that dbutils is "databricks code" that ...
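To make the contrast in the question concrete, here is a minimal sketch of the pathlib side. It assumes that pathlib, like any standard Python file API, reads the local filesystem of the machine the code runs on (the driver node, in a notebook), whereas dbutils.fs.ls('/') lists the DBFS root. The helper name list_local_dir is hypothetical, and the dbutils call appears only as a comment because dbutils exists only inside a Databricks notebook.

```python
from pathlib import Path


def list_local_dir(root="/"):
    """Return the sorted names of the entries directly under `root`.

    Path.iterdir() reads the local filesystem of the machine running
    this code; it does not go through DBFS.
    """
    return sorted(entry.name for entry in Path(root).iterdir())


if __name__ == "__main__":
    # Local view of the root directory (the driver node in a notebook).
    print(list_local_dir("/"))

    # Assumption: inside a Databricks notebook, the DBFS view would be
    # listed with dbutils instead, for example:
    #   [f.path for f in dbutils.fs.ls("/")]
```

Comparing the two listings side by side in a notebook is probably the quickest way to see that they describe different storage.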

- 5236 Views

- 1 replies

- 0 kudos

How to ingest files from volume using autoloader

I am doing a test run. I am uploading files to a volume and then using Auto Loader to ingest the files and create a table. I am getting this error message: com.databricks.sql.cloudfiles.errors....

Hey, I think you are mixing DLT syntax with PySpark syntax. In DLT you should use: CREATE OR REFRESH STREAMING TABLE <table-name> AS SELECT * FROM STREAM read_files( '<path-to-source-data>', format => '<file-format>' ) or, in Python, @dlt....

- 11170 Views

- 5 replies

- 2 kudos

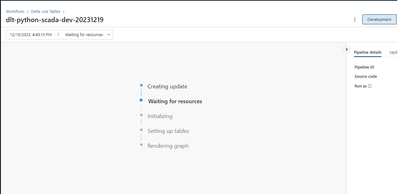

DLT Compute Resources - What Compute Is It???

Hi there, I'm wondering if someone can help me understand what compute resources DLT uses. It's not clear to me at all whether it uses the last compute cluster I had been working on, or something else entirely. Can someone please help clarify this?

Well, one thing they emphasize in the 'Advanced Data Engineer' training is that job clusters will terminate within 5 minutes after a job is completed. So this could support your theory about lowering costs. I think job clusters are actually design...

- 2386 Views

- 1 replies

- 0 kudos

python library in databricks

Hello community members, I am seeking to understand where Databricks keeps all the Python libraries. For a start, I tried the two lines below: import sys; sys.path. This lists all the paths, but I can't look inside them. How is DBFS different from these paths...

Hello, all your libraries are installed on the Databricks cluster driver node, on the OS disk. DBFS is like a mounted cloud storage account. You have various ways of working with libraries, but Databricks only loads some of the libraries that come with the cluster image....
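As a small illustration of "looking inside" the paths from the question, the sketch below walks the interpreter's search path with the standard library only. Note that sys.path is a plain list, so it is read without parentheses. The function names existing_search_dirs and sample_contents are hypothetical, and the output depends entirely on the cluster image, so none is shown here.

```python
import sys
from pathlib import Path


def existing_search_dirs():
    """Return the entries of sys.path that are real directories."""
    # sys.path is a list, not a function, so it is read without ().
    # Entries can also be empty strings or zip files; those are skipped.
    return [Path(p) for p in sys.path if p and Path(p).is_dir()]


def sample_contents(directory, limit=5):
    """Return up to `limit` sorted entry names from `directory`."""
    return sorted(entry.name for entry in Path(directory).iterdir())[:limit]


if __name__ == "__main__":
    # Print each import directory with a few of the names it contains.
    for directory in existing_search_dirs():
        print(directory, sample_contents(directory))
```

Because these directories live on the node's local disk, they are read with ordinary file APIs rather than through DBFS.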

- 3322 Views

- 1 replies

- 0 kudos

Power BI keeps SQL Warehouse Running

Hi, I have a SQL Warehouse, serverless mode, set to shut down after 5 minutes. Using the Databricks web IDE, this works as expected. However, if I connect Power BI, import data to PBI and then leave the application open, the SQL Warehouse does not s...

Repeating this test today, the SQL Warehouse shut down properly. Thanks for your helpful reply.

- 3250 Views

- 2 replies

- 0 kudos

Seeking Assistance with Dynamic %run Command Path

Hello Databricks Community Team, I trust this message finds you well. I am currently facing an issue while attempting to utilize a dynamic path with the %run command to execute a notebook called from another folder. I have tested the following approac...

Hi, if your config file is in the Databricks file system, then you should add the dbfs:/ prefix. Ex: f"dbfs:/Users/.../blob_conf/{conf_file}"

- 1013 Views

- 0 replies

- 0 kudos

How to Migrate specific notebooks from one Azure Repo to another Azure Repo

Team, I need to migrate only the specific notebooks that have committed changes, pulling them from one repo to another repo. Environment/Repo Setup: Master -> Dev -> Feature Branch -> Developer commits the code in Feature Branch -> Dev has the changes from D...

- 1406 Views

- 1 replies

- 0 kudos

Databricks JDBC Driver 2.6.36 includes dependencies in pom.properties with vulnerabilities

Starting from Databricks JDBC Driver 2.6.36, we get a Trivy security report with vulnerabilities from pom.properties. 2.6.36 adds org.apache.commons.commons-compress:1.20 and ch.qos.logback.logback-classic:1.2.3. 2.6.34 doesn't include such dependencie...

I didn't find where to open an issue (GitHub or Jira). Please, let me know if I need to report it somewhere else.

- 8031 Views

- 2 replies

- 2 kudos

UC Volumes - Cannot access the UC Volume path from this location. Path was

Hi, I'm trying out the new Volumes preview. I'm using external locations for everything so far. I have my storage credential and external locations created and tested. I created a catalog, a schema, and in that schema a volume. In the new data browser o...

Hope this helps: this issue could be caused by the cluster being in no-isolation shared mode rather than single-user or shared mode, both of which are compatible with Unity Catalog.

- 1471 Views

- 0 replies

- 0 kudos

Creating external location is Failing because of cross plane request

While creating a Unity Catalog external location from the Databricks UI or from a notebook using "CREATE EXTERNAL LOCATION location_name ..", a connection is made from the control plane to the S3 data bucket and rejected in a PrivateLink enabled environm...

- 1238 Views

- 0 replies

- 0 kudos

Source to Bronze Organization + Partition

Hi there, I hope I have what is effectively a simple question. I'd like to ask for a bit of guidance on whether I am structuring my source-to-bronze Auto Loader data properly. Here's what I have currently: /adls_storage/<data_source_name>/<category>/autoloade...

- 5463 Views

- 2 replies

- 0 kudos

Install python package from private repo [CodeArtifact]

As part of my MLOps stack, I have developed a few packages which are then published to a private AWS CodeArtifact repo. How can I connect the AWS CodeArtifact repo to Databricks? I want to be able to add these packages to the requirements.txt of a mod...

One way to do it is to run this line before installing the dependencies: pip config set site.index-url https://aws:$CODEARTIFACT_AUTH_TOKEN@my_domain-111122223333.d.codeartifact.region.amazonaws.com/pypi/my_repo/simple/ But can we add this in MLflow?
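For anyone who wants to assemble that index URL in code rather than by hand, here is a minimal sketch that follows the URL shape quoted in the reply. The function names codeartifact_index_url and pip_config_command are hypothetical, and the domain, account id, region and repository values are the placeholders from the reply, not real ones. The sketch only builds the pip command and does not run it. It does not address the MLflow question.

```python
import os


def codeartifact_index_url(token, domain, account_id, region, repository):
    """Build a pip index URL in the shape quoted in the reply."""
    return (
        f"https://aws:{token}@{domain}-{account_id}"
        f".d.codeartifact.{region}.amazonaws.com/pypi/{repository}/simple/"
    )


def pip_config_command(index_url):
    """Return the argument list for `pip config set site.index-url <url>`."""
    return ["pip", "config", "set", "site.index-url", index_url]


if __name__ == "__main__":
    # Assumption: the token was exported beforehand, as in the reply.
    token = os.environ.get("CODEARTIFACT_AUTH_TOKEN", "<token>")
    url = codeartifact_index_url(
        token, "my_domain", "111122223333", "region", "my_repo"
    )
    # Built but not executed; pass the list to subprocess.run to apply it.
    command = pip_config_command(url)
    print(command[:4])  # the URL is left out because it embeds the token
```

Keeping the token in an environment variable, as the reply does, avoids writing it into the notebook source.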

- 1377 Views

- 0 replies

- 0 kudos

DLT pipeline failed to access external location with abfss protocol

Dear Databricks Community Members: The symptom: The DLT pipeline failed with the error message: Failure to initialize configuration for storage account storageaccount.dfs.core.windows.net: Invalid configuration value detected for fs.azure.account...

- 2810 Views

- 1 replies

- 0 kudos

SQL Warehouse cluster is always running when configuring Metabase connection

I encountered an issue while using the Metabase JDBC driver to connect to Databricks SQL Warehouse: I noticed that the SQL Warehouse cluster is always running and never stops automatically. Every few seconds, a SELECT 1 query log appears, which I sus...

Will try to remove "preferredTestQuery" or add "idleConnectionTestPeriod" to avoid continuously sending the SELECT 1 query.
