- 1906 Views

- 1 reply

- 1 kudos

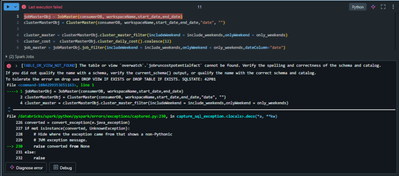

Hi there, I have a simple question. I'm not sure if Databricks supports this, but I'm wondering if there's a way to store the results of a SQL cell in a Spark DataFrame. Or, vice versa, is there a way to take a SQL query in Python (saved as a string variab...

Latest Reply

Hi @ChristianRRL, the results of a SQL cell are automatically made available as a Python DataFrame through the _sqldf variable. You can read more about it here. As for the second part, I'm not sure why you would need it when you can simply run the query like: spark.s...
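
The reply above is cut off, so the following is only a minimal sketch of the two directions being discussed, not necessarily what the author wrote. It assumes a Databricks notebook where a `SparkSession` named `spark` is already defined; `run_query` and the table name `my_table` are hypothetical and used purely for illustration.

```python
# Sketch: run a SQL query held in a Python string and get a Spark DataFrame back.
# `run_query` is a hypothetical helper; `spark` is assumed to be the SparkSession
# that Databricks notebooks provide automatically.

def run_query(spark, query: str):
    """Return the result of `query` as a Spark DataFrame via spark.sql()."""
    return spark.sql(query)

# In a Databricks notebook (the table name is a placeholder):
#   query = "SELECT * FROM my_table LIMIT 10"
#   df = run_query(spark, query)
#   df.show()
#
# Going the other way, after a %sql cell has run, its result is exposed
# to Python cells as the `_sqldf` variable:
#   df_from_sql_cell = _sqldf
```

Because `_sqldf` refers to the most recent SQL cell result, assigning it to another variable right away is likely the safer habit if the result is needed later in the notebook.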

- 10906 Views

- 0 replies

- 2 kudos

Hey everyone, hope you're all doing great! A friend of mine recently went through a data engineering interview at Walmart and shared his experience with me. I thought it would be really useful to pass along what he encountered. The interview had some ...
