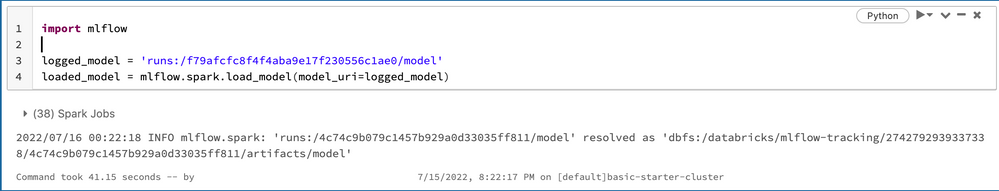

I am trying to load a simple MinMaxScaler model that was logged to a run through Spark's ML Pipeline API, so that I can reuse it. On average it takes 40+ seconds just to load the model with the following example:

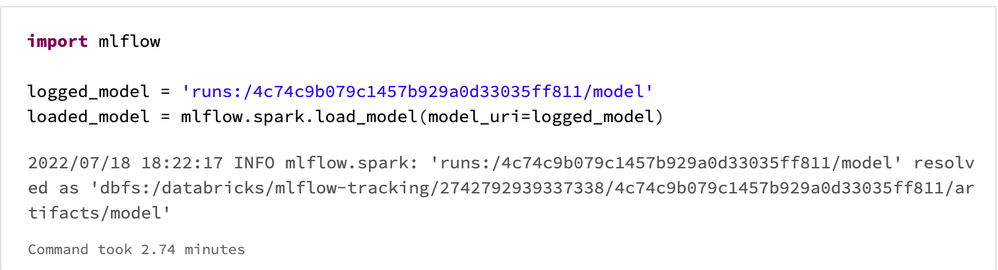

This is fine and the model transforms my data correctly, but I have a scheduled job that has to run for a real-time application, and on some runs this simple model load randomly takes almost 3 minutes, as the output below shows:

I also tried loading with pyfunc instead of spark, but it didn't help. I am running the scheduled job on an all-purpose compute cluster on AWS with an i3 driver and 4 i3 workers that is up 24/7, and 3 minutes to load a model will not work for my real-time needs. Since model loading is slow, I decided to try "model serving".
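For reference, the two loading paths can be timed side by side with something like the minimal sketch below. The helper names (`timed_load`, `spark_loader`, `pyfunc_loader`) and the `runs:/<run_id>/model` URI are placeholders for illustration, not the exact code from my notebook. The MLflow imports are deferred into the loader functions so the timing helper can be run on its own.

```python
import time
from typing import Any, Callable, Tuple


def timed_load(model_uri: str, loader: Callable[[str], Any]) -> Tuple[Any, float]:
    """Call loader(model_uri) and return (model, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    model = loader(model_uri)
    elapsed = time.perf_counter() - start
    return model, elapsed


def spark_loader(model_uri: str) -> Any:
    """Load the logged pipeline with the Spark flavor (assumes mlflow and pyspark are installed)."""
    import mlflow.spark  # deferred import so the helper above has no MLflow dependency

    return mlflow.spark.load_model(model_uri)


def pyfunc_loader(model_uri: str) -> Any:
    """Load the same logged model through the generic pyfunc flavor."""
    import mlflow.pyfunc  # deferred import, same reason as above

    return mlflow.pyfunc.load_model(model_uri)


# Hypothetical usage on the cluster (replace <run_id> with a real run ID):
#   model, seconds = timed_load("runs:/<run_id>/model", spark_loader)
#   print(f"spark flavor loaded in {seconds:.1f}s")
#   model, seconds = timed_load("runs:/<run_id>/model", pyfunc_loader)
#   print(f"pyfunc flavor loaded in {seconds:.1f}s")
```

Timing both loaders in the same job run makes it easier to see whether the slow runs are tied to one flavor or affect both.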

Next, I clicked "register model", then tried model serving for my real-time needs and ran into a separate issue: conda environment creation fails during init because pyspark fails to build. I verified that the model works when loaded straight from the run, but model serving fails even though I followed the guide and simply pressed "enable serving" in the model registry UI. The full logs from the model serving UI are attached below, but the error is this:

"

Failed to build pyspark

...

Conda environment creation failed during pip installation! See error above.

"

I need one of these two issues addressed in order to meet the real-time needs of my application: either faster and more consistent model loading, or model serving that actually works and doesn't fail to build.