08-20-2025 08:18 AM - edited 08-20-2025 08:48 AM

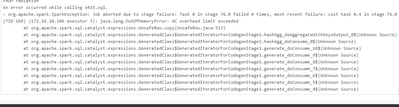

I have a Databricks notebook that writes data from a Parquet file with 4 million records into a new Delta table. It is a simple script. It works fine when I run it from the Databricks notebook on the cluster whose config is in the screenshot. But when I run it through an ADF pipeline, where we spin up a dynamic cluster with the config below, it fails with the error below. Can you please suggest a fix? Thanks in advance.

ADF dynamic PySpark cluster:

ClusterNode: Standard_D16ads_v5

ClusterDriver: Standard_D32ads_v5

ClusterVersion: 15.4.x-scala2.12

Clusterworkers: 2:20

I see the executor memory here is: 19g

offheap memory: 500 MB

Databricks PySpark cluster:

I see the executor memory here is: 12g

offheap memory: 36GB
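One way to compare the two clusters side by side is to read the memory settings from inside the notebook on each of them. The sketch below is a minimal helper, not part of the original script: `report_memory_settings` and `MEMORY_KEYS` are hypothetical names, and it assumes the notebook exposes the usual `spark` session so that `spark.conf.get` can be passed in as the getter.

```python
from typing import Callable, Dict

# Spark memory settings that differ between the two clusters in this thread.
MEMORY_KEYS = [
    "spark.executor.memory",
    "spark.memory.offHeap.enabled",
    "spark.memory.offHeap.size",
]


def report_memory_settings(get: Callable[[str, str], str]) -> Dict[str, str]:
    """Return each memory setting, or 'not set' when the key is absent.

    `get` is any callable shaped like get(key, default).
    """
    return {key: get(key, "not set") for key in MEMORY_KEYS}


# In a notebook (assumption: `spark` is the active SparkSession):
#     for key, value in report_memory_settings(spark.conf.get).items():
#         print(f"{key} = {value}")

# Stand-in example using a plain dict instead of a live cluster.
# The values mirror the figures quoted for the ADF cluster in this post.
adf_cluster = {"spark.executor.memory": "19g", "spark.memory.offHeap.size": "500m"}
print(report_memory_settings(adf_cluster.get))
```

Running the same call on both clusters makes it easier to see which setting actually differs before changing anything.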

08-20-2025 09:28 AM

Hello @CzarR ,

At first glance, it looks like an off-heap memory issue, which is why you would see a "GC overhead limit exceeded" error.

Can you try enabling off-heap memory and adjusting its size in the linked service where you define the cluster's Spark configuration? Apply these settings:

"spark_conf": {

"spark.memory.offHeap.enabled": "true",

"spark.memory.offHeap.size": "36g"

}

Hope that helps. Let me know how it goes and we can look into other options if needed.

Best, Ilir
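If the cluster definition is generated by a script, the suggested settings can be built and checked before they are pasted into the linked service. This is only a sketch under stated assumptions: `offheap_spark_conf` is a hypothetical helper, the size check is a simplified pattern rather than Spark's full size-string grammar, and the `spark_conf` wrapper key simply mirrors the fragment in the reply above.

```python
import json
import re

# Simplified check: digits followed by a k/m/g/t suffix, e.g. "500m" or "36g".
# This is an assumption for illustration, not Spark's complete size grammar.
_SIZE_PATTERN = re.compile(r"^[1-9]\d*[kmgt]$")


def offheap_spark_conf(size: str) -> dict:
    """Build the off-heap settings from the reply for a given size string."""
    if not _SIZE_PATTERN.match(size):
        raise ValueError(f"unexpected size string: {size!r}")
    return {
        "spark_conf": {
            "spark.memory.offHeap.enabled": "true",
            "spark.memory.offHeap.size": size,
        }
    }


# Render the fragment as JSON, ready to copy into the cluster definition.
print(json.dumps(offheap_spark_conf("36g"), indent=2))
```

Keeping the size as a parameter makes it easy to try smaller values first and raise it only if the job still fails.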

08-20-2025 10:01 AM

Hi, trying that now. Will let you know. Thanks.

08-20-2025 12:19 PM

I bumped it up to 8 GB and it worked. Thank you so much for the help.