07-03-2023 10:51 AM

On a cluster in shared access mode, you can access the notebook context through the databricks_utils module that ships with MLflow (mlflow.utils.databricks_utils). It lets you retrieve the notebook_id, cluster_id, notebook_path, etc. on the shared cluster. You will need to install MLflow or use the ML Databricks Runtime (DBR), which already includes it.

%pip install mlflow

from mlflow.utils import databricks_utils

notebook_id = databricks_utils.get_notebook_id()  # Extract the notebook ID

notebook_path = databricks_utils.get_notebook_path()  # Extract the notebook path

print(notebook_id)

print(notebook_path)

08-10-2023 07:26 AM

Any updates on this problem?

01-18-2024 07:06 AM - edited 01-18-2024 07:12 AM

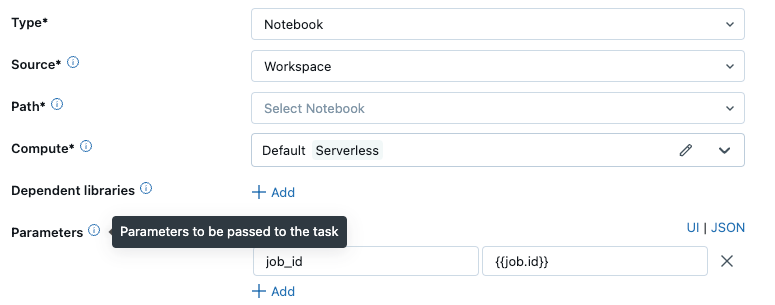

Depending on what you're trying to do with dbutils.notebook.entry_point.getDbutils().notebook().getContext().toJson(), there may be workarounds. If all you need is information like the job_id, run_id, time of run, etc., you can use dynamic value references in the job. For instance, in a notebook, you could write:

dbutils.widgets.text("job_id","")

job_id = dbutils.widgets.get("job_id")

Then in your task, when you specify the parameters, you can use a dynamic value reference to pass the appropriate runtime value to the widget, like so:
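In case the screenshot does not come through, here is a rough sketch of what the task parameters could look like, written as a Python dict in the shape of a notebook task definition. The notebook path is a placeholder I made up, and I am assuming the `{{job.id}}` dynamic value reference is the one you want; check the job UI for the references available in your workspace.

```python
# Sketch only: a notebook task fragment with one parameter.
# The key "job_id" matches the widget name declared in the notebook;
# the value is a dynamic value reference resolved by the job at run time.
notebook_task = {
    # Placeholder path, not a real notebook
    "notebook_path": "/Workspace/Users/someone@example.com/my_notebook",
    "base_parameters": {
        "job_id": "{{job.id}}",
    },
}

# The notebook then reads the resolved value with dbutils.widgets.get("job_id")
print(notebook_task["base_parameters"])
```

In the job UI the same thing is a single key/value row under the task's parameters, with job_id as the key and {{job.id}} as the value.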

You can also define this as a job parameter if you need the value passed to every task in the job.

Hope this helps someone who is using the context json for this simple purpose!

01-29-2024 09:49 AM

I faced the same issue when switching to a Shared Access cluster and found that you can run:

dbutils.notebook.entry_point.getDbutils().notebook().getContext().safeToJson()

Hope this helps
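If it is useful, here is a minimal sketch of pulling individual fields out of the string that safeToJson() returns. I am assuming the payload keeps its values under a nested "attributes" object and that keys such as notebook_path and notebook_id are present; both are assumptions, so inspect the output on your own cluster first. The helper name extract_context_fields and the sample payload are made up for illustration.

```python
import json


def extract_context_fields(raw_json, keys):
    """Return the requested keys from a notebook-context JSON string.

    Looks under a nested "attributes" object first (assumed layout),
    then at the top level. Keys that are not found map to None.
    """
    ctx = json.loads(raw_json)
    attributes = ctx.get("attributes") or {}
    return {k: attributes.get(k, ctx.get(k)) for k in keys}


# Stand-in payload so the sketch runs anywhere. On Databricks the string
# would come from:
#   dbutils.notebook.entry_point.getDbutils().notebook().getContext().safeToJson()
sample = '{"attributes": {"notebook_path": "/Users/someone/demo", "notebook_id": "123"}}'

fields = extract_context_fields(sample, ["notebook_path", "notebook_id", "jobId"])
print(fields)
```

Returning None for missing keys keeps the notebook from failing when a field is not exposed on a given cluster type.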

04-10-2024 06:50 PM

This works here, thank you!

10-18-2024 06:15 PM

To replace dbutils.notebook.entry_point.getDbutils().notebook().getContext().toJson() and related APIs, try:

from dbruntime.databricks_repl_context import get_context

token = get_context().apiToken

host = get_context().browserHostName

get_context().__dict__
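Since the full `__dict__` appears to include the API token, it may be safer to copy out only the attributes you need before logging or displaying anything. A minimal sketch follows. The helper name context_summary is made up, and apart from browserHostName the attribute names (clusterId, notebookPath) are assumptions, so check them against `get_context().__dict__` on your runtime.

```python
from types import SimpleNamespace


def context_summary(ctx, names=("browserHostName", "clusterId", "notebookPath")):
    """Collect selected attributes from a context object.

    Attributes that do not exist on the object are returned as None,
    and anything not listed in `names` (such as apiToken) is left out.
    """
    return {name: getattr(ctx, name, None) for name in names}


# Stand-in object so the sketch runs anywhere. On Databricks you would use:
#   from dbruntime.databricks_repl_context import get_context
#   ctx = get_context()
fake_ctx = SimpleNamespace(
    browserHostName="example.cloud.databricks.com",
    apiToken="secret",
)

summary = context_summary(fake_ctx)
print(summary)
```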