Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-11-2022 10:00 AM

Hi @vinita shinde

I don't know if It meets your requirement, but you can try something like that

import pyspark.sql.functions as F

from pyspark.sql import Row

def is_different(col1, col2, cols):

if col2 in cols:

return F.col(col1) == F.col(col2)

else:

return F.col(col1).alias(col1)

def find_difference(data1, data2, id_columns):

cols = data1.columns

data_difference = data1.subtract(data2) # First you find the difference in rows

data_difference = data_difference.join(data2.select(*[F.col(i).alias(f'b_{i}') if i not in id_columns else F.col(i).alias(i) for i in cols]), on = id_columns, how = 'left')

# you join the differences with the second dataframe

return data_difference.select(*[is_different(x, f"b_{x}",data_difference.columns) for x in cols]) # then you find out which columns cause the differences

# df1_spark first dataframe

# df2_spark second datafame

# third option is a list of ids of the Row

display(find_difference(df1_spark, df2_spark, ['ID'] ))

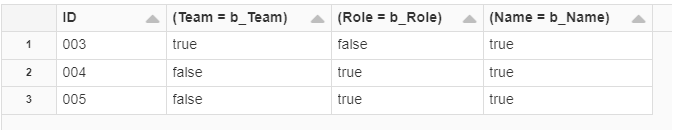

The results will be somethong like that