Endpoint deployment is very slow

09-15-2025 10:41 AM

Hi team,

I am testing some changes in a UAT / DEV environment and noticed that model endpoints are very slow to deploy. Since the environment is only for testing and is not serving any production traffic, is there a way to speed this process up? I don't need the most stable or secure rollover in this scenario.

Thanks

Gurpreet

Labels: Feature Store, Model Serving

09-15-2025 09:01 PM

Hi @gbhatia,

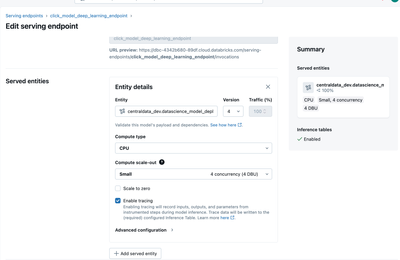

I’d need a few more details to fully understand your deployment, but in general these settings can help in DEV/UAT:

- Compute type: CPU (cheaper and sufficient for testing).
- Compute scale-out: Small (0–4 concurrency, 0–4 DBU), since you don’t need high concurrency in DEV/UAT.
- Scale to zero: keep it disabled to avoid cold starts, so the endpoint is always ready. This increases costs slightly but makes testing much faster.

For production, the recommended practice is to use larger instance sizes, more replicas, and to enable scale to zero only for truly intermittent workloads.
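As a rough sketch, the settings above could be expressed as a request body for the serving endpoints REST API. This assumes the field names `workload_type`, `workload_size`, and `scale_to_zero_enabled` under `config.served_entities`, which is my understanding of that API; please check them against the documentation linked in this reply. The helper name, endpoint name, and model name are hypothetical placeholders.

```python
import json


def build_dev_endpoint_payload(endpoint_name, model_name, model_version):
    """Build a create-endpoint body with small, CPU-only, always-on settings.

    Field names are assumed from the serving endpoints REST API;
    verify them against the official documentation before use.
    """
    return {
        "name": endpoint_name,
        "config": {
            "served_entities": [
                {
                    "entity_name": model_name,
                    # The version is passed as a string in this sketch.
                    "entity_version": str(model_version),
                    "workload_type": "CPU",          # Compute type: CPU
                    "workload_size": "Small",        # Compute scale-out: Small
                    "scale_to_zero_enabled": False,  # avoid cold starts
                }
            ]
        },
    }


if __name__ == "__main__":
    # Hypothetical endpoint and model names, for illustration only.
    payload = build_dev_endpoint_payload(
        "uat-online-model", "main.uat.online_model", 1
    )
    print(json.dumps(payload, indent=2))
```

The resulting JSON would be sent with your workspace credentials when creating the endpoint. The same settings can be chosen in the serving UI instead.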

https://docs.databricks.com/aws/en/machine-learning/model-serving/create-manage-serving-endpoints

Data Engineer | Machine Learning Engineer

LinkedIn: linkedin.com/in/wiliamrosa

09-20-2025 09:28 AM

Hi @gbhatia,

Let us know if the solution worked for you.

Data Engineer | Machine Learning Engineer

LinkedIn: linkedin.com/in/wiliamrosa

09-22-2025 07:53 AM - edited 09-22-2025 07:54 AM

Hi @WiliamRosa

Thanks for your response on this. I have been using the settings you described above, with the exception of `scale_to_zero`. Please find attached a screenshot of the endpoint settings.

My deployment is a simple PyTorch deep learning model wrapped in a `sklearn` Pipeline wrapper. So, something like this:

```
import mlflow.pyfunc

class OnlineModel(mlflow.pyfunc.PythonModel):
    def __init__(self, deep_learning_model):
        self.model = deep_learning_model  # PyTorch model
```

Every small change to the model takes 20-30 minutes of endpoint updating, which significantly delays my testing and development. Is there a way to reduce this wait time, or is this simply how long a Databricks endpoint update takes, with not much I can do about it?

Gurpreet