- 2713 Views

- 3 replies

- 1 kudos

How to use Databricks secrets in MLflow conda dependencies?

Hi! Do you know if it's correct to use the plain user and token for installing a custom dependency (an internal Python package) in an MLflow registered model? (It's the only way I can get it working; otherwise the dependency can't be installed.) It works,...

Did someone solve this? I'm currently forced to download some libraries through an Artifactory PyPI mirror, but I don't want my secrets pasted into the conda.yaml file as plain text.

- 15686 Views

- 7 replies

- 3 kudos

Resolved! How to PREVENT mlflow's autologging from logging ALL runs?

I am logging runs from a Jupyter notebook. The cells that have `mlflow.sklearn.autolog()` behave as expected, but the cells where .fit() is called on sklearn estimators are also being logged as runs without explicitly mentioning `mlflo...

Good question. MLflow autologging can easily capture more runs than expected if it is not configured properly, and managing it carefully improves experiment tracking. Similar control and optimization are important in bussid mod workflows, where users fine-tune ...
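
For readers hitting the same behavior, here is a minimal sketch of one way to stop unintended runs, assuming they come from autologging being enabled for the whole session. The helper name `disable_autologging` is hypothetical; `mlflow.autolog(disable=True)` is the MLflow call it wraps.

```python
def disable_autologging() -> None:
    """Turn off MLflow autologging for every supported library in this session."""
    # Imported inside the function so the helper can be defined
    # even before MLflow is installed in the environment.
    import mlflow

    # disable=True switches autologging off instead of on.
    mlflow.autolog(disable=True)
```

Calling `disable_autologging()` in the first notebook cell, and then `mlflow.sklearn.autolog()` only where runs should be recorded, is one possible arrangement. Whether it fits depends on how autologging was switched on in the workspace.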

- 285 Views

- 2 replies

- 0 kudos

Memory error in LightGBM training data processing

I am developing a LightGBM model on Databricks, and I am using the Native API because it offers the widest range of options and allows me to try various approaches. The training data is loaded from a table in the Catalog as a Spark DataFrame. However,...

- 905 Views

- 2 replies

- 1 kudos

Resolved! Which types of model serving endpoints have health metrics available?

I am retrieving a list of model serving endpoints for my workspace via this API: https://docs.databricks.com/api/workspace/servingendpoints/list and then retrieving health metrics for each one with: https://[DATABRICKS_HOST]/api/2.0/serving-end...

Your observation is correct: this behavior is expected. Endpoints with entity_type = FOUNDATION_MODEL_API do not expose health metrics via the /metrics endpoint, which is why you’re getting 404 responses. These endpoints are fully managed, multi-tenant...
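
The filtering this reply implies can be sketched as below: skip endpoints whose entity type is FOUNDATION_MODEL_API before asking for metrics. The record shape (`name` and `entity_type` keys), the function name, and the sample values are simplified assumptions for illustration. The real list response is nested differently, so adapt the lookup to it.

```python
from typing import Iterable

# Entity type that, per the reply above, does not expose /metrics.
NO_METRICS_ENTITY_TYPES = {"FOUNDATION_MODEL_API"}


def endpoints_to_query(endpoints: Iterable[dict]) -> list[str]:
    """Return the names of endpoints worth querying for health metrics.

    Assumes a simplified, hypothetical record shape:
    {"name": str, "entity_type": str}.
    """
    return [
        ep["name"]
        for ep in endpoints
        if ep.get("entity_type") not in NO_METRICS_ENTITY_TYPES
    ]


# Illustrative data only; names and the CUSTOM_MODEL value are made up for the example.
sample = [
    {"name": "churn-model", "entity_type": "CUSTOM_MODEL"},
    {"name": "chat-llm", "entity_type": "FOUNDATION_MODEL_API"},
]
print(endpoints_to_query(sample))
```

Filtering up front avoids treating the expected 404 responses as failures in whatever loop collects the metrics.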

- 320 Views

- 1 reply

- 0 kudos

Resolved! AWS GovCloud Feature Availability Question

Hi! I'm trying to determine whether Mosaic Vector Search (or is it simply called Vector Search?) is available on AWS GovCloud. This shows it is not: https://docs.databricks.com/aws/en/resources/feature-region-support And it's not mentioned here: https://docs...

Hi @MattBuck, it's not available on AWS GovCloud. 1) The first link you attached is the authoritative source for feature availability by region. If you can't find a feature there, it is not available in that region. 2) And I think this lim...

- 326 Views

- 1 reply

- 0 kudos

Resolved! Recommended Python UDFs for On-Demand Feature Computation in Databricks

The Databricks documentation page on on-demand feature computation (https://docs.databricks.com/aws/en/machine-learning/feature-store/on-demand-features#what-are-on-demand-features) mentions using Python UDFs for computing on-demand features. What ty...

Hi @nb92, only scalar Python UDFs are allowed for on-demand feature computation. This page provides the recommended approach. Best regards,

- 1149 Views

- 7 replies

- 3 kudos

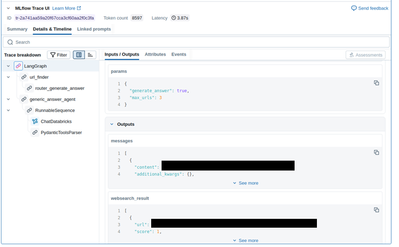

MLflow Detailed Trace view doesn't work in some workspaces

I've created a Databricks Model Serving endpoint that serves an MLflow pyfunc model. The model uses LangChain and I'm using mlflow.langchain.autolog(). At my company we have some production(-like) workspaces where users cannot e.g. run Notebooks and ...

Funnily enough, the problem also disappeared on my end this morning. Previously, I saw a networking issue in my logs, but that also went away. Let's hope it stays that way!

- 1202 Views

- 1 reply

- 0 kudos

Resolved! Using Qwen with vLLM

There are many conflict and dependency issues when trying to install vLLM and use the Qwen models (on serverless), even the v2 families. I tried following this guide https://docs.databricks.com/aws/en/machine-learning/sgc-examples/tutorials/sgc-raydat...

Hi @pfzoz -- the "Model architectures failed to be inspected" error you are hitting is a well-known compatibility issue between vLLM, the transformers library, and the Qwen2/2.5-VL model family. The root cause is that vLLM's model registry subprocess...

- 904 Views

- 3 replies

- 0 kudos

Resolved! TrainingArguments fails

Hello, I am working on an ML project for text classification and I have a problem. The following piece of code stalls completely. It prints 'start' but never 'end'. from transformers import TrainingArguments print("start") args = TrainingArguments(outpu...

Hello @lingareddy_Alva, thank you for your reply. I have since been given a cluster with the ML Runtime and the code now works. So I consider the problem solved.

- 2341 Views

- 2 replies

- 0 kudos

Identity Resolution

Looking for best solutions for identity resolution. I already have deterministic matching. Exploring probabilistic solutions. Any advice for me?

Check out the open-source Zingg, which runs natively within Databricks: https://github.com/zinggAI/zingg
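
To make "probabilistic" concrete, here is a small sketch of Fellegi-Sunter style match weights, a classic formulation of probabilistic record linkage. It is not how Zingg works internally, and the field names and the m/u probabilities are made-up assumptions. In practice those probabilities are estimated from data.

```python
import math

# Hypothetical per-field probabilities:
#   m = P(field agrees | records are the same person)
#   u = P(field agrees | records are different people)
M_U = {
    "name": (0.95, 0.01),
    "email": (0.90, 0.001),
    "city": (0.85, 0.10),
}


def match_weight(rec_a: dict, rec_b: dict) -> float:
    """Sum log2 likelihood ratios over fields; higher means more likely a match."""
    total = 0.0
    for field, (m, u) in M_U.items():
        value_a, value_b = rec_a.get(field), rec_b.get(field)
        agree = value_a is not None and value_a == value_b
        # Agreement adds log2(m/u); disagreement adds log2((1-m)/(1-u)).
        total += math.log2(m / u) if agree else math.log2((1 - m) / (1 - u))
    return total


a = {"name": "ana diaz", "email": "ana@example.com", "city": "lima"}
b = {"name": "ana diaz", "email": "ana@example.com", "city": "cusco"}
print(match_weight(a, b))
```

Pairs scoring above an upper threshold would be linked, pairs below a lower threshold rejected, and the band in between sent for review. The thresholds are a tuning choice.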

- 298 Views

- 1 reply

- 0 kudos

Job compute fails due to BQ permissions

Hello, my Databricks workspace is associated with GCP project analytics. But my team and I mostly work on GCP project data-science, which contains the only BQ dataset that we have write access to. I'm trying to automate a pipeline to run on job compute a...

What identity is the job running as? Do you have any settings on the all-purpose cluster that you are not setting on the job-cluster? Maybe you need to provide roles/bigquery.jobUser on project analytics to the job compute service account?

- 1177 Views

- 3 replies

- 1 kudos

Resolved! Unable to Access Azure Blob Storage from Databricks Community Edition Notebook

Hi everyone, I’m currently using the Databricks Community Edition and trying to access data stored in Azure Blob Storage from my .ipynb notebook. The storage account is part of my student free Azure subscription. However, I’m not able to establish a co...

Hi, I think you are referring to Databricks Free Edition, which doesn't support connections to external storage such as Azure Blob Storage. Thanks, Emma

- 817 Views

- 1 reply

- 1 kudos

Resolved! Issue Running Job on Serverless GPU

I have a job that runs a notebook, the notebook uses serverless GPU (A10) and it keeps failing with a "Run failed with error message Cluster 'xxxxxxxxxxx' was terminated. Reason: UNKNOWN (SUCCESS)". The base environment is 'Standard v4' and I have tr...

Hi @rtglorenabasul, Thanks for sharing the details. The behaviour you’re seeing is consistent with an issue in how the job is bringing up Serverless GPU compute, rather than with the notebook code itself. Having done some checks, that error usually m...

- 1630 Views

- 4 replies

- 3 kudos

Resolved! Generic Spark Connect ML error. The fitted or loaded model size is too big.

When I train models in the serverless environment V4 (Premium Plan), the system occasionally returns the error message listed below, especially after running the model training code multiple times. We have tried creating new serverless sessions, whic...

Hi @jayshan, I'm sorry for the delayed response to your question. And, thanks for the extra details and for sharing your workaround. This behaviour is tied to how Spark Connect ML works in serverless mode, rather than a traditional JVM/GC leak. On se...

- 1660 Views

- 5 replies

- 3 kudos

Resolved! Vector search index initialization very slow

Hello, I am creating a vector search index and selected Compute embeddings for a Delta table with 19M records. The Delta table has only two columns: ID (selected as index) and Name (selected for embedding). The embedding model is databricks-gte-large-en. Ind...

Why doesn't deltaSync compute the embeddings in parallel instead of sequentially? That's a major gap in the architecture, no?

