- 5718 Views

- 3 replies

- 2 kudos

Table name as a parameter in SQL UDF

Hello experts, We would like to create a UDF that takes a table name as an input parameter. Please check the simple example below: CREATE OR REPLACE FUNCTION F_NAME(v_table_name STRING, v_w...

Did you find a solution? I'm having the same problem.

- 5626 Views

- 3 replies

- 2 kudos

Resolved! Hello Community Users, We recently announced a new Large Language Models (LLM) program, the first of its kind on edX! Learn how to develop production...

Hello Community Users, We recently announced a new Large Language Models (LLM) program, the first of its kind on edX! Learn how to develop production-ready LLM applications and dive into the theory behind foundation models. Taught by industry experts...

Hi @163050, you can download the DBC file from the course; the LLM course is already available in the Customer Academy.

- 6929 Views

- 5 replies

- 1 kudos

Resolved! Concatenating strings based on previous row values

Consider the following input:

| ID | PrevID |
| --- | --- |
| 33 | NULL |
| 272 | 33 |
| 317 | 272 |
| 318 | 317 |

I need to somehow get the following result:

| Result |
| --- |
| /33 |
| /33/272 |
| /33/272/317 |
| /33/272/317/318 |

I need to do this in SQL a...
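To make the expected result concrete, here is a minimal Python sketch of the logic only, not the SQL solution the question asks for. The function name `build_paths` and the `(ID, PrevID)` tuple layout are assumptions made for illustration. It assumes each ID appears once and that the PrevID links contain no cycles.

```python
from typing import Dict, List, Optional, Tuple


def build_paths(rows: List[Tuple[int, Optional[int]]]) -> List[str]:
    """Return one '/'-separated ancestor path per input row, in input order.

    Hypothetical helper: rows are (ID, PrevID) pairs, PrevID is None for a root.
    Assumes unique IDs and no cycles in the PrevID links.
    """
    parent: Dict[int, Optional[int]] = {row_id: prev_id for row_id, prev_id in rows}
    cache: Dict[int, str] = {}

    def path(row_id: int) -> str:
        # Reuse a path that was already computed for this ID.
        if row_id in cache:
            return cache[row_id]
        prev_id = parent.get(row_id)
        # A root (no previous ID) starts a new path; otherwise extend the parent's path.
        result = f"/{row_id}" if prev_id is None else f"{path(prev_id)}/{row_id}"
        cache[row_id] = result
        return result

    return [path(row_id) for row_id, _ in rows]


if __name__ == "__main__":
    sample = [(33, None), (272, 33), (317, 272), (318, 317)]
    for line in build_paths(sample):
        print(line)
```

Each row's result is its parent's result plus its own ID, which is the recurrence any SQL formulation would also have to express.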



- 2843 Views

- 1 replies

- 6 kudos

docs.databricks.com

New unified Databricks navigation: Databricks plans to enable the new navigation experience (Public Preview) by default for all users. You’ll be able to opt out by clicking Disable new UI in the sidebar. The goal of the new experience is to reduce click...

Hi @Priyadarshini G, thank you for providing accurate and valuable information. Best regards

- 8120 Views

- 2 replies

- 2 kudos

Resolved! "Photon ran out of memory" when trying to get the unique IDs from a SQL query

I am trying to get all unique IDs from a SQL query and I always run out of memory: select concat_ws(';',view.MATNR,view.WERKS) from hive_metastore.dqaas.temp_view as view join hive_metastore.dqaas.t_dqaas_marc as marc on marc.MATNR = view.MATNR where view...

Hi @Anil Kumar Chauhan, we haven't heard from you since the last response from @Werner Stinckens. Kindly share the information with us, and in return, we will provide you with the necessary solution. Thanks and regards

- 2293 Views

- 1 replies

- 7 kudos

Train machine learning models: How can I take my ML lifecycle from experimentation to production?

Note: the following guide is primarily for Python users. For other languages, please view the following links: • Table batch reads and writes • Create a table in SQL • Visualizing data with DBSQL. This step-by-step guide will get your data...

I learned a lot from your post. It is very clear. Thank you. Keep sharing posts like this; they are helpful.

- 2133 Views

- 2 replies

- 3 kudos

www.databricks.com

Hello Dolly: Democratizing the magic of ChatGPT with open models. Databricks has just released a groundbreaking new blog post exploring ChatGPT, an open-source language model with the potential to transform the way we interact with technology. From cha...

Let's get candid! Let me know your initial thoughts about LLMs, ChatGPT, and Dolly.

- 4832 Views

- 3 replies

- 1 kudos

Can I run a custom function that contains a trained ML model or access an API endpoint from within a SQL query in the SQL workspace?

I have a dashboard and I'd like the ability to take the data from a query and then predict a result from a trained ML model within the dashboard. I was thinking I could possibly embed the trained model within a library that I then import to the SQL w...

@Erik Shilts: Yes, it is possible to use a trained ML model in a dashboard in Databricks. Here are a few approaches you could consider: Embed the model in a Python library and call it from SQL: You can train your ML model in Python and then save it a...

- 3504 Views

- 5 replies

- 1 kudos

Latest Blog Posts, January 13 - 20. Did you get a chance to look at the most recent blog posts? Here is some noteworthy content from the past week that i...

Latest Blog Posts, January 13 - 20. Did you get a chance to look at the most recent blog posts? Here is some noteworthy content from the past week that is worth the read. What’s New With SQL User-Defined Functions: In this blog, we describe several enhanc...

Thanks, @Sujitha Ramamoorthy, for sharing these with the community. They are insightful and worth reading.

- 2172 Views

- 1 replies

- 0 kudos

Weekly Release Notes Recap: Here’s a quick recap of the latest release notes updates from the past week. Databricks platform release notes, January 1...

Weekly Release Notes Recap: Here’s a quick recap of the latest release notes updates from the past week. Databricks platform release notes, January 13 - 19, 2023. Cluster policies now support limiting the max number of clusters per user: You can now use c...


- 4178 Views

- 1 replies

- 1 kudos

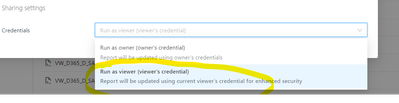

Permission errors.

I get permission errors when I try to modify some SQL queries in the SQL module. We are two colleagues working on one project, so we built a data model together. Sometimes we need to correct each other and access the SQL code, but unfortunately we don't have a...

Don't worry here: give permission as a viewer only, or else they will use your credentials there. This doc will be helpful: https://docs.databricks.com/security/access-control/query-acl.html Thanks, Aviral Bhardwaj

- 6746 Views

- 1 replies

- 4 kudos

Resolved! Insert into delta table fails

Hello experts. We are trying to execute an insert command with fewer columns than the target table: Insert into table_name (col1, col2, col10) Select col1, col2, col10 from table_name2. However, the above fails with: Error in SQL statement: DeltaAnalysisExce...


Hi @ELENI GEORGOUSI, yes. When you do an insert, the schema you provide should match the target schema, otherwise it throws an error. But you can still insert the data using another approach. Create a dataframe with your data having fewer colu...
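As a hedged illustration of the schema-matching point in the reply, the sketch below takes a different route from the dataframe approach the reply starts to describe: it builds an INSERT statement whose SELECT list covers every target column and pads the missing ones with NULL. The helper name `build_padded_insert` and the extra target column `col3` are hypothetical, and the sketch assumes the target columns are nullable and that all identifiers come from trusted input, since nothing is quoted or escaped.

```python
from typing import List


def build_padded_insert(
    target_table: str,
    target_columns: List[str],
    source_table: str,
    source_columns: List[str],
) -> str:
    """Build an INSERT that supplies every target column, using NULL for gaps.

    Hypothetical helper for illustration; identifiers are inserted as-is,
    so only pass names you trust.
    """
    unknown = [col for col in source_columns if col not in target_columns]
    if unknown:
        raise ValueError(f"Columns not present in target table: {unknown}")

    provided = set(source_columns)
    # Keep the target's column order so the SELECT lines up positionally.
    select_list = [
        col if col in provided else f"NULL AS {col}" for col in target_columns
    ]
    return (
        f"INSERT INTO {target_table} "
        f"SELECT {', '.join(select_list)} FROM {source_table}"
    )


if __name__ == "__main__":
    print(
        build_padded_insert(
            "table_name",
            ["col1", "col2", "col3", "col10"],  # col3 is a made-up extra column
            "table_name2",
            ["col1", "col2", "col10"],
        )
    )
```

Whether NULL padding is acceptable depends on the table's constraints and defaults, so treat this as a starting point rather than a confirmed fix for the error in the question.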

- 2832 Views

- 1 replies

- 1 kudos

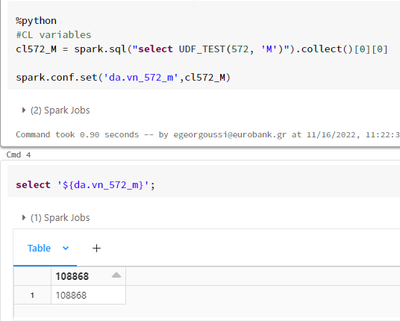

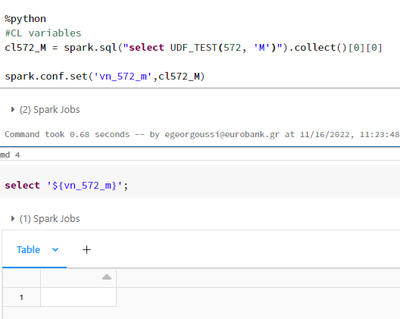

Pass parameter from python to SQL - Null result

Hello. Could someone please explain, in the example below, why having the prefix "da" in the parameter name allows us to select the parameter value, while not having it returns a null value? Correct value. Null value. Thank you in advance.


- 1987 Views

- 1 replies

- 3 kudos

Can the HTML behind a SQL visualisations be accessed?

We are using MLflow to manage the usage of some self-service notebooks. This involves logging parameters, tables and figures. Figures are logged using: mlflow.log_figure(figure=fig, artifact_file="visual/fig.html"). Usually the fig object is gener...

There is no way to access the HTML used. You can download the images. The editor uses Redash, so you can try looking at that library for more information.

- 2702 Views

- 3 replies

- 0 kudos

EOFError trying to assign a model using a custom module

I'm in a Data Science Bootcamp, and the final case study includes data preprocessing (done), using a linear regression model on the data, then porting to SQL for visualization. The model build uses custom python code provided as part of the exercise....

Hi @Joe DiGiovanni, just wanted to check in: were you able to resolve your issue, or do you need more help? We'd love to hear from you. Thanks!
