Databricks Platform Discussions

Dive into comprehensive discussions covering various aspects of the Databricks platform. Join the co...

Engage in vibrant discussions covering diverse learning topics within the Databricks Community. Expl...

Hi Team, I cleared my Professional Data Engineer exam 2 days ago. I received an email after clearing the exam stating that I would receive a digital badge with credentials within 48 hours. So far I have not received it. Please let me know how much time or how many days it will take to receive...

Hi all, I set up a serverless endpoint for my agent via an S-account. This S-account is also granted access to another serverless endpoint. During the inference of my endpoint, I use the Databricks SDK to connect to the other endpoint without explicit...

@pikachu89 wrote: Hi all, I set up a serverless endpoint for my agent via an S-account. This S-account is also granted access to another serverless endpoint. During the inference of my endpoint, I use the Databricks SDK to connect to the other endpoint...

Hi everyone, the situation: I have a Declarative pipeline. The pipeline consists of several .py files. file1.py: creates a Materialized View: description. file2.py: creates a 2nd Materialized View by reading a table "transactions" and reading the MV "descri...

Hi @cdn_yyz_yul, My best guess is that the issue is being triggered by the array-flattening step rather than by the join itself. In Databricks, materialized views are maintained via refresh logic, and the public documentation on incremental refresh f...

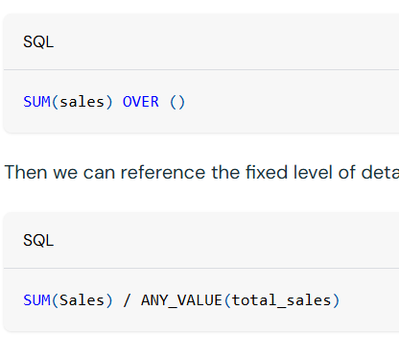

I'm trying to implement a percent-of-total measure in a pivot table. I succeeded in creating a measure using the below formula. However, I want this measure to recognize global filter selections. For example, I want to show emissions share by category. ...

Hi @amekojc, The approach in your screenshot is the fixed LOD pattern, where you first define a total such as SUM(emissions) OVER () and then divide the grouped value by that total. That approach works for a basic percent-of-total calculation, but i...
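To make the filter question concrete, here is a minimal Python sketch of a percent-of-total calculation in which the denominator is computed after the filter selections are applied. It is not dashboard measure syntax and not necessarily what the reply goes on to recommend; the function name `percent_of_total`, the `region` column, and the sample rows are all hypothetical and only illustrate the order of operations.

```python
from collections import defaultdict

def percent_of_total(rows, filters=None):
    """Return each category's share of total emissions.

    rows: list of dicts with hypothetical keys 'category', 'region', 'emissions'.
    filters: optional dict mapping a column name to the set of allowed values.
    """
    filters = filters or {}
    # Apply the filter selections first, so the total below respects them.
    kept = [r for r in rows if all(r[col] in allowed for col, allowed in filters.items())]
    total = sum(r["emissions"] for r in kept)
    if total == 0:
        return {}
    by_category = defaultdict(float)
    for r in kept:
        by_category[r["category"]] += r["emissions"]
    return {cat: value / total for cat, value in by_category.items()}

# Hypothetical sample data, for illustration only.
rows = [
    {"category": "Transport", "region": "EU", "emissions": 30.0},
    {"category": "Energy", "region": "EU", "emissions": 70.0},
    {"category": "Transport", "region": "US", "emissions": 50.0},
    {"category": "Energy", "region": "US", "emissions": 50.0},
]

shares_all = percent_of_total(rows)                      # total over every row
shares_eu = percent_of_total(rows, {"region": {"EU"}})   # total over EU rows only
```

With no filter, the shares are taken against all 200 units of emissions; with the hypothetical `region` filter, they are taken against the 100 units that remain, which is the behaviour the question is asking for.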

I have been thinking a lot about something Ali Ghodsi (CEO - Databricks) said recently. In a Bloomberg interview, he stated: "We already have artificial general intelligence. We don't need AI to get smarter. It is just lacking context." After years of...

Is there any practice exam available? I'd like to practice before taking the real test. Thanks,

Hello, I can't seem to find the practice exam with the link provided above. Can you please send the direct link? Thank you!

Just cleared the Databricks Certified GenAI Engineer Associate exam with 88% and wanted to share what actually helped. The prep experience was a little frustrating. Official materials are scattered across Academy courses, documentation, and notebooks with...

I am trying to read a MongoDB collection using spark.read.format("mongodb"). However, when I attempt to display the collection, I receive the error: "UC command is not supported without recommendation." Please help resolve this issue.

Hi @shan-databricks, This is expected behaviour on Unity Catalog shared or standard compute. The mongodb Spark data source path can fail there with UC_COMMAND_NOT_SUPPORTED.WITHOUT_RECOMMENDATION / SQLSTATE 0AKUC. The public docs for that are UC_COMM...

AI Governance via UC MCP Connection: I cannot figure out how to make the policy option show up in the GUI to allow me to attach a policy to the MCP server. The "Policies" option is not showing for me even though I can confirm I have it enabled: See im...

Hey, contact your Databricks account representative or support team and request enrollment in the Service Policies for MCP gated beta.

Hi all, We're hitting a sudden failure with Databricks' built-in Python sandbox function system.ai.python_exec and want to check whether others are seeing the same thing, since it looks region-specific. Environment: Cloud: Azure Databricks, Region: West ...

Hi, just had a quick look into this and it looks unlikely to be a customer-side permissions issue. system.ai.python_exec is a built-in Databricks function/tool, and there have been region-specific cases where the function was unavailable because it h...

When Delta Sharing is enabled and a link is shared, I understand that the data transfer happens directly and not through the sharing server. I'm curious how costs are calculated. Is the entity making the share available charged for data egress and ...

I am also curious about this. Is there any way to monitor this apart from the system.billing.usage table?

After reviewing a surprising number of Databricks discussions around SCD2, CDC, historical reporting and temporal joins, I noticed that most historical data modeling challenges seem to fall into a small set of recurring patterns: Historical Backfill, La...
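For readers unfamiliar with the temporal joins mentioned above, here is a small Python sketch of the basic as-of lookup against SCD2-style versioned rows. It is not taken from the post; the function name `as_of`, the `customer_id`, `tier`, `valid_from`, and `valid_to` fields, and the sample data are hypothetical, and the sketch assumes half-open validity intervals with `None` marking the current version.

```python
from datetime import date

def as_of(versions, key, on_date):
    """Return the version of `key` that was valid on `on_date`, or None.

    Assumes each version is valid for the half-open interval
    [valid_from, valid_to), and that valid_to is None for the current row.
    """
    for v in versions:
        if v["customer_id"] != key:
            continue
        starts_before = v["valid_from"] <= on_date
        ends_after = v["valid_to"] is None or on_date < v["valid_to"]
        if starts_before and ends_after:
            return v
    return None

# Hypothetical SCD2 history for one customer.
versions = [
    {"customer_id": 1, "tier": "silver", "valid_from": date(2023, 1, 1), "valid_to": date(2024, 1, 1)},
    {"customer_id": 1, "tier": "gold", "valid_from": date(2024, 1, 1), "valid_to": None},
]

mid_2023 = as_of(versions, 1, date(2023, 6, 15))   # falls in the first interval
boundary = as_of(versions, 1, date(2024, 1, 1))    # boundary date belongs to the newer row
too_early = as_of(versions, 1, date(2022, 12, 31)) # before any version existed
```

The half-open interval is a design choice: it guarantees that a boundary date matches exactly one version, which avoids duplicated rows when the same condition is used as a join predicate.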

In Databricks notebooks, the find and replace functionality doesn't appear to be saving the replaced text. When I use find and replace, it does initially appear to work correctly (I see that the highlighted text is replaced). However, when I sc...

Hi @milan2, Thanks for raising this. What you are describing sounds like the text is being visually replaced in the editor, but the change is not actually being persisted. We have also seen a recent report of similar behaviour where using the browser...

Excel suddenly started automatically formatting data (converting everything to text) when loading from the new Azure Databricks Excel plug-in. I can still see my default formatting in the Databricks sideload. Just wondering if this behaviour was caused...

Hi, I just checked and this is a known regression on our side. The engineering team is working on a fix as we speak and is aiming to get it out this week. Apologies for any issues it's causing you. Thanks, Emma

Hi, I am using Databricks Runtime 17.3.x-scala2.13, but I am unable to create Python stored procedures (functions are possible, but they don't support a Spark session like below). Any thoughts or help would be much appreciated. [INVALID_STATEMENT_OR_CLAUSE]...

Hi, as others have said, stored procedures don't currently support Python. You can either create the stored procedure in SQL using window functions and DESCRIBE HISTORY, or put it into a notebook and not have stored procedures. The video is about exp...

| User | Count |
|---|---|
| | 1901 |
| | 1022 |
| | 930 |
| | 479 |
| | 356 |