- 34946 Views

- 22 replies

- 47 kudos

Databricks Announces the Industry’s First Generative AI Engineer Learning Pathway and Certification

Today, we are announcing the industry's first Generative AI Engineer learning pathway and certification to help ensure that data and AI practitioners have the resources to be successful with generative AI. At Databricks, we recognize that generative ...

- 47 kudos

Dear Certifications Team, I have completed the full Generative AI Engineering Pathway and received the module-wise knowledge badges, but I didn't receive the overall certificate mentioned in the description, which is Generative AI Engineer with one star. Req...

- 44 Views

- 0 replies

- 0 kudos

MCPs not working properly

Is anyone else facing the same issue? Basically, we created an MCP to use OpenMetadata functionality. It works fine in AI Playground and in Databricks Genie (previously Databricks One). But when I try to add this MCP to Genie Code, it fail...

- 1326 Views

- 2 replies

- 5 kudos

Databricks Genie Code One Month In — What It Means for Us as Data Engineers

As a data engineer, I've been watching Genie Code closely since it went GA last month, and I wanted to share some honest thoughts here today: not a feature dump, but how it's starting to change the way I think about my own role. Databricks recently sha...

- 5 kudos

Very well said. Thanks for sharing your honest experiences!

- 279 Views

- 2 replies

- 1 kudos

Resolved! Hosted gpt-oss endpoint system prompts contain "You have no access to tools"

We are using the foundation model endpoints (provisioned and pay-per-token) for the gpt-oss models for a research project. We have been experiencing consistent tool call failures: gpt-oss-20b was failing ~1 out of every 12, while gpt-oss-120b was fai...

- 1 kudos

Hi @satusky, I'm not an expert in this area, but after some internal research, I wouldn't treat a leaked system-prompt snippet as a product issue related to tool use. I can’t comment on the exact internal prompt template for a specific invocation, bu...

- 193 Views

- 2 replies

- 0 kudos

Best Approach for Per-User Authentication with Databricks in an Agent-Based System

Hello everyone, I have built an ADK-based agent that connects to Databricks and can retrieve various information. However, I’m trying to design a secure way to authenticate each user individually when they interact with the agent. So far, I see two pos...

- 0 kudos

Hello @Vamsi3757, This is an excellent architecture question. You are completely right to avoid PATs (due to lifecycle/security risks) and Service Principals (which mask individual user identities and make Unity Catalog audit logging difficult). To s...

- 372 Views

- 3 replies

- 2 kudos

Resolved! Genie UI MCP Server Connection Issue

Hi community, I ran into an issue while connecting a third-party MCP server to Genie's general chat UI and wanted to check if anyone else has seen this. The connection fails immediately during the initial MCP handshake with: `TypeError: unhashable type:...

- 2 kudos

Hi @Bihaag_N, From what I can gather, I couldn't find this exact TypeError: unhashable type: 'list' in the public docs, and I also don’t see any documented connection-side requirement stating that third-party MCP manifests need list fields normalised...
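For readers unfamiliar with the error itself, here is a minimal sketch of how Python in general raises `TypeError: unhashable type: 'list'` and how converting list fields to tuples avoids it. It illustrates the language behaviour only and makes no claim about what Genie does internally; the helper names `to_hashable` and `is_hashable` and the sample `scopes` field are hypothetical.

```python
from typing import Any


def to_hashable(value: Any) -> Any:
    """Recursively convert lists into tuples so the value can be hashed."""
    if isinstance(value, list):
        return tuple(to_hashable(item) for item in value)
    return value


def is_hashable(value: Any) -> bool:
    """Return True if hash() accepts the value, False if it raises TypeError."""
    try:
        hash(value)
    except TypeError:
        return False
    return True


# Hypothetical manifest field: a list cannot be a dict key or a set member.
scopes = ["read", "write"]
try:
    seen = {scopes}  # raises TypeError: unhashable type: 'list'
except TypeError as exc:
    print(f"failed as expected: {exc}")

# After conversion, the same data can be placed in a set.
seen = {to_hashable(scopes)}
```

Whether a given MCP client actually needs such normalisation depends on its implementation, which the thread does not show.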

- 315 Views

- 2 replies

- 1 kudos

Resolved! How does Genie look under the hood

Hi everybody, I've been using Genie for some time, and it is really great. To improve our setup before recommending it to more users within our company, I need more insight into Genie, including but not limited to: Is it correct that the “thinking” part is...

- 1 kudos

Hi @Ashwin_DSA, thanks for your response. Regarding the performance issue: the SQL is fast, but the end-to-end response is still slow, so I assume the bottleneck is Genie itself. I also resized the space in terms of the number of data sets added and improve...

- 333 Views

- 3 replies

- 1 kudos

Resolved! Can Genie Code be accessed via a Databricks app or an external app, or is it confined to the Workspace UI?

Hi, can Genie Code be accessed via a Databricks app or an external app, or is it confined to the Workspace UI only?

- 1 kudos

The question raised by @Gautam2809 was about Genie Code, specifically whether you can use it outside the UI. As of now, there is no such option.

- 353 Views

- 1 reply

- 0 kudos

Resolved! Genie Agent Mode Visibility vs Standard Mode Monitoring

In standard Genie Space chat, space managers can use the Monitoring tab to review prompts/conversations. However, in Agent Mode, we see the warning: “Genie agent responses may contain results obtained using other users’ credentials and are hidden from...

- 0 kudos

Answers to your questions: Why Agent Mode is treated differently: Because Agent Mode can generate synthesized text/report answers from multi-step reasoning, and internal product guidance says those answers may contain data outside the reviewing manage...

- 249 Views

- 1 reply

- 1 kudos

Genie Code inline execution is very slow

Hi everyone, I’ve noticed that running inline code in Genie Code has been taking a long time, even for simple tasks. The only workaround I found so far was to explicitly instruct Genie to create notebooks in my personal folder and run the code there in...

- 1 kudos

Hi @lennonantonyy, Thanks for raising this. Yes, I've seen similar reports from others as well. A few people have run into notebook slowness/browser memory pressure when Genie Code is open, including lag while typing and generally poorer performance ...

- 527 Views

- 2 replies

- 0 kudos

Resolved! MLflow tracking from Azure Container Instance

Hi everyone, we're building a voice chatbot for a customer using a mix of technologies: Databricks, Azure AI Foundry, and a few external containerized services. Currently, we're tracking requests and logs via Lakebase with custom traces, but I'm now e...

- 0 kudos

Similar response to the one from WorksBuddy. Short answer: yes, it's supported, and there's a specific Databricks guide for your case. The tutorial you found is for local/IDE; the production-container equivalent is here: Trace agents deployed out...

- 214 Views

- 1 reply

- 0 kudos

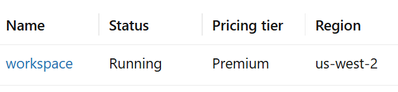

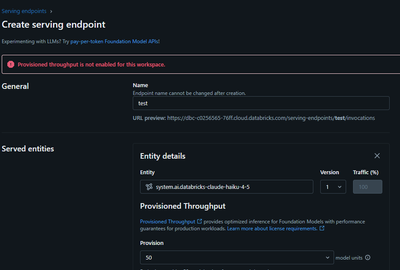

Provisioned throughput is not enabled for this workspace

I am seeing two related issues with Databricks-hosted Claude models in my workspace. Workspace details: Cloud: AWS; Region: us-west-2; Tier: Premium PAYG; Serverless compute: enabled; Unity Catalog/metastore: configured; Compliance security profile...

- 0 kudos

Hi @andr11v, What you are seeing appears to be two separate issues rather than a single root cause. For the pay-per-token issue, the regional support table shows that Claude models are supported in AWS us-west-2, and the docs also say those models ar...

- 329 Views

- 1 reply

- 1 kudos

Resolved! Improving Genie Space via text instructions

Dear all, I'm trying to improve my Genie Space with some text instructions, but very often Genie does not use them. If I prompt "Why have you not considered my instructions" after it has answered a question, it realizes that it forgot them. But this is too late...

- 1 kudos

Hi @michael365, There is no documented hard rule that "general instructions must be 20 lines or less." Databricks guidance is to keep text instructions small, focused, and well-organised, because long or overly broad instructions can become less effe...

- 349 Views

- 1 reply

- 2 kudos

Resolved! Genie Space API – Inspect Mode Limitation

Hi everyone, Is Inspect mode supported when using the Genie Space API? If not, is there any plan to support it in the future? I notice a significant difference in answer quality when using Genie with Inspect mode enabled and disabled in the Databricks UI...

- 2 kudos

Hi @lej6, For now, inspection is not supported through the Genie Space API. I guess it will be added in the future, though. If my answer was helpful, please consider marking it as the accepted solution. https://docs.databricks.com/aws/en/genie/conversation-api#...

- 311 Views

- 1 reply

- 1 kudos

Resolved! Facing issue while opening Genie Code

Hi, For the past 3-4 days, I have not been able to open the Genie Code AI assistant in the Databricks workspace. Earlier, it was working fine and was quite helpful for code development. I am getting the error message shown below (screenshot pasted). I ...

- 1 kudos

Hi @ashdc2026, Check the thread below. Also try deleting cookies. If that doesn't help, use the inspect tool and attach a more detailed error message: Solved: Not able to access Databricks AI assistant or crea... - Databricks Community - 147951

- 312 Views

- 1 reply

- 2 kudos

Resolved! OAuth authentication is required for MCP servers hosted on Databricks Apps

Hi all. I'm deploying an agent with a custom MCP using Databricks. Unfortunately, when I try to test the MCP connectivity, I receive this error: OAuth authentication is required for MCP servers hosted on Databricks Apps. Your current authentication method...

- 2 kudos

I found the issue: I had missed creating the OAuth token in Identity & user. I was using the SP secret instead.
