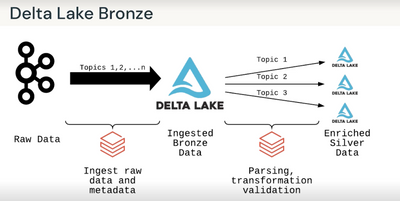

In "Advanced Data Engineering with Databricks", the section on Bronze Ingestion Patterns mentions that workspaces are limited to 5000 jobs triggered per hour. As a solution, it suggests multiplexing the streams into a single bronze table and then using subsequent processing to split the data out into individual tables.

Doesn't this just add one job to whatever the job count would have been otherwise? In the above example, it would have taken three jobs to ingest data to three tables, but instead we've now got four jobs: one to ingest to the multiplexed table and three others to split it out again.

What am I missing that makes this a suitable pattern for high job count environments?

Edit: It's late. It's saving job runs because only one job is required to populate the bronze layer.
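
To convince myself, here is a toy sketch in plain Python (not Spark, and not the course's code) of how I understand the pattern. The topic names, the `raw_events` data, and both helper functions are made up for illustration. It only models the bronze side: one ingestion pass lands every source in a single table with a `topic` column, and the split happens afterwards.

```python
from collections import defaultdict

# Hypothetical raw events from three sources, each tagged with its topic.
raw_events = [
    {"topic": "orders", "value": '{"id": 1}'},
    {"topic": "clicks", "value": '{"page": "/home"}'},
    {"topic": "users", "value": '{"name": "a"}'},
    {"topic": "orders", "value": '{"id": 2}'},
]


def ingest_multiplexed(events):
    """One ingestion job: append every event, unparsed, to a single bronze table."""
    return [dict(e) for e in events]


def split_by_topic(bronze):
    """Downstream processing: fan the bronze table out into per-topic tables."""
    tables = defaultdict(list)
    for row in bronze:
        tables[row["topic"]].append(row["value"])
    return dict(tables)


bronze = ingest_multiplexed(raw_events)
tables = split_by_topic(bronze)

topics = sorted(tables)
singleplex_ingest_jobs = len(topics)  # one ingestion job per source
multiplex_ingest_jobs = 1             # one job, however many sources there are

print(topics, singleplex_ingest_jobs, multiplex_ingest_jobs)
```

The point of the sketch is that the number of bronze ingestion jobs stays at one as sources are added, whereas the one-job-per-source approach grows with the number of sources. How the downstream split is scheduled is a separate question that this toy does not model.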