Hello Experts,

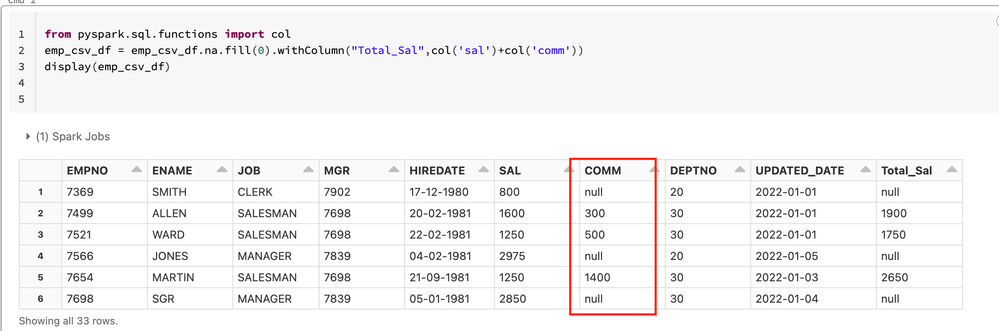

I am unable to replace nulls with 0 in a DataFrame. Please refer to the screenshot.

from pyspark.sql.functions import col

emp_csv_df = emp_csv_df.na.fill(0).withColumn("Total_Sal", col('sal') + col('comm'))

display(emp_csv_df)

Error:

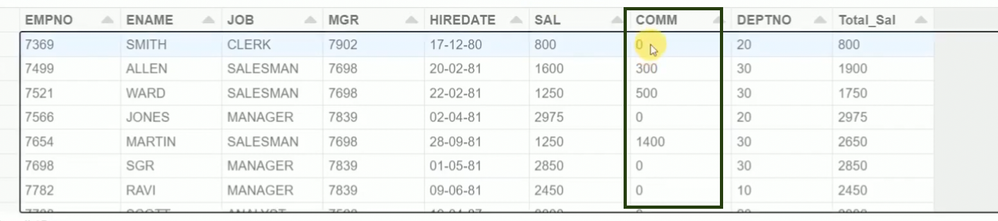

Desired output:

Any suggestions?

Regards,

Rakesh