Run more than nr-of-cores concurrent tasks.

09-20-2021 05:46 AM

We are using the Terraform Databricks provider, which starts a cluster and checks every mount (since there is no mount REST API!). It starts one job per mount, each mount takes 20 seconds to check, and 99.9% of that time is idle waiting. If we could run many jobs concurrently (more than the nr of cores), we should be able to make it faster, but I can't find how to do this. I have tried setting `spark.executor.instances` to 2*cores, but it seems to be ignored.

So, is it possible to set Databricks to use more Spark executors than the nr of cores?
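To illustrate the motivation: when each check is almost entirely idle waiting, running more checks at once than there are cores can shorten the wall-clock time. The following is a minimal sketch in plain Python threads, not Spark and not the Terraform provider. `check_mount` is a hypothetical stand-in that only sleeps, and the mount names and the 0.05 second wait are made up for the example.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def check_mount(name: str, wait_s: float = 0.05) -> str:
    """Hypothetical stand-in for one mount check: nearly all idle waiting."""
    time.sleep(wait_s)
    return name


def check_all(names, max_workers):
    """Run every check on a thread pool; return (results, elapsed seconds)."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the input order of the results.
        results = list(pool.map(check_mount, names))
    return results, time.perf_counter() - start


# Made-up mount names, only for the demonstration.
mounts = [f"mount-{i}" for i in range(16)]

# One worker: the waits happen back to back.
_, serial_s = check_all(mounts, max_workers=1)

# Sixteen workers, likely more than the number of cores: the waits overlap.
results, parallel_s = check_all(mounts, max_workers=16)

print(f"serial: {serial_s:.2f}s, overlapped: {parallel_s:.2f}s")
```

Because sleeping threads do not occupy a core, the thread count here is not tied to the core count. Whether the same kind of oversubscription can be configured for Spark tasks on Databricks is exactly the open question in this thread.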

8 REPLIES

09-20-2021 07:31 AM

Hi @Erik! My name is Kaniz, and I'm the technical moderator here. Great to meet you, and thanks for your question! Let's see if your peers in the community have an answer to your question first. Otherwise I will follow up with my team and get back to you soon. Thanks.

10-05-2021 04:16 AM

Hey @Kaniz Fatma, it does not seem like the community has an answer to this. Maybe you have access to some Databricks engineers who know the answer?

10-05-2021 04:43 AM

Hi @Erik Parmann ,

The community will soon find an answer for you.

I've relayed this to my team. They will get back to you asap.

Thank you for your patience 😊.

10-07-2021 10:52 AM

Hi @Erik Parmann,

Does this old post help? (link)

Also, where did you add the Spark configuration for `spark.executor.instances`? It should be set in the cluster-level settings.

10-09-2021 04:54 AM

Hi @Jose Gonzalez, thanks for the suggestion. But that link asks how to *limit* the nr of executors, so each gets more memory. I want to do the opposite, I want *more* executors per core (or make each executor execute many parallell tasks). The default value for `spark.task.cpus` is `1`, and it does not seem to accept a value like 0.1, then it refuses to start up.

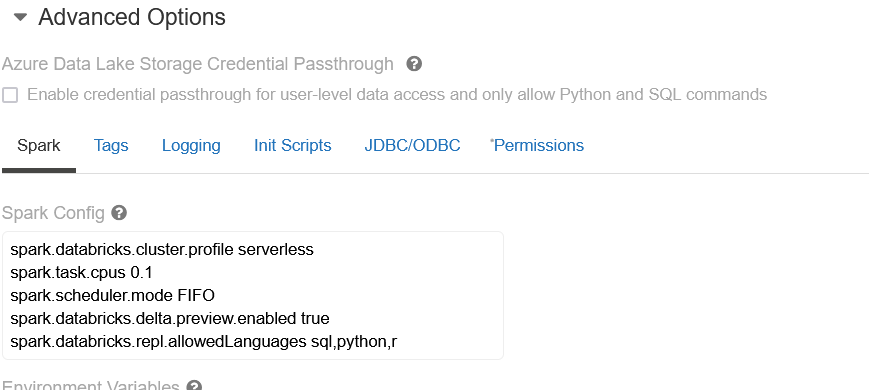

I set the cluster level settings under "Advanced options", below I attached a screenshot of how I tried editing the spark.task.cpus setting:
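The behaviour described in this reply matches how Spark derives task slots: an executor runs at most `spark.executor.cores / spark.task.cpus` tasks at once, and `spark.task.cpus` is read as an integer, so a fractional value such as 0.1 cannot be parsed. A rough sketch of that arithmetic follows; `task_slots` is a hypothetical helper written for illustration, not a Spark or Databricks API.

```python
def task_slots(executor_cores: int, task_cpus: str) -> int:
    """Concurrent task slots for one executor.

    Hypothetical helper mirroring the rule
    slots = executor cores // spark.task.cpus,
    with spark.task.cpus parsed as an integer as Spark does.
    """
    cpus = int(task_cpus)  # raises ValueError for "0.1"
    if cpus < 1:
        raise ValueError("spark.task.cpus must be a positive integer")
    return executor_cores // cpus


# With the default spark.task.cpus of 1, a 4-core executor gets 4 slots.
print(task_slots(4, "1"))

# Raising spark.task.cpus only reduces the number of slots.
print(task_slots(4, "2"))

# A fractional value is rejected, so it cannot push slots above the core count.
try:
    task_slots(4, "0.1")
except ValueError as err:
    print("rejected:", err)
```

Under this rule the slot count can only be raised by increasing the cores the executor believes it has, not by lowering `spark.task.cpus` below 1.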

10-15-2021 03:23 AM

@Jose Gonzalez @Kaniz Fatma: Since there are no more answers, I am starting to believe that maybe it is not possible to get Databricks to use more Spark executors than the nr of cores. Can you verify this for me?

10-18-2021 03:00 PM

Hi @Erik Parmann,

It is possible, but you might also need to enable dynamic allocation at the cluster level to make sure your settings are applied at cluster creation. You can find more details here. As a best practice, we do not recommend changing these configurations because it might create other issues. We recommend using the default options we provide.

10-20-2021 04:32 AM

Thanks for the reply! I understand that in general the default options are good, but in this exact use case (many tiny operations which are each 99.99999% IO bound) they are really suboptimal, and they make the Databricks-with-IaC experience a bit cumbersome.

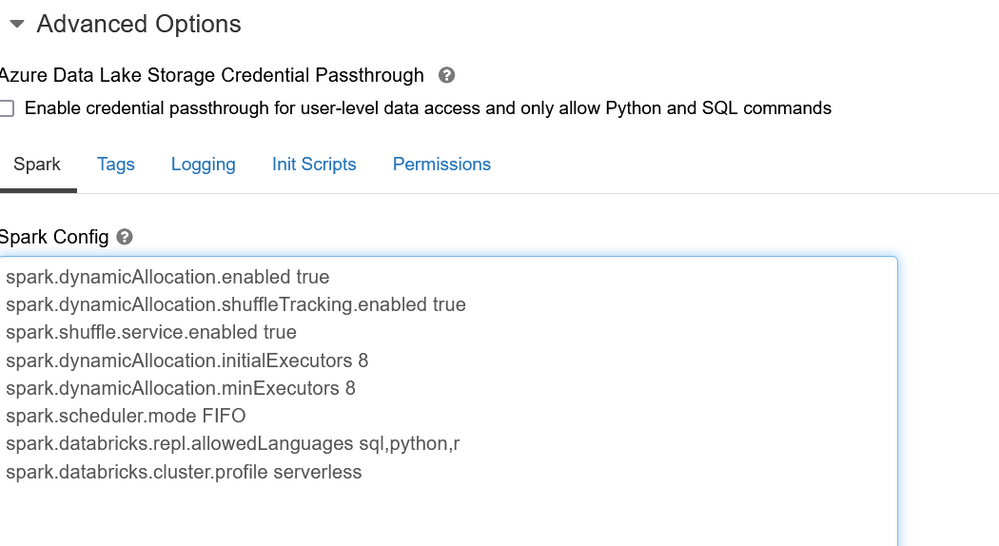

I tried with the following settings in the "Spark Config" section:

spark.dynamicAllocation.enabled true

spark.dynamicAllocation.shuffleTracking.enabled true

spark.shuffle.service.enabled true

spark.dynamicAllocation.initialExecutors 8

spark.dynamicAllocation.minExecutors 8

spark.scheduler.mode FIFO

But on a 4-core machine I am still only able to get 1 executor (as seen in the "Spark Cluster UI" tab), executing up to 4 tasks in parallel. I tried with both a "High concurrency" cluster and a "Standard" cluster. Are you actually able to get many executors running by changing `spark.dynamicAllocation.enabled` and `spark.dynamicAllocation.minExecutors`?
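To put a number on why 4 parallel tasks is limiting here, the following sketch estimates wall-clock time when checks run in waves, one wave per set of free slots. It is an idealized model that ignores scheduling overhead and cluster startup. The 20 seconds per check comes from the original question, while the count of 40 mounts and the helper `estimated_wall_time` are assumptions made only for this example.

```python
import math


def estimated_wall_time(n_checks: int, slots: int,
                        seconds_per_check: float = 20.0) -> float:
    """Idealized wall time: checks run in waves of `slots` at a time."""
    if n_checks < 0 or slots < 1:
        raise ValueError("need n_checks >= 0 and slots >= 1")
    waves = math.ceil(n_checks / slots)
    return waves * seconds_per_check


# Hypothetical workload of 40 mounts at 20 seconds each.
for slots in (4, 8, 40):
    print(f"{slots} slots: {estimated_wall_time(40, slots):.0f} s")
```

In this model, doubling the slots from 4 to 8 halves the estimate, which is the gain being sought by asking for more executors than cores.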
