- 4863 Views

- 2 replies

- 0 kudos

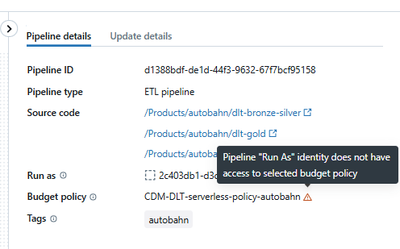

Budget Policy - Service Principals don't seem to be allowed to use budget policies

Objective: Transfer an existing DLT pipeline to a new owner (service principal). Budget policies are enabled. Steps to reproduce: Created a service principal. Assigned it membership in a group that is allowed to use a budget policy. Ensured it has access to the ...

The error message "Pipeline 'Run As' identity does not have access to selected budget policy" typically indicates that, while your service principal is properly configured for general pipeline ownership, it’s missing explicit permission on the budget...

- 4551 Views

- 1 reply

- 1 kudos

Change AWS S3 storage class for subset of schema

I have a schema that has grown very large. There are mainly two types of tables in it. One of those types accounts for roughly 80% of the storage. Is there a way to somehow set a policy for those tables only to transfer them to a different storage cl...

Yes, it's possible to manage storage costs in Databricks and Unity Catalog by targeting specific tables for different storage classes, but Unity Catalog does add complexity since it abstracts the direct S3 (or ADLS/GCS) object paths from you. Here’s ...
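
A minimal sketch of that idea, assuming the large tables are external tables whose `location` (as reported by `DESCRIBE DETAIL`) sits in an S3 bucket you control: build one S3 lifecycle rule per table prefix so only those tables change storage class. The bucket and paths are hypothetical, and the default `STANDARD_IA` class is only an example. Archive classes such as Glacier Flexible Retrieval would leave Delta data files unreadable until restored, so they are a poor fit for tables that are still queried.

```python
from urllib.parse import urlparse


def prefix_from_location(location: str) -> tuple:
    """Split an s3:// table location into (bucket, key prefix ending in '/')."""
    parsed = urlparse(location)
    if parsed.scheme != "s3":
        raise ValueError(f"not an S3 location: {location}")
    prefix = parsed.path.lstrip("/")
    if not prefix:
        raise ValueError("refusing to tier an entire bucket")
    if not prefix.endswith("/"):
        prefix += "/"  # avoid also matching sibling prefixes such as big_a_old/
    return parsed.netloc, prefix


def lifecycle_rules(locations, storage_class="STANDARD_IA", days=30):
    """Build a lifecycle configuration with one transition rule per table location.

    S3 requires objects to be at least 30 days old before a STANDARD_IA transition.
    """
    buckets = set()
    rules = []
    for i, location in enumerate(locations):
        bucket, prefix = prefix_from_location(location)
        buckets.add(bucket)
        rules.append({
            "ID": f"tier-table-{i}",
            "Filter": {"Prefix": prefix},
            "Status": "Enabled",
            "Transitions": [{"Days": days, "StorageClass": storage_class}],
        })
    if len(buckets) != 1:
        raise ValueError("all locations must be in exactly one bucket")
    return buckets.pop(), {"Rules": rules}
```

The resulting dict can be passed to boto3's `put_bucket_lifecycle_configuration(Bucket=bucket, LifecycleConfiguration=config)`. That call replaces the bucket's whole lifecycle configuration, so merge in any existing rules first.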

- 6576 Views

- 2 replies

- 3 kudos

Resolved! Create account group with terraform without account admin permissions

I’m trying to create an account-level group in Databricks using Terraform. When creating a group via the UI, it automatically becomes an account-level group that can be reused across workspaces. However, I’m struggling to achieve the same using Terra...

You cannot create account-level groups in Databricks with Terraform unless your authentication mechanism has account admin privileges. This is a design limitation of both the Databricks API and Terraform provider, which require admin-level permission...

- 4808 Views

- 1 reply

- 0 kudos

"Break Glass" access for QA and PROD environments

We're a small team with three environments (development, qa, and production), each in a separate workspace. Our deployments are automated through CI/CD practices, with manual approval gates for deploying to the qa and production environments. We'd like to ...

Implementing "break glass" access control in Databricks, similar to Azure Privileged Identity Management (PIM), requires creating a process where users operate with minimal/default permissions, but can temporarily elevate their privileges for critica...
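
To make the "temporarily elevate" part concrete, here is a hypothetical in-memory sketch of time-boxed elevation grants: a second person must approve, each grant has a maximum duration, and only unexpired grants count. The group name `prod-breakglass` and the 4-hour cap are assumptions. In practice, a scheduled job would reconcile the real Databricks group membership (for example through the SCIM API) against `active_members` and remove anyone whose grant has expired.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class ElevationGrant:
    user: str
    group: str          # hypothetical privileged group, e.g. "prod-breakglass"
    reason: str
    approved_by: str
    expires_at: datetime


@dataclass
class BreakGlassRegistry:
    max_duration: timedelta = timedelta(hours=4)  # assumed policy cap
    grants: list = field(default_factory=list)

    def request(self, user, group, reason, approved_by, duration, now=None):
        """Record an approved, time-boxed elevation; rejects self-approval and long grants."""
        now = now or datetime.now(timezone.utc)
        if approved_by == user:
            raise PermissionError("self-approval is not allowed")
        if duration > self.max_duration:
            raise ValueError("requested duration exceeds the allowed maximum")
        grant = ElevationGrant(user, group, reason, approved_by, now + duration)
        self.grants.append(grant)
        return grant

    def active_members(self, group, now=None):
        """Users who should currently be in the privileged group."""
        now = now or datetime.now(timezone.utc)
        return {g.user for g in self.grants if g.group == group and g.expires_at > now}
```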

- 881 Views

- 1 reply

- 0 kudos

GKE Cluster Shows "Starting" Even After it's turned on

Curious if anyone else has run into this. After changing to GKE-based clusters, they all turn on but don't show as turned on: we'll have it show as "Starting" but be able to see the same cluster in the dropdown that's already active. "Changing" to t...

Yes, others have reported encountering this exact issue with Databricks clusters on Google Kubernetes Engine (GKE): after transitioning to GKE-based clusters, the UI may show clusters as "Starting" even though the cluster is already up and usable in ...

- 920 Views

- 1 reply

- 1 kudos

Power Automate Azure Databricks connector cannot get output result of a run

Hi everybody, I'm using the Azure Databricks connector in Power Automate and trying to trigger a job run and get the result of that single run. The job I created in Databricks runs a notebook that contains a single block of SQL code, and that's the only tas...

Even though your Databricks job only has one task, Power Automate might still treat it as a multi-task job under the hood. That's why you're getting the error when trying to fetch the output directly from the job run. Here's a simple workaround you c...
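
A minimal sketch of the usual workaround, assuming you call the Jobs API 2.1 directly (for example from a Power Automate HTTP action): `runs/get` on the job run returns a `tasks` array, and `runs/get-output` is then called with the task's own `run_id` rather than the parent job run's. The host below is a placeholder.

```python
def task_run_ids(run_get_response: dict) -> dict:
    """Map task_key -> task run_id from a Jobs API 2.1 runs/get response body."""
    return {t["task_key"]: t["run_id"] for t in run_get_response.get("tasks", [])}


def get_output_url(host: str, task_run_id: int) -> str:
    """URL for runs/get-output, which must target the task run, not the parent job run."""
    return f"{host.rstrip('/')}/api/2.1/jobs/runs/get-output?run_id={task_run_id}"
```

For a notebook task, `get-output` returns `notebook_output.result`, which is populated from the value the notebook passes to `dbutils.notebook.exit(...)`.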

- 6375 Views

- 1 reply

- 0 kudos

Using Databricks CLI for generating Notebooks not supported or not implemented

Hi, I'm a data engineer and recently developed a general-purpose analytics notebook template that I would like to become the standard at my company. Next, I created another notebook with a text widget that uses the user input to map the folder ...

The issue you’re facing is common among Databricks users who try to automate notebook cloning via shell commands or %sh magic, only to encounter format loss: exporting via %sh databricks workspace export or related commands typically results in .dbc,...
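
One way around the format loss, sketched under assumptions: export the template in `SOURCE` format rather than `DBC`, fill in the user's folder, and create the new notebook through the Workspace API `POST /api/2.0/workspace/import`, which takes base64-encoded `content` plus `path`, `format`, `language`, and `overwrite`. The `{{FOLDER}}` placeholder and the paths are hypothetical.

```python
import base64


def build_import_payload(template_source: str, target_path: str, folder: str,
                         language: str = "PYTHON") -> dict:
    """Fill a SOURCE-format template and build a body for POST /api/2.0/workspace/import."""
    # "{{FOLDER}}" is a hypothetical placeholder written into the template notebook
    source = template_source.replace("{{FOLDER}}", folder)
    return {
        "path": target_path,
        "format": "SOURCE",      # keeps it a regular notebook instead of a .dbc archive
        "language": language,
        "content": base64.b64encode(source.encode("utf-8")).decode("ascii"),
        "overwrite": False,      # fail instead of silently replacing an existing notebook
    }
```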

- 5362 Views

- 1 reply

- 0 kudos

Bug when re-creating force deleted external location

When re-creating an external location that was previously force-deleted (because it had a soft-deleted managed table), the newly re-created external location preserves the reference to the soft-deleted managed table from the previous external locatio...

Databricks Unity Catalog currently maintains references to soft-deleted managed tables even after the associated external location is force-deleted and re-created with the same name and physical location, causing persistent deletion failures due to l...

- 4587 Views

- 1 reply

- 0 kudos

Streaming job update

Hi! Using bundles, I want to update a running streaming job. All good until the new job gets deployed, but then the job needs to be stopped manually so that the new assets are used and it has to be started manually. This might lead to the job running...

To handle updates to streaming jobs automatically and ensure that new code or assets are picked up without requiring manual stops and restarts, you typically use one of the following approaches depending on your streaming framework and deployment env...
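
A minimal sketch of one such approach, a restart after `databricks bundle deploy`, assuming the streaming job uses a checkpoint location so the new run resumes where the old one stopped. It cancels the job's active runs through the Jobs API 2.1, waits for them to terminate, and then calls `run-now`. `call` is a hypothetical stand-in for whatever authenticated HTTP helper you use.

```python
import time
from typing import Callable

# Hypothetical helper signature: call(method, path, params_or_body) -> parsed JSON dict
ApiCall = Callable[[str, str, dict], dict]

FINAL_STATES = {"TERMINATED", "SKIPPED", "INTERNAL_ERROR"}


def wait_until_terminated(call: ApiCall, run_id: int, sleep=time.sleep,
                          poll_seconds: int = 10, max_polls: int = 90) -> None:
    """Poll runs/get until the run reaches a final life-cycle state."""
    for _ in range(max_polls):
        run = call("GET", "/api/2.1/jobs/runs/get", {"run_id": run_id})
        if run["state"]["life_cycle_state"] in FINAL_STATES:
            return
        sleep(poll_seconds)
    raise TimeoutError(f"run {run_id} did not terminate in time")


def restart_streaming_job(call: ApiCall, job_id: int, sleep=time.sleep) -> int:
    """Cancel active runs, wait for them to stop, then start a run with the new deployment."""
    active = call("GET", "/api/2.1/jobs/runs/list", {"job_id": job_id, "active_only": True})
    run_ids = [r["run_id"] for r in active.get("runs", [])]
    for run_id in run_ids:
        call("POST", "/api/2.1/jobs/runs/cancel", {"run_id": run_id})
    for run_id in run_ids:
        wait_until_terminated(call, run_id, sleep=sleep)
    started = call("POST", "/api/2.1/jobs/run-now", {"job_id": job_id})
    return started["run_id"]
```

Waiting for termination before `run-now` matters because cancellation is asynchronous, and a job limited to one concurrent run would otherwise skip or queue the new run.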

- 7212 Views

- 1 reply

- 0 kudos

DNS resolution across vnet

Hi, I have created a new Databricks workspace in Azure with back-end Private Link. Settings: Required NSG rules - No Azure Databricks Rule. NSG rules for AAD and azfrontdoor were added as per the documentation. Private endpoint with subresource databric...

Based on your description, the error when creating a Databricks compute cluster in Azure with Private Link is likely due to DNS resolution issues between the workspace VNET and the separate VNET hosting your private DNS zone. Even with VNET peering a...
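
A quick way to test that hypothesis, offered as a check rather than a diagnosis: resolve the workspace hostname from a VM in the workspace VNET (or the peered VNET) and confirm that every answer is a private address. A public IP suggests the private DNS zone isn't being used for that lookup. The hostname you pass in is yours to fill in.

```python
import ipaddress
import socket


def resolved_addresses(hostname: str) -> list:
    """All distinct IP addresses the local resolver returns for hostname on TCP/443."""
    infos = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def all_private(addresses) -> bool:
    """True only if there is at least one address and every address is private."""
    return bool(addresses) and all(ipaddress.ip_address(a).is_private for a in addresses)
```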

- 4997 Views

- 1 reply

- 0 kudos

How to install private repository as package dependency in Databricks Workflow

I am a member of the development team in our company, and we use Databricks as a sort of ETL tool. We use Git integration for our program and run Workflows on a daily basis. Recently, we created another company-internal private Git repository and wan...

You can install and use private repository packages in Databricks workflows in a scalable and secure way, but there are trade-offs and best practices to consider for robust, team-friendly automation. Here's a direct answer and a breakdown of solution...
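
A minimal sketch of one option, assuming a GitHub-style HTTPS repository and a personal access token stored in a Databricks secret scope: build a PEP 508 direct-reference requirement that pins a tag or commit. The package name, URL, and ref are hypothetical. Tokens embedded in URLs can leak into logs, so building a wheel in CI and attaching it to the job is often the more robust route.

```python
from urllib.parse import quote


def private_requirement(package: str, repo_https_url: str, token: str, ref: str) -> str:
    """PEP 508 direct reference to a private Git repo, pinned to a tag, branch, or commit."""
    if not repo_https_url.startswith("https://"):
        raise ValueError("expected an https:// repository URL")
    host_and_path = repo_https_url[len("https://"):]
    # URL-encode the token so characters like '/' or '@' don't break the URL
    return f"{package} @ git+https://{quote(token, safe='')}@{host_and_path}@{ref}"
```

In a notebook, the token could come from `dbutils.secrets.get(scope="<scope>", key="<key>")` and the resulting string be installed with `subprocess.check_call([sys.executable, "-m", "pip", "install", req])`; note that this installs on the driver only.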

- 5154 Views

- 1 reply

- 0 kudos

Why is writing direct to Unity Catalog Volume slower than to Azure Blob Storage (xarray -> zarr)

Hi, I have some workloads where I need to export an xarray object to a Zarr store. My UC volume is using ADLS. I ran a simple benchmark and found that the UC Volume is considerably slower. a) Using an fsspec ADLS store pointing to the same containe...

Writing directly to a Unity Catalog (UC) Volume in Databricks is often slower than writing to Azure Blob Storage (ADLS) using an fsspec-based store, especially for workloads exporting xarray objects to Zarr. This performance gap has been noted and di...
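
If the slowdown comes from Zarr issuing many small, latency-bound file operations through the `/Volumes` path (an assumption worth verifying), one workaround to benchmark against the direct write is to build the store on local disk first and then copy the finished files into the volume with a thread pool, so per-file latency overlaps. The volume path below is a placeholder.

```python
import shutil
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def copy_tree_parallel(src: Path, dest: Path, workers: int = 16) -> int:
    """Copy every file under src to dest using a thread pool; returns the file count."""
    files = [p for p in src.rglob("*") if p.is_file()]
    for f in files:  # create target directories up front, single-threaded
        (dest / f.relative_to(src)).parent.mkdir(parents=True, exist_ok=True)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() forces evaluation so any copy error is raised here
        list(pool.map(lambda f: shutil.copyfile(f, dest / f.relative_to(src)), files))
    return len(files)


def write_via_local_staging(write_store, volume_dest: str, workers: int = 16) -> int:
    """Call write_store(local_path) to build the store locally, then copy it to volume_dest."""
    with tempfile.TemporaryDirectory() as tmp:
        local_store = Path(tmp) / "store.zarr"
        write_store(str(local_store))
        return copy_tree_parallel(local_store, Path(volume_dest), workers)
```

With xarray this would look like `write_via_local_staging(lambda p: ds.to_zarr(p, mode="w"), "/Volumes/<catalog>/<schema>/<volume>/out.zarr")`.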

- 4779 Views

- 1 reply

- 1 kudos

How to calculate accurate usage cost for a longer contractual period?

Hi Experts! I'm working on providing an accurate total cost calculation (in both DBU and USD) for my team for the whole ongoing contractual period. I've checked the following four options: Account console: Manage account - Usage - Consumption (Legacy): t...

Based on your description, the REST API for billable usage logs (Option 4) is likely the most comprehensive and reliable method for retrieving usage and cost data for the full contractual period, including potentially the missing first two months. Th...
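
A sketch of the bookkeeping around that API, assuming the billable usage CSV has `sku` and `dbus` columns (check the header of your own download) and that you supply your contracted price per DBU for each SKU. The download endpoint takes `start_month` and `end_month` in `YYYY-MM` form, so the first helper lists the months of the contract period; the second totals DBUs per SKU and the resulting cost. Any real prices must come from your contract.

```python
import csv
import io
from collections import defaultdict
from datetime import date


def months_between(start: date, end: date) -> list:
    """Inclusive list of 'YYYY-MM' strings covering start..end."""
    months = []
    y, m = start.year, start.month
    while (y, m) <= (end.year, end.month):
        months.append(f"{y:04d}-{m:02d}")
        y, m = (y + 1, 1) if m == 12 else (y, m + 1)
    return months


def total_cost(csv_text: str, price_per_dbu: dict) -> tuple:
    """Sum DBUs per SKU (assumed 'sku'/'dbus' columns) and price them; returns (dbus_by_sku, cost)."""
    dbus_by_sku = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        dbus_by_sku[row["sku"]] += float(row["dbus"])
    missing = set(dbus_by_sku) - set(price_per_dbu)
    if missing:
        raise KeyError(f"no price for SKUs: {sorted(missing)}")
    cost = sum(d * price_per_dbu[s] for s, d in dbus_by_sku.items())
    return dict(dbus_by_sku), cost
```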

- 4111 Views

- 1 reply

- 1 kudos

Get managedResourceGroup from serverless

Hello, in my job I have a task where I need to modify a notebook so that it gets the environment dynamically. This is how we get it: `dic = {"D":"dev", "Q":"qa", "P":"prod"}`, then `managedResourceGroup = spark.conf.get("spark.databricks.xxxxx")`, then `xxxxx_Index = m...`

To dynamically detect your Databricks environment (dev, qa, prod) in a serverless notebook, without relying on manual REST API calls, you typically need a reliable way to extract context directly inside the notebook. However, serverless notebooks oft...
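
One alternative, sketched under the assumption that the Spark conf isn't available on serverless compute: pass the environment code in as a job parameter and map it with the same dictionary as in the question. The parameter name `env_code` is hypothetical.

```python
ENV_BY_CODE = {"D": "dev", "Q": "qa", "P": "prod"}  # mapping taken from the question


def resolve_env(code: str) -> str:
    """Map a one-letter environment code (case-insensitive) to its environment name."""
    key = code.strip().upper()
    if key not in ENV_BY_CODE:
        raise ValueError(f"unknown environment code {code!r}; expected one of {sorted(ENV_BY_CODE)}")
    return ENV_BY_CODE[key]
```

In the notebook this would be `dbutils.widgets.text("env_code", "D")` followed by `env = resolve_env(dbutils.widgets.get("env_code"))`, with each deployment target setting its own `env_code` job parameter.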

- 4453 Views

- 1 reply

- 0 kudos

Query has been timed out due to inactivity while connecting from Tableau Prep

Hi, we are experiencing a "Query timed out" error while running Tableau flows with connections to Databricks. The query history for the Serverless SQL warehouse initially shows the query as finished, but later the query status changes to "Query has been ...

The "Query has been timed out due to inactivity" error with Tableau flows connected to Databricks Serverless SQL Warehouse is a known and intermittent issue impacting several users, even when the SQL warehouse does not auto-terminate during the proce...

- Access control (1)
- Apache spark (1)
- Azure (7)
- Azure databricks (5)
- Billing (2)
- Cluster (1)
- Compliance (1)
- Data Ingestion & connectivity (5)
- Databricks Runtime (1)
- Databricks SQL (2)
- DBFS (1)
- Dbt (1)
- Delta Sharing (1)
- DLT Pipeline (1)
- GA (1)
- Gdpr (1)
- Github (1)
- Partner (82)
- Public Preview (1)
- Service Principals (1)
- Unity Catalog (1)
- Workspace (2)
