- 170 Views

- 1 replies

- 0 kudos

Databricks permissions management at scale

Working with permissions in Databricks at scale gets tricky pretty fast. A few patterns that keep coming up:

- permissions spread across workspaces, catalogs, and groups
- hard to answer simple questions like “who actually has access to X?”
- lots of manual queryi...

- 0 kudos

Cool solution! A lot of the auditing you have built will actually be available as part of the Governance Hub. It is built on the system tables. It also contains a lot of other cool features that are more in the areas of Data Quality, Classific...

- 294 Views

- 3 replies

- 0 kudos

How to prevent direct workspace changes in Databricks by vendors / external users?

Hi Team, I’m looking for guidance on workspace governance and change control in Databricks, specifically related to vendor access. We recently observed that workspace-level changes seem to be applied directly, and we want to understand how this is happ...

- 0 kudos

Thanks for adding more details. Since PAT and Databricks personnel workspace access are already disabled, I would suggest first reviewing audit logs to identify whether the change was made by a workspace admin, account admin, service principal, Terraf...

- 406 Views

- 1 replies

- 0 kudos

Training

I have signed up for training, but when I log into Databricks it takes me to my Free Edition account and doesn't give me the option to get to my training.

- 0 kudos

I now see the course, but it’s just a video and no meeting to join! Why is this so complicated!!!

- 413 Views

- 2 replies

- 0 kudos

VNet Data Gateway unable to connect to Azure Databricks Serverless SQL via Private Endpoint

I’m facing connectivity issues when trying to connect Microsoft Fabric / Power BI to Azure Databricks Serverless and PRO SQL Warehouse using a VNet Data Gateway. I’m looking for guidance on whether this scenario is supported or if there are known lim...

- 0 kudos

We were able to fix the connectivity issue by correcting the NSG rules between the Azure Databricks private endpoint and the Fabric VNet gateway subnet. However, errors occur during the Power BI service semantic model query refresh using the same Databricks conn...

- 115 Views

- 0 replies

- 0 kudos

How to download Course Invoice from Databricks Academy

I recently bought an instructor-led Databricks Academy course, but I am unable to see the option to download an invoice for my corporate reimbursement! Is anyone aware of how to download it, or facing the same issue? I checked/skimmed all over Databricks Aca...

- 1245 Views

- 4 replies

- 1 kudos

Resolved! DBSQL MCP Server - how to specify compute cluster?

Hi, the DBSQL MCP Server is really cool; however, I am not sure how to connect it to a specific cluster, and I could not find any information on any documentation page. My MCP settings look like this: "databricks-sql-mcp": { "type": "streamable-http",...

- 1 kudos

Thanks @Ashwin_DSA for the solution you suggested here! We have a related situation. We have an AI gateway that brokers connections to that externally hosted Databricks instance. As a result, we need a SQL warehouse to execute queries and can't allocate...

- 352 Views

- 3 replies

- 0 kudos

Databricks Platform Administration Fundamentals

I have enrolled in Databricks Platform Administration Fundamentals, and the demo "Using Databricks Utilities and CLI" requires you to "open this Notebook in your workspace: 1- Using Databricks Utilities and CLI". Where does one obtain this No...

- 0 kudos

Ashwin, thanks for the response... however, unfortunately, I do have a trial, as well as access to multiple corporate workspaces. There are no "recreate steps" in the training: it simply says "now open this notebook", which you need. It first give...

- 491 Views

- 4 replies

- 1 kudos

Serverless Access to Public internet

Hi, I am trying to run notebooks on serverless compute, but I cannot access the public internet. A GET request to google.com fails with "[Errno -3] Temporary failure in name resolution". I checked my admin console network policies and they all wer...

- 1 kudos

Greetings @jpm2617, I did some digging and would like to share my thoughts: @szymon_dybczak nailed the root cause. Your "[Errno -3] Temporary failure in name resolution" error when calling google.com is the classic symptom of a workspace attached to a restr...
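One quick way to confirm this symptom from inside a notebook is to test DNS resolution on its own, before making any HTTP request. This is a minimal sketch using only Python's standard `socket` module; the helper name `can_resolve` is hypothetical, and it only reports whether the name lookup succeeds, not whether a network policy would then allow the connection.

```python
import socket


def can_resolve(hostname: str) -> bool:
    """Return True if DNS resolution for hostname succeeds, else False.

    Hypothetical diagnostic helper. A socket.gaierror means the lookup
    itself failed; on Linux, errno -3 is the "Temporary failure in name
    resolution" error quoted in the thread.
    """
    try:
        # Port 443 is only used to shape the lookup; no connection is opened.
        socket.getaddrinfo(hostname, 443)
        return True
    except socket.gaierror as exc:
        print(f"DNS lookup for {hostname!r} failed: [Errno {exc.errno}] {exc.strerror}")
        return False
```

For example, `can_resolve("google.com")` returning `False` points at name resolution rather than at the HTTP client or the target site.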

- 147 Views

- 0 replies

- 0 kudos

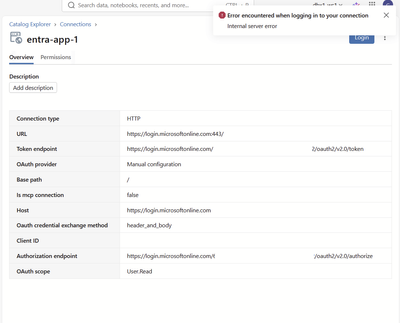

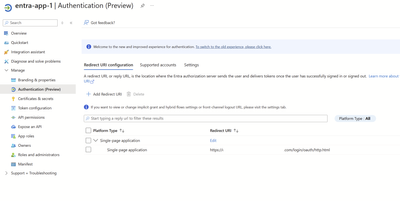

Getting 500 error on UC external HTTP connection to Entra with OAuth User-to-Machine Per User

I am getting a 500 Internal Server Error with a Unity Catalog external HTTP connection to Entra (for accessing the Microsoft Graph API): There isn't any special setup with my Entra app, just the default one with my workspace URL as the redirect URL: Microsoft ...

- 450 Views

- 1 replies

- 0 kudos

Resolved! API does not return Genie space create and last modified dates

I am using the "/api/2.0/genie/spaces" endpoint to retrieve Genie spaces, but it does not return the "created date" or "last modified date". Is there a way to retrieve this information?

- 0 kudos

Hi @Beka, unfortunately, no. Those timestamps aren’t exposed for Genie spaces today. The GenieSpace resource behind both GET /api/2.0/genie/spaces and GET /api/2.0/genie/spaces/{space_id} contains only fields such as space_id, title, description, par...
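For reference, here is a minimal sketch of calling the list endpoint from the question with only the Python standard library. The helper names, the `host`/`token` parameters, bearer-token auth, and the assumption that results come back under a `spaces` key are illustrative assumptions, not confirmed API details. Consistent with the reply, there is no creation or last-modified timestamp to read, so the sketch keeps only `space_id` and `title`.

```python
import json
import urllib.request


def build_list_spaces_request(host: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for /api/2.0/genie/spaces.

    host: workspace URL, e.g. "https://<your-workspace-host>" (placeholder)
    token: access token sent as a bearer token (assumption)
    """
    url = host.rstrip("/") + "/api/2.0/genie/spaces"
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {token}"}, method="GET"
    )


def summarize_spaces(payload: dict) -> list:
    # ASSUMPTION: spaces are listed under a "spaces" key; adjust if your
    # response differs. Pagination, if any, is not handled here.
    return [
        {"space_id": space.get("space_id"), "title": space.get("title")}
        for space in payload.get("spaces", [])
    ]


def list_spaces(host: str, token: str) -> list:
    # Sends the request and parses the JSON body (needs network access).
    with urllib.request.urlopen(build_list_spaces_request(host, token)) as resp:
        return summarize_spaces(json.load(resp))
```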

- 172 Views

- 0 replies

- 0 kudos

Network Check Control Plane Failure- Vnet injection

Hi, I'm receiving the following error when trying to run classic compute: Error message: [details] X_NHC_MULTIPLE_COMPONENTS_SSL_ERROR: Instance failed network health check before bootstrapping with fatal error: X_NHC_MULTIPLE_COMPONENTS_SSL_ERROR 3 ...

- 779 Views

- 3 replies

- 2 kudos

Resolved! I built a free 11-tab Cost Observability Dashboard as a Databricks App — open source

I built Databricks Cost Observability: a full-stack observability dashboard that runs natively as a Databricks App and reads directly from system tables. No data leaves your workspace, and no external BI tools are needed. What it covers across 11 tabs:
• Execut...

- 2 kudos

The main tradeoff: the Azure Cost Management API has a 1-2 day lag, so the data is never truly real-time, but that's fine for a cost observability use case. We can have an ingestion notebook run daily to ingest those costs. Azure Cost Managemen...

- 187 Views

- 0 replies

- 0 kudos

Feature Request: API support for Context-Based Ingress Control IP lists

I know that context-based ingress control is currently a preview feature, but it is an extremely important one. It removes the need for workspace-level IP access lists, making it much easier to govern allowed IPs centrally and preventing workspace-le...

- 738 Views

- 5 replies

- 1 kudos

Databricks integrating with ServiceNow via Lakeflow Connect for data ingestion

We are integrating Databricks with ServiceNow via Lakeflow Connect for data ingestion and are looking for guidance on enforcing integration-user-based data access.

Observed behaviour

U2M OAuth authentication succeeds when ServiceNow access is granted to the works...

- 1 kudos

Hi @emma_s, I’ve reviewed the setup and wanted to clarify the behavior I’m seeing with the ServiceNow connector and U2M OAuth. The ServiceNow connection was created successfully using a U2M OAuth integration user, and that integration user has admin pe...

- 622 Views

- 4 replies

- 0 kudos

Resolved! Cannot enable GPU for serving endpoint

Good morning, I want to create a serving endpoint with a GPU. However, I get a warning "GPU is not enabled for this workspace". Your AI chatbot is telling me I have to contact someone at Databricks. I tried this form - https://www.databricks.com/comp...

- Access control (1)
- Apache spark (1)
- Azure (7)
- Azure databricks (5)
- Billing (2)
- Cluster (1)
- Compliance (1)
- Data Ingestion & connectivity (5)
- Databricks Runtime (1)
- Databricks SQL (2)
- DBFS (1)
- Dbt (1)
- Delta Sharing (1)
- DLT Pipeline (1)
- GA (1)
- Gdpr (1)
- Github (1)
- Partner (81)
- Public Preview (1)
- Service Principals (1)
- Unity Catalog (1)
- Workspace (2)
