Databricks job creator update with API

02-12-2024 12:06 PM - edited 02-12-2024 12:07 PM

Hi team,

Greetings.

Do you know if there is a way to update the creator of a databricks_job using the API? The documentation does not show a "creator" property, and when I tried setting it, the value was not updated in the workspace UI.

The reason is that I would like the same capability we have for clusters, where the owner can be updated. With databricks_job we can't do that.

Note:

I am not referring to the "owner" in the job permissions. I am referring to the "creator" of the job.

Thanks

1 ACCEPTED SOLUTION

02-12-2024 09:25 PM

Hi @danmlopsmaz, thanks for bringing up your concerns. Always happy to help 😁

I understand that you want to change the creator of the job, but at the moment only the owner can be changed, not the creator. However, you should be able to clone the existing job. This replicates all the configs, and the creator name will be updated on the new job.

Kindly refer to this document to copy the job: https://docs.databricks.com/en/archive/dev-tools/cli/jobs-cli.html#create-a-job:~:text=To%20copy%20a....
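For anyone who prefers the REST API over the CLI, here is a rough sketch of the same copy approach: read the job with `jobs/get`, then pass its `settings` to `jobs/create`. The function names, the `" (clone)"` suffix, and the `host`/`token` arguments are my own placeholders, not something from the docs page, so please treat this as an untested starting point. The copy starts with no run history.

```python
import json
import urllib.request


def build_clone_payload(job: dict, name_suffix: str = " (clone)") -> dict:
    """Build a jobs/create request body from a jobs/get response.

    Only the "settings" object is reused; instance fields such as
    job_id and creator_user_name are left out on purpose.
    """
    settings = dict(job["settings"])  # shallow copy, the response is not modified
    settings["name"] = settings.get("name", "Untitled") + name_suffix
    return settings


def clone_job(host: str, token: str, job_id: int) -> int:
    """Copy a job via the Jobs API 2.1 and return the new job_id.

    host is a hypothetical workspace URL, token a personal access token.
    This function needs network access and is not called here.
    """
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    }

    get_req = urllib.request.Request(
        f"{host}/api/2.1/jobs/get?job_id={job_id}", headers=headers
    )
    with urllib.request.urlopen(get_req) as resp:
        job = json.load(resp)

    create_req = urllib.request.Request(
        f"{host}/api/2.1/jobs/create",
        data=json.dumps(build_clone_payload(job)).encode(),
        headers=headers,
        method="POST",
    )
    with urllib.request.urlopen(create_req) as resp:
        return json.load(resp)["job_id"]
```

The new job is created by whoever owns the token, which is why its creator differs from the original job's.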

Please let me know if this helps, and leave a like if it does. Follow-ups are appreciated.

Kudos

Ayushi

4 REPLIES

02-12-2024 02:54 PM - edited 02-12-2024 02:56 PM

Hey @danmlopsmaz

Thanks for bringing your concern to the community.

Unfortunately, it looks like updating the "creator" of a Databricks job through the API is not possible at this time. The Databricks Jobs API and its documentation do not currently expose any such functionality, as you have probably already seen. Additionally, attempting to set the "creator" field while updating the job will not change the creator displayed in the workspace UI.

While this may seem like a limitation compared to updating cluster ownership, I think there could be good reasons behind the choice:

- The "creator" field serves as an audit trail, providing an immutable record of who initially created the job.

- Making it editable could introduce security vulnerabilities, potentially allowing unauthorised users to claim ownership of sensitive jobs.

I don't have specific details about your use case, but here are a few alternative approaches I can think of:

1. Leverage permissions and owners:

- Grant appropriate permissions to specific users or groups so they can manage and modify jobs without needing to change the creator.

- Use the "owner" field in job permissions, which controls who can edit and schedule the job.

2. Store the creator information (username, timestamp, etc.) alongside the job metadata in a separate system such as a database or data lake. That way you can track who created each job yourself.

3. Consider using Databricks Workflows, which offer shared ownership and collaborative development on job definitions.

This way, multiple users can contribute to the job while maintaining transparency and accountability.
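For option 1, a minimal sketch of changing the job owner through the Permissions API could look like the following. I'm assuming the `PUT /api/2.0/permissions/jobs/{job_id}` endpoint with the `IS_OWNER` and `CAN_MANAGE` permission levels. The helper names and the `host`/`token` arguments are made up for illustration. As far as I understand, `PUT` replaces the whole permission list on the job, so include every entry you want to keep.

```python
import json
import urllib.request
from typing import List, Optional


def build_owner_acl(new_owner: str, managers: Optional[List[str]] = None) -> dict:
    """Build a permissions request body with one owner and optional managers."""
    acl = [{"user_name": new_owner, "permission_level": "IS_OWNER"}]
    for user in managers or []:
        acl.append({"user_name": user, "permission_level": "CAN_MANAGE"})
    return {"access_control_list": acl}


def set_job_owner(
    host: str,
    token: str,
    job_id: int,
    new_owner: str,
    managers: Optional[List[str]] = None,
) -> dict:
    """Send the ACL to the workspace. Needs network access; not called here.

    host is a hypothetical workspace URL, token a personal access token.
    """
    req = urllib.request.Request(
        f"{host}/api/2.0/permissions/jobs/{job_id}",
        data=json.dumps(build_owner_acl(new_owner, managers)).encode(),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="PUT",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```

This only changes the owner in the job permissions. The creator shown in the UI stays the same.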

Any follow-ups are highly appreciated. Thanks for taking the time to read.

Leave a like if this helps! Kudos,

Palash

02-13-2024 03:25 AM - edited 02-13-2024 03:27 AM

Hi @Ayushi_Suthar thanks for such a quick response.

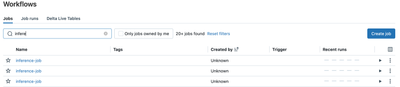

I am aware that we can clone the job to update the creator. The reason why I don't do that is because I want to keep the job "runs". We have a KPI for availability based on the number of successfully job runs vs fails job runs and if I clone the job, I will lose all that information. Also, when someone is not longer working in the Databricks workspace we have this "Unknown" property in the UI:

02-13-2024 10:56 PM

Hey @danmlopsmaz

Regarding your concern about maintaining the KPI: I agree that if you clone or re-create the job, you will lose the run history. One way I can think of is to capture the details of your runs in a separate table. See my response above, where I explain this further. Due to some technical issues my post was not published yesterday and is only showing up now.
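To make the separate-table idea a bit more concrete, here is a small sketch that flattens run records into rows and computes the success-versus-failure ratio. I'm assuming the records have the shape returned by `GET /api/2.1/jobs/runs/list` (a `state` object with a `result_state` such as `SUCCESS` or `FAILED`). The function names and the sample runs are invented for illustration, and how you persist the rows (Delta table, database, etc.) is up to you.

```python
from typing import Iterable, List, Optional


def run_to_row(run: dict) -> dict:
    """Keep only the fields needed for the KPI from one run record."""
    state = run.get("state", {})
    return {
        "job_id": run.get("job_id"),
        "run_id": run.get("run_id"),
        "start_time": run.get("start_time"),
        "end_time": run.get("end_time"),
        "result_state": state.get("result_state"),  # None while still running
    }


def availability(rows: Iterable[dict]) -> Optional[float]:
    """Share of successful runs among runs that ended in SUCCESS or FAILED."""
    finished: List[dict] = [
        r for r in rows if r["result_state"] in ("SUCCESS", "FAILED")
    ]
    if not finished:
        return None  # nothing to measure yet
    succeeded = sum(1 for r in finished if r["result_state"] == "SUCCESS")
    return succeeded / len(finished)


# Made-up sample data, not real run output.
sample_runs = [
    {"job_id": 7, "run_id": 1, "state": {"result_state": "SUCCESS"}},
    {"job_id": 7, "run_id": 2, "state": {"result_state": "SUCCESS"}},
    {"job_id": 7, "run_id": 3, "state": {"result_state": "FAILED"}},
    {"job_id": 7, "run_id": 4, "state": {"result_state": "SUCCESS"}},
    {"job_id": 7, "run_id": 5, "state": {"life_cycle_state": "RUNNING"}},
]
rows = [run_to_row(r) for r in sample_runs]
print(availability(rows))  # 0.75
```

If the rows are stored under a stable key such as the job name rather than the `job_id`, the history can carry over when a job is cloned and gets a new id.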

Let us know if this helps. Follow-ups are appreciated.

Leave a like if this helps! Kudos,

Palash
