Building a Metadata Table-Driven Framework Using LakeFlow Declarative (Formerly DLT) Pipelines

07-22-2025 02:49 AM - edited 07-22-2025 03:08 AM

Introduction

Scaling data pipelines across an organization can be challenging, particularly when data sources, requirements, and transformation rules change constantly. A metadata table-driven framework built on LakeFlow Declarative Pipelines (formerly DLT) enables teams to automate, standardize, and scale pipelines rapidly, with minimal code changes. Let’s explore how to architect and implement such a framework.

What Is a Metadata Table-Driven Framework?

A metadata table-driven framework externalizes the configuration of your data pipelines—such as source/target mappings, transformation logic, and quality rules—into metadata tables. Pipelines are designed generically to consume this metadata, making onboarding new datasets or changing business rules a matter of updating tables—not redeploying code.
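
To make the idea concrete, here is a minimal sketch under stated assumptions: the metadata columns (`dataset`, `source_path`, `format`, `target`) are hypothetical, and `table` is a stand-in decorator that only records definitions so the example runs anywhere. In a real pipeline that role would be played by the `dlt.table` decorator. The generic code never names a specific dataset; everything dataset-specific lives in the rows.

```python
from typing import Callable, Dict, List

# Hypothetical metadata rows; in practice these would be read from a metadata table.
METADATA: List[dict] = [
    {"dataset": "orders", "source_path": "/landing/orders",
     "format": "json", "target": "bronze.orders"},
    {"dataset": "customers", "source_path": "/landing/customers",
     "format": "csv", "target": "bronze.customers"},
]

# Stand-in registry so the sketch runs without Databricks.
REGISTRY: Dict[str, Callable[[], dict]] = {}


def table(name: str):
    """Stand-in decorator: records the function under the given table name."""
    def decorator(fn):
        REGISTRY[name] = fn
        return fn
    return decorator


def register_dataset(row: dict) -> None:
    """Factory: binds one metadata row to one table definition.

    Using a function per row avoids Python's late-binding closure pitfall
    that occurs when decorated functions are defined directly inside a loop.
    """
    @table(name=row["target"])
    def load() -> dict:
        # A real implementation would return a DataFrame read from row["source_path"].
        return {"read_from": row["source_path"], "format": row["format"]}


for row in METADATA:
    register_dataset(row)

print(sorted(REGISTRY))
```

Onboarding a third dataset in this sketch means appending one more row to `METADATA`; `register_dataset` and the loop stay unchanged.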

Why Use LakeFlow Declarative (Formerly DLT)?

DLT, part of Databricks, offers a declarative framework for building reliable and scalable data pipelines, supporting batch and streaming data. Combined with a metadata-driven approach, DLT provides:

- Automation of repeatable ingestion and transformation patterns.

- Data quality enforcement through built-in expectations.

- Scalability and maintainability of complex Lakehouse architectures.
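
As an illustration of how quality rules held in metadata might feed the built-in expectations, the sketch below groups rule rows by the action to take on violation. The row layout (`dataset`, `name`, `constraint`, `action`) and the three action labels are assumptions made for this example, not part of the framework described here. The output shape, a dictionary of rule name to SQL constraint, matches what the `dlt.expect_all`, `dlt.expect_all_or_drop`, and `dlt.expect_all_or_fail` decorators accept.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical rule rows as they might be stored in a metadata table.
RULES: List[dict] = [
    {"dataset": "orders", "name": "valid_id",
     "constraint": "order_id IS NOT NULL", "action": "drop"},
    {"dataset": "orders", "name": "positive_amount",
     "constraint": "amount > 0", "action": "warn"},
    {"dataset": "customers", "name": "valid_email",
     "constraint": "email LIKE '%@%'", "action": "fail"},
]

VALID_ACTIONS = {"warn", "drop", "fail"}


def rules_by_action(rules: List[dict], dataset: str) -> Dict[str, Dict[str, str]]:
    """Group one dataset's rules into {action: {rule_name: sql_constraint}}."""
    grouped: Dict[str, Dict[str, str]] = defaultdict(dict)
    for rule in rules:
        if rule["dataset"] != dataset:
            continue
        if rule["action"] not in VALID_ACTIONS:
            raise ValueError(f"Unknown action: {rule['action']}")
        grouped[rule["action"]][rule["name"]] = rule["constraint"]
    return dict(grouped)


orders_rules = rules_by_action(RULES, "orders")
print(orders_rules)
# In a pipeline, each group could be passed to the matching decorator:
#   warn -> dlt.expect_all, drop -> dlt.expect_all_or_drop, fail -> dlt.expect_all_or_fail
```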

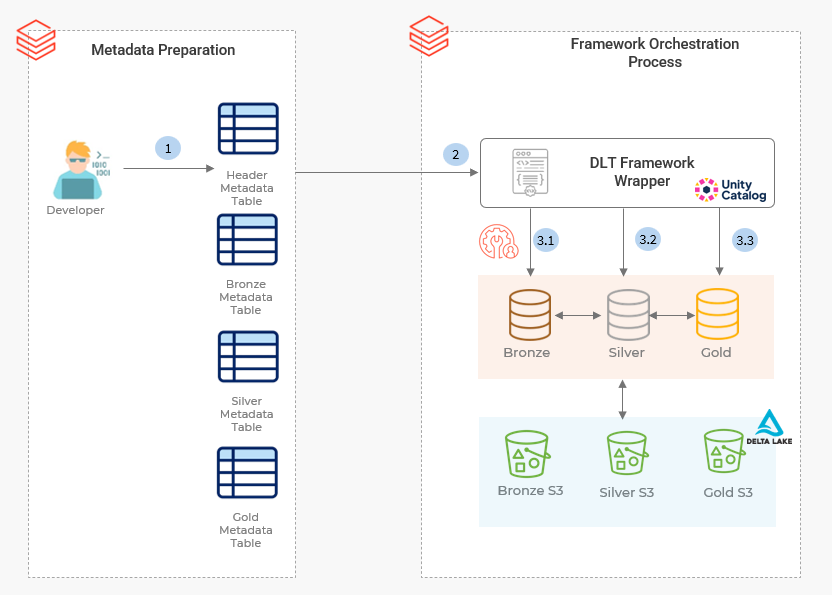

Process Flow:

Key Framework Components

| Component | Purpose |
| --- | --- |
| Metadata Tables | Store pipeline configs: source, target, rules, transformations |
| Generic DLT Pipeline | Reads metadata to build ingestion, validation, and enrichment dynamically |
| Transformation Logic | Parameterized SQL or scripts referenced from metadata |
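
One way to picture "parameterized SQL referenced from metadata" is a query template stored in a row and filled in at run time. The sketch below uses Python's standard `string.Template`; the row layout (`target`, `query_template`, `params`) and the table names are hypothetical.

```python
from string import Template

# Hypothetical silver-layer metadata row holding a parameterized query.
row = {
    "target": "silver.orders_clean",
    "query_template": (
        "SELECT order_id, amount FROM $source "
        "WHERE order_date >= '$start_date'"
    ),
    "params": {"source": "bronze.orders", "start_date": "2025-01-01"},
}


def render_query(metadata_row: dict) -> str:
    """Fill the template; raises KeyError if a placeholder has no value."""
    template = Template(metadata_row["query_template"])
    return template.substitute(metadata_row["params"])


sql = render_query(row)
print(sql)
```

Because this is plain string substitution, it is only appropriate when the metadata tables are trusted and write access to them is controlled.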

How It Works

- Define Metadata Structure: Create tables that capture the required configurations:

  - Control Header Table: unique Logical Flow Group identifier, ingestion pattern, ETL layer, and compute class.

  - Bronze Layer Metadata Table: dataset-level entries for each Flow Group in the Control Header table, containing source object name, type, format, landing path, and data quality rules.

  - Silver Layer Metadata Table: dataset-level entries for each Flow Group in the Control Header table, containing the transformation query, CDC logic, and partitioning/clustering.

  - Gold Layer Metadata Table: dataset-level entries for each Flow Group in the Control Header table, containing business-level aggregates, data partitioning and archival policies, retention, and data security via RLS/CLF.

- Orchestrate with a Generic DLT Framework Wrapper Pipeline

  - Develop a single, parameter-driven DLT pipeline that:

    - Reads pipeline configurations from metadata tables.

    - Dynamically ingests data, applies transformations and validations, and writes outputs.

    - Supports multiple data layers (bronze, silver, gold).

    - Is compatible with batch and streaming sources.

- Process Each Dataset in Its Respective Schema

  - Use common utilities and functions, called from a generic wrapper script, to handle each dataset according to the processing requirements defined for its layer.

  - The processed streaming tables and materialized views are then stored in their corresponding schemas (bronze, silver, and gold), based on the parameters received from the orchestration process.
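
The header/detail relationship between the Control Header table and a layer table can be sketched as follows. SQLite is used purely as a local stand-in for the metadata tables so the example runs anywhere, and every table name, column name, and value is a hypothetical choice for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE control_header (
        flow_group_id     TEXT PRIMARY KEY,
        ingestion_pattern TEXT,
        etl_layer         TEXT,
        compute_class     TEXT
    );
    CREATE TABLE bronze_metadata (
        flow_group_id TEXT REFERENCES control_header(flow_group_id),
        source_object TEXT,
        source_format TEXT,
        landing_path  TEXT,
        dq_rules      TEXT
    );
    INSERT INTO control_header VALUES ('FG_SALES', 'streaming', 'bronze', 'small');
    INSERT INTO control_header VALUES ('FG_HR', 'batch', 'bronze', 'small');
    INSERT INTO bronze_metadata VALUES
        ('FG_SALES', 'orders', 'json', '/landing/orders', 'order_id IS NOT NULL');
    INSERT INTO bronze_metadata VALUES
        ('FG_SALES', 'refunds', 'json', '/landing/refunds', 'refund_id IS NOT NULL');
    INSERT INTO bronze_metadata VALUES
        ('FG_HR', 'employees', 'csv', '/landing/employees', 'emp_id IS NOT NULL');
    """
)


def datasets_for(flow_group_id: str) -> list:
    """Return the bronze dataset entries for one flow group, joined to its header."""
    cursor = conn.execute(
        """
        SELECT b.source_object, b.source_format, b.landing_path, h.ingestion_pattern
        FROM control_header AS h
        JOIN bronze_metadata AS b ON b.flow_group_id = h.flow_group_id
        WHERE h.flow_group_id = ?
        ORDER BY b.source_object
        """,
        (flow_group_id,),
    )
    return cursor.fetchall()


for entry in datasets_for("FG_SALES"):
    print(entry)
```

A generic wrapper would iterate over the rows returned for its flow group and handle each one according to the ingestion pattern recorded in the header.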

Note: For this process, create a separate DLT pipeline for every Logical Flow Group ID listed in the Control Header table, and give each pipeline a unique name that identifies its group. Orchestration can then be driven by Flow Group ID using tools such as Control-M, Airflow, Databricks Workflows, or similar scheduling platforms.
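
A rough sketch of deriving one uniquely named pipeline definition per flow group is shown here. The naming scheme, the `env` prefix, and the `framework.*` configuration keys are assumptions made for the example; the dictionary only loosely mirrors a pipeline settings document with a `name` and a `configuration` map, and how it is submitted to Databricks is out of scope.

```python
from typing import Dict, List

# Hypothetical header rows, one per Logical Flow Group ID.
HEADER_ROWS: List[dict] = [
    {"flow_group_id": "FG_SALES", "etl_layer": "bronze", "compute_class": "small"},
    {"flow_group_id": "FG_HR", "etl_layer": "silver", "compute_class": "medium"},
]


def pipeline_spec(row: dict, env: str = "dev") -> dict:
    """Build a settings dictionary for one flow group's pipeline."""
    return {
        "name": f"{env}_{row['etl_layer']}_{row['flow_group_id'].lower()}",
        "configuration": {
            # Hypothetical keys the generic wrapper would read at run time.
            "framework.flow_group_id": row["flow_group_id"],
            "framework.etl_layer": row["etl_layer"],
        },
    }


def build_specs(rows: List[dict], env: str = "dev") -> Dict[str, dict]:
    """Return {pipeline_name: spec}; fail fast if two groups map to the same name."""
    specs: Dict[str, dict] = {}
    for row in rows:
        spec = pipeline_spec(row, env)
        if spec["name"] in specs:
            raise ValueError(f"Duplicate pipeline name: {spec['name']}")
        specs[spec["name"]] = spec
    return specs


specs = build_specs(HEADER_ROWS)
print(list(specs))
```

Inside the pipeline, the generic wrapper could read such values with `spark.conf.get("framework.flow_group_id")` and use them to select its rows from the metadata tables.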

Benefits of a Metadata-Driven LakeFlow Declarative (Formerly DLT) Framework

- Agility: Quickly onboard new data sources or update pipeline logic by modifying metadata—no code changes required.

- Consistency & Maintainability: Standardize transformations, quality rules, and updates across all datasets via centralized metadata, ensuring uniform processing.

- Scalability: Seamlessly scale to support hundreds of datasets with minimal incremental effort.

- Performance Optimization: Leverage Delta Lake storage together with Databricks’ vectorized Photon engine, where it is enabled, for efficient processing.

- Automation: Achieve built-in task orchestration, dependency management, and automated retries, reducing operational overhead.

- Data Quality & Reliability: Enforce data quality rules, enable native cleansing and deduplication, and benefit from ACID transactions for increased trustworthiness.

- Modularity & Reusability: Build modular, reusable pipeline components for flexible workflow design.

- Advanced Features: Natively support event-driven processing, real-time data quality metrics, visual pipeline DAG representation, and simple configuration for CDC and SCD Type 2.

- Auditability & Lineage: Track pipeline changes and data lineage for compliance and auditing.

4 Replies

07-22-2025 03:41 AM

Wonderful content @TejeshS

Rishabh Pandey

07-25-2025 02:47 AM

Good one Tejesh. Quick intro on DLT meta.

11-04-2025 04:33 AM - edited 11-04-2025 04:35 AM

Helpful article, @TejeshS. I have a question: is it possible to pass parameters from my workflow to the pipeline? If yes, what would be the best approach?

Nagesh Patil

01-29-2026 09:44 PM

Can you please share details on how this can be implemented, step by step, using a sample use case? Also, could you share the Python code that needs to be written for each layer (bronze/silver/gold)?
