- 1439 Views

- 1 replies

- 1 kudos

Terraform support for AI/BI dashboards

AI/BI dashboards can now be managed through Terraform. Dashboard using the `serialized_dashboard` attribute: data "databricks_sql_warehouse" "starter" { name = "Starter Warehouse" } resource "databricks_dashboard" "dashboard" { display_name = "...

If the content of `file_path` changes, Terraform detects no changes. It would be better to check the MD5 of the file so that the resource gets updated.
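The suggestion above amounts to content-based change detection: record a digest of the file and compare it on the next run. A minimal Python sketch of that idea follows. It is not how the Terraform provider works internally, and the names `file_md5` and `has_changed` are hypothetical.

```python
import hashlib
from pathlib import Path


def file_md5(path: str) -> str:
    """Return the hex MD5 digest of the file's bytes."""
    return hashlib.md5(Path(path).read_bytes()).hexdigest()


def has_changed(path: str, recorded_digest: str) -> bool:
    """True when the file's current digest differs from the recorded one."""
    return file_md5(path) != recorded_digest
```

A tool that stores the digest alongside its state can call `has_changed` before each apply and trigger an update only when the file content actually differs.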

- 198 Views

- 0 replies

- 2 kudos

Scaling Databricks Pipelines with Templates & ADF Orchestration

In a Databricks project integrating multiple legacy systems, one recurring challenge was maintaining development consistency as pipelines and team size grew. Pipeline divergence tends to emerge quickly: • Different ingestion approaches • Inconsistent tr...

- 254 Views

- 0 replies

- 1 kudos

Databricks AIBI: Moving from Report Factories to bAIBI

Organizations have built report factories over the last few decades. We have spent decades creating paginated reports and interactive dashboards in various BI tools, yet we face the same bottleneck when the business asks a question, and data team takes...

- 312 Views

- 0 replies

- 2 kudos

Bristol's first ever Databricks Meetup

What an excellent evening at the inaugural Databricks Bristol Meetup! It was great to finally have a Databricks community in Bristol. A massive thanks to iO Associates for pulling this together and also to the awesome speakers. The evening had two g...

- 334 Views

- 0 replies

- 3 kudos

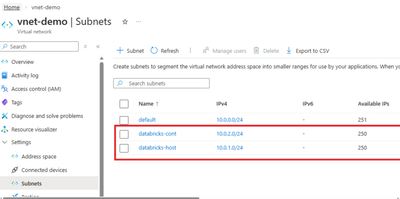

Stream a CockroachDB Changefeed to Databricks (Azure Edition)

Please check https://github.com/rsleedbx/crdb_to_dbx which has the steps and a working notebook. This guide shows how to stream CockroachDB data to Databricks using changefeeds, Azure Blob Storage, Unity Catalog, and Delta Lake. You get one platform...

- 1288 Views

- 1 replies

- 5 kudos

Resolved! Data Driven AI Roadmap Databricks Governance Best Practices Aligned with Gartner's AI Model

Introduction: “AI First” - But Data Always Comes First. I have been working in the data space for close to two decades. My journey started as an ETL developer and gradually evolved into roles spanning data engineering, platform design, and solution archi...

@Saurabh2406 , I really appreciate how you grounded the “AI-first” conversation in the reality that data governance, security, and quality are what actually determine whether AI can scale beyond pilots. The tie-in to Gartner’s AI maturity model, and ...

- 1195 Views

- 1 replies

- 4 kudos

Wait, Did Databricks Just Put Git Inside My Database?

Wait, Did Databricks Just Put Git Inside My Database? If you've been scratching your head at Lakebase's "branching" feature wondering "am I working with a database or GitHub?"—you're not alone. Let me break down what's actually happening here, becaus...

@AbhaySingh , This was a fun read — and a great way to spark discussion about what “Git inside my database” really means in practice. From what I’m seeing in the product world, Databricks isn’t literally putting Git inside the storage engine of your...

- 539 Views

- 0 replies

- 4 kudos

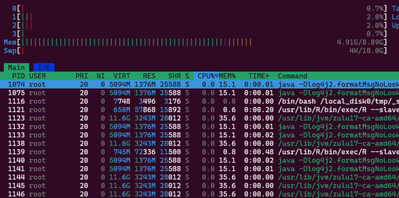

Beyond Notebooks: Why SSH Access to the Databricks Driver Matters

Introduction: Cloud-native data platforms like Azure Databricks are powerful because they abstract away infrastructure so you can focus on data engineering, analytics, and ML workloads. However, there are situations where you may run into issues that r...

- 464 Views

- 0 replies

- 1 kudos

New Feature for developer-productivity

Databricks has added two new features to its UI. They are small but quite effective for developer productivity. 1. Paste images into notebooks: Copy images from your local file system and paste them into markdown cells in Databricks notebooks https:/...

- 4825 Views

- 4 replies

- 9 kudos

Building a Metadata Table-Driven Framework Using LakeFlow Declarative (Formerly DLT) Pipelines

Introduction: Scaling data pipelines across an organization can be challenging, particularly when data sources, requirements, and transformation rules are always changing. A metadata table-driven framework using LakeFlow Declarative (Formerly DLT) enab...

Can you please share the details of how this can be implemented, using a sample use case in a step-by-step process? Also, please share the Python code that needs to be written in each layer (bronze/silver/gold).

- 8112 Views

- 2 replies

- 7 kudos

Turning Databricks into an AI pair‑programmer with Claude‑powered coding agents

Databricks + Claude Code This guide walks through a practical, end‑to‑end setup: installing Claude Code, wiring it to Anthropic models served from Databricks, and configuring authentication so everything “just works” from your terminal and editor. Yo...

I have yet to use Claude within Databricks - Thanks for this @pradeep_singh

- 446 Views

- 0 replies

- 1 kudos

Hive (Dark) Metastore — Azure Databricks Standard Tier Retirement is a great move

Retirement is planned for Azure in Oct 2026. Completed in other clouds in Oct 2025. Data residing in the Hive Metastore is opaque, suffers from low governance and is siloed in legacy technical constructs. The Hive Metastore (HMS) was a technology revol...

- 453 Views

- 0 replies

- 0 kudos

You can use built-in AI functions directly in Databricks SQL

Databricks provides built-in AI functions that can be used directly in SQL or notebooks, without managing models or infrastructure. Example: SELECT ticket_id, ai_generate( 'Summarize this support ticket:\n{{text}}', 'databricks-dbrx-instruct', descript...

- 523 Views

- 2 replies

- 8 kudos

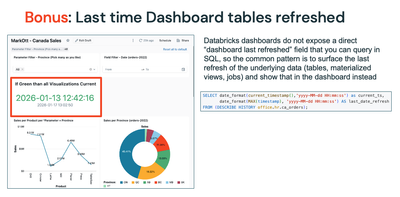

How can I tell my Dashboard visualizations aren't stale?

With AI/BI Dashboards, a best practice is for the creator/owner to 'Schedule' the Dashboard to rerun the underlying datasets when changes have occurred. This ensures the Visualizations are rendered with the freshest data. But users still will questi...

@mark_ott That is a nice idea and quite useful. Quick question: how did you define 'stale' data in your case? What is the threshold at which your 'conditional equation' color-codes the date red? Did you somehow link that to the refresh schedule?
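One possible answer to the threshold question is to tie it to the refresh schedule: treat the data as stale once its age exceeds the schedule interval plus a grace period. The Python sketch below only illustrates that rule. The names `is_stale`, `refresh_interval`, and `grace`, and the 15-minute default, are assumptions and do not come from the original post or from the Dashboard product.

```python
from datetime import datetime, timedelta


def is_stale(
    last_refreshed: datetime,
    now: datetime,
    refresh_interval: timedelta,
    grace: timedelta = timedelta(minutes=15),  # assumed tolerance for late runs
) -> bool:
    """True when the data is older than one refresh interval plus the grace period."""
    return now - last_refreshed > refresh_interval + grace
```

A dashboard could apply the same comparison in a conditional-format expression and color the refresh date red whenever the rule returns true.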

- 1629 Views

- 2 replies

- 6 kudos

Data + AI Is Not the Future at Databricks. It’s the Present.

One thing becomes very clear when you spend time in the Databricks community: AI is no longer an experiment. It is already part of how real teams build, ship, and operate data systems at scale. For a long time, many organizations treated data engineer...

Thanks @Louis_Frolio for your kind words. Happy to contribute here.
