- 1352 Views

- 9 replies

- 6 kudos

Streaming Failure Models: Why "It Didn't Crash" Is the Worst Outcome

Most Databricks streaming failures don't look dramatic. No cluster termination. No red wall of errors. The UI says RUNNING, and your customers start reporting nonsense. I wrote about the incident that changed how we think about streaming jobs on share...

Completely agree, production war stories are worth more than any documentation. I’ve eaten enough teeth on production data lake issues to write my own chapter on what can go wrong, whether that’s deploying Databricks in financial institutions or bein...

- 351 Views

- 0 replies

- 1 kudos

Built a NiFi processor for Zerobus Ingest - gotchas the docs won’t tell you

Zerobus went GA on February 23rd. Connector ecosystem: empty. I run NiFi for security telemetry so I built the processor myself. Apache 2.0, source on GitHub. NiFi uses NAR packaging: each archive gets its own classloader. The Zerobus Java SDK is JNI...

- 857 Views

- 0 replies

- 3 kudos

Databricks Multi Table Transactions - All Data or Nothing

Databricks introduces multi-table transactions, allowing operations across multiple Delta tables to execute as a single atomic unit. Delta Lake has provided ACID guarantees at the table level, but ensuring atomicity across multiple tables previously ...

- 632 Views

- 1 reply

- 2 kudos

Multi-Task on a Shared Cluster — Why That's Also Not Enough

Part 2 of 3: Databricks Streaming Architecture. The instinct after Part 1 was obvious. If running eight queries in one task means one failure can hide while others keep running, split them into multiple tasks. Separate concerns. Give each component it...

Part 1: Streaming Failure Models: Why "It Didn't Crash" Is the Worst Outcome

Part 3: One Cluster per Task — Proven, Ready, and Waiting

- 625 Views

- 0 replies

- 1 kudos

Enterprise Data Platform Architecture on Azure with Databricks

Hi everyone, I recently wrote an article on designing an enterprise-scale data platform architecture using Azure and Databricks. The article covers: • End-to-end architecture for enterprise data platforms • Data ingestion using Azure Data Factory and Kaf...

- 1220 Views

- 0 replies

- 4 kudos

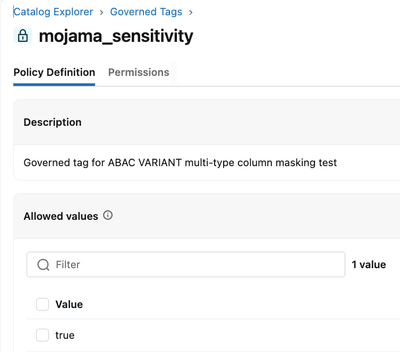

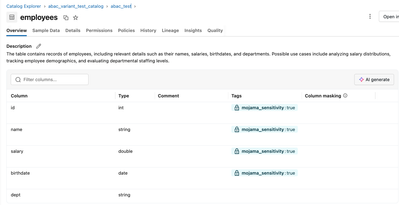

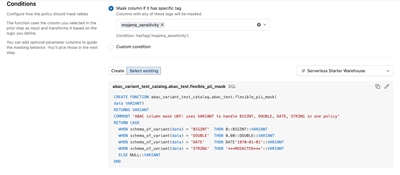

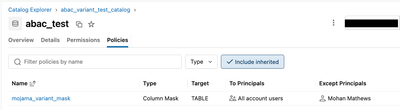

One Policy to Mask Them All: ABAC + VARIANT in Unity Catalog

Databricks ABAC lets you apply a single schema-level policy across columns of any data type — no more managing one mask function per type. Here's how to use the VARIANT data type to make it work. If you've implemented column masking in Unity Catalog,...

- 438 Views

- 0 replies

- 1 kudos

One Cluster per Task — Proven, Ready, and Waiting

Part 3 of 3: Databricks Streaming Architecture. By the end of Part 1 & Part 2, we knew what the real answer was. We just hadn’t committed to it yet. Not because it wouldn’t work. We tested it. We documented it. The code was ready. The answer was one clu...

- 1609 Views

- 0 replies

- 2 kudos

Building a Hybrid Lakehouse: Strategic Use of Apache Hudi and Delta Lake in Databricks

Apache Hudi and Delta Lake are built for different workloads. Hudi is optimised for high-frequency writes; Delta Lake is built for fast, reliable reads. Using one format across the entire data platform forces an unnecessary trade-off: high ingestion c...

- 1104 Views

- 0 replies

- 2 kudos

From SSIS to Databricks: Accelerating ETL Modernization with AI-Powered Utility

As enterprises race toward cloud-native data platforms, modernising legacy ETL pipelines remains one of the most persistent bottlenecks. For organizations that have relied on SQL Server Integration Services (SSIS) for years, rewriting hundreds of pac...

- 496 Views

- 0 replies

- 4 kudos

Why Pipeline Design Matters in Databricks

Hi everyone, I just published a new article on Medium. It explores an important topic: designing reliable data pipelines in Databricks. Many pipelines fail not because of code, but because of design decisions made early in development. In ...

- 5744 Views

- 6 replies

- 8 kudos

The End of an Era - Azure Databricks is Retiring the Standard Tier

Microsoft announced the retirement plan for the Azure Databricks Standard tier. This is vital information for organizations still on the Standard tier. It represents a fundamental architectural realignment that organizations must navigate with precis...

I've created an Azure Resource Graph query that identifies all Standard tier Databricks workspaces in your environment (assuming you have read access): https://github.com/cjpluta/azretirementqueries/blob/main/queries/databricks-standard.kql

- 6371 Views

- 3 replies

- 6 kudos

Resolved! CI/CD on Databricks with Asset Bundles (DABs) and GitHub Actions

Hi all. If you've ever manually promoted resources from dev to prod on Databricks (copying notebooks, updating configs, hoping nothing breaks), this post is for you. I've been building a CI/CD setup for a Speech-to-Text pipeline on Databricks, and I w...

Hi, Great question! Databricks Asset Bundles (DABs) are the recommended approach for CI/CD on Databricks. Here is a comprehensive walkthrough. WHAT ARE DATABRICKS ASSET BUNDLES? DABs let you define your Databricks resources (jobs, pipelines, dashboar...

- 1498 Views

- 2 replies

- 2 kudos

Orchestrating Irregular Databricks Jobs from external source Timestamps

Works for any event-driven workload: IoT alerts, e-commerce flash sales, financial market close processing. Goal: In this project, I needed to start Databricks jobs on an irregular basis, driven entirely by timestamps stored in PostgreSQL rather than by...

@PiotrPustola -- The self-rescheduling orchestrator pattern is a really elegant solution for event-driven workloads that depend on externally managed timestamps. A few thoughts and additions that might help you and others who land on this article: AD...

- 506 Views

- 0 replies

- 3 kudos

Databricks Community Fellows February 2026 Recap - Living the Values, Rising Stars!

The Databricks Community Fellows are internal Brickster experts who volunteer their time to help customers succeed by answering questions in the Databricks Community forums. This month: 92 customer que...

- 2376 Views

- 0 replies

- 1 kudos

Building a Production‑Style SCD Type 2 Dimension on Delta Lake — Using Databricks Community Edition

If you’ve ever needed to maintain historical truth in a data warehouse, you’ve likely bumped into Slowly Changing Dimensions (SCD)—specifically Type 2. In SCD2, we keep every version of a record as it changes over time, so analysis can answer questio...
