- 154 Views

- 1 replies

- 0 kudos

Azure Databricks Exclusive groups

A new permissions primitive to prevent data crossover between use cases hosted on the same Databricks workspace.

Medium link: https://medium.com/@kacn12872/azure-databricks-exclusive-groups-garantir-létanchéité-entre-cas-d-usage-sur-la-lakehouse-e340ce28f332

- 240 Views

- 0 replies

- 1 kudos

Databricks Kafka Multi-Stream Ingestion Architecture: Scaling Beyond Single-Stream Bottlenecks

The Real Problem: Kafka Source Parallelism in Spark. Before discussing foreachBatch, multi-table writes, or any specific use case, it helps to understand the underlying issue. This is a problem with how Spark Structured Streaming consumes from Kafka, a...

- 402 Views

- 1 replies

- 2 kudos

How Databricks Genie Turns Collaboration Tools into AI-Powered Intelligence Platforms

Most organizations don’t have a data problem anymore. They have a data access and usability problem. The dashboards exist. The warehouses are modernized. The lakehouse is running. Yet business teams still wait days for answers because analytics remains...

The Dashboard Era Is Ending. Conversation Is Replacing It.

- 328 Views

- 0 replies

- 1 kudos

Scaling Enterprise AI with Databricks Without Losing Control

Enterprise AI becomes difficult to govern as useful projects accumulate. A machine learning team ships a forecasting model. A data engineering team automates pipeline refreshes. Another group connects a generative AI assistant to internal documentati...

- 267 Views

- 0 replies

- 2 kudos

Live Cost Estimator

I'm building a live cost estimator that doesn't have to wait for the system tables or billing data to update. It gives me immediate cost feedback every second, and I'm sharing the development journey on YouTube. I already have live cost estimates for ...

- 277 Views

- 0 replies

- 0 kudos

Managed vs External Tables in Unity Catalog: The Decision That’s Silently Inflating Your Cloud Bill

Hi everyone, I recently looked into a silent cost driver in many data platforms: the default choice between managed and external tables in Unity Catalog. It is very common for teams to default to external tables, but this choice often leads to acc...

- 272 Views

- 0 replies

- 1 kudos

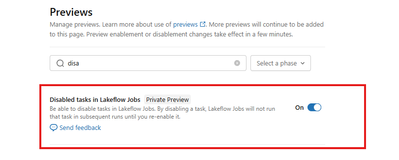

Finally! Databricks lets you disable tasks without hacks

For years, there was no simple way to disable a single task in a Databricks workflow. Let that sink in. If you wanted to skip a task, you had to get creative: add custom flags, wrap logic in if/else blocks, or build your own workaround just to not...

- 8472 Views

- 7 replies

- 13 kudos

Attribute-Based Access Control (ABAC) in Databricks Unity Catalog

What Is ABAC and Why Does It Matter? Attribute-Based Access Control (ABAC) is a data governance model now available in Databricks, designed to offer fine-grained, dynamic, and scalable access control for data, AI assets, and files managed through Data...

I wanted to check if ABAC (Attribute-Based Access Control) policies can be applied to metric views in Databricks. I have successfully applied ABAC policies on a fact table, and they are working as expected. However, when I query a metric view that use...

- 335 Views

- 0 replies

- 1 kudos

DataHacks 2026: University Alliance in Action at UCSD

How a single weekend of hands-on exposure creates the next generation of Databricks advocates. Workshop Lead: Anjana Sriram. Why University Alliances Matter in the Field: Early in my career, the ...

- 1029 Views

- 0 replies

- 1 kudos

Databricks Metric Views - Power BI - BI compatibility mode Removal

BI compatibility mode allows us to query Unity Catalog Metric Views from external BI tools. It allows Databricks to rewrite queries generated by tools like Power BI, ensuring complex metric view logic is executed correctly while appearing as standard...

- 1877 Views

- 3 replies

- 2 kudos

Unity Catalog-only workspace for new Azure Databricks deployments

Big shift coming for Azure Databricks users. Starting 30 September 2026, all new Azure Databricks workspaces will be Unity Catalog only: no DBFS root, no Hive Metastore, no legacy runtimes below 13.3 LTS, and no “old way” of doing things. Disabling DBFS...

Hi guys, this information came in an email sent by Azure Databricks. So there is no direct link, just information sent by Databricks to users.

- 377 Views

- 0 replies

- 1 kudos

How we solved the "18-Hour Running Job" problem with Data-Driven Timeouts

Hi everyone, I recently dealt with a frustrating scenario: a Databricks job that usually takes minutes ran for 18 hours without failing, quietly consuming compute and blocking downstream pipelines. The driver hadn't crashed, and the job hadn't failed; i...

- 494 Views

- 0 replies

- 2 kudos

Lakeflow Spark Declarative Pipelines now decouples pipeline and tables lifecycle

Ever deleted a pipeline… and accidentally wiped out the data with it? Databricks just introduced a beta feature that lets you decouple pipelines from the tables they manage. Lakeflow Spark Declarative Pipelines were designed with a data-as-code approach...

- 2803 Views

- 2 replies

- 1 kudos

Databricks Vector Search Integration: Powering Claude Desktop with MCP

https://medium.com/@bijumathewt/databricks-vector-search-integration-powering-claude-desktop-with-mcp-838c591b50b5

Good question, Edonaire. There are two options here: Continuous and Triggered. Continuous takes care of incremental loads automatically, but it is costly. Triggered can be run by setting up a job with a task that runs the "Refresh" code.

- 558 Views

- 0 replies

- 1 kudos

From Data to Deck — Auto-Generate PowerPoint from Databricks

Creating PowerPoint decks from data is usually manual and repetitive: export charts → take screenshots → format slides → repeat. Not anymore. You can now generate a complete PowerPoint directly from a Databricks table, in one notebook run. What this do...
