Hi @Retired_mod

I did some digging into the messages we are receiving.

By default, Auto Loader creates the Event Grid system topic, the event subscriptions, and the Storage Queue endpoint.

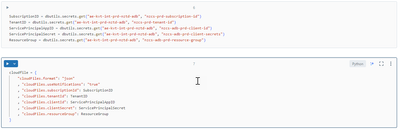

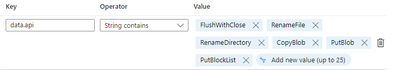

Looking at the queue endpoint, we can see a filter that is set automatically (shown below).

In our test, we removed this filter to see which messages we would receive.

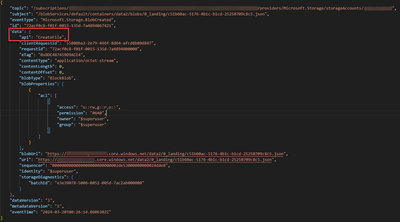

We noticed that the messages arriving in the Storage Queue only have an API tag of “CreateFile”.

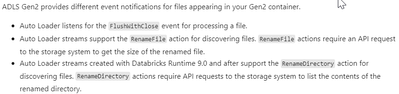
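To check which API tag each queue message carries, a small script can decode the message body and read `data.api` from the Event Grid event. This is a minimal sketch, assuming each Storage Queue message holds a single event in the Event Grid event schema, either as raw JSON or Base64-encoded JSON. The function name `extract_api_tag` and the sample event are hypothetical.

```python
import base64
import json


def extract_api_tag(message_body: str) -> str:
    """Return data.api from an Event Grid event stored as a queue message.

    Assumption: the body is one event object, either raw JSON or
    Base64-encoded JSON (Base64 text never starts with "{").
    """
    text = message_body.strip()
    if not text.startswith("{"):
        # Treat anything that is not raw JSON as Base64-encoded JSON.
        text = base64.b64decode(text).decode("utf-8")
    event = json.loads(text)
    # Blob storage events carry the storage operation name in data.api.
    return event["data"]["api"]


# Hypothetical sample event, shaped like the messages seen in the queue.
sample_event = {
    "eventType": "Microsoft.Storage.BlobCreated",
    "subject": "/blobServices/default/containers/landing/blobs/data/file1.json",
    "data": {"api": "CreateFile"},
}
raw = json.dumps(sample_event)
encoded = base64.b64encode(raw.encode("utf-8")).decode("ascii")

print(extract_api_tag(raw))      # CreateFile
print(extract_api_tag(encoded))  # CreateFile
```

In practice the message bodies would be copied from the queue itself (for example from the Azure Portal); that retrieval step is left out of the sketch.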

However, Auto Loader seems to be listening for different API tags, as shown below.

I think this could be why we don't see any active jobs in the Spark UI: Auto Loader is looking for different API tags than the ones arriving in the queue.
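The suspected mismatch can be illustrated by modelling the subscription's filter as a set of allowed `data.api` values and counting which received tags would pass it. This is a minimal sketch: the values in `FILTER_API_VALUES` are placeholders, not confirmed to match the filter Auto Loader generated, and should be replaced with the values from your own event subscription. The helper name `summarize` and the `received` list are hypothetical. Event Grid documents advanced-filter string comparisons as case-insensitive, so the sketch compares tags that way too.

```python
from collections import Counter
from typing import Iterable

# Assumption: placeholder values for the subscription's filter on data.api.
# Replace them with the values configured on the auto-generated subscription.
FILTER_API_VALUES = {"CopyBlob", "PutBlob", "PutBlockList", "FlushWithClose"}


def summarize(api_tags: Iterable[str], allowed: set[str]) -> dict[str, Counter]:
    """Count which API tags would pass an "is one of" filter on data.api."""
    allowed_folded = {a.casefold() for a in allowed}
    passed, dropped = Counter(), Counter()
    for tag in api_tags:
        # Case-insensitive membership check, mirroring Event Grid string filters.
        bucket = passed if tag.casefold() in allowed_folded else dropped
        bucket[tag] += 1
    return {"passed": passed, "dropped": dropped}


# Hypothetical sample: every message seen without the filter was CreateFile.
received = ["CreateFile", "CreateFile", "CreateFile"]
result = summarize(received, FILTER_API_VALUES)
print(result["passed"])   # Counter()
print(result["dropped"])  # Counter({'CreateFile': 3})
```

If every received tag ends up under `dropped`, the filter would discard all of the queue traffic, which would be consistent with Auto Loader never starting a batch.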

Not sure why this is happening.