Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.


Bigquery - Databricks integration issue.


04-08-2023 09:18 AM

I am trying to load BigQuery data into Databricks from a notebook, following the steps in https://docs.databricks.com/external-data/bigquery.html. I believe I am making a mistake in one of the steps, because I get the error below. I also tried passing the .json key as a file input, but I still get the same error.

I am facing this error:

Py4JJavaError: An error occurred while calling o451.load.

: com.google.cloud.spark.bigquery.repackaged.com.google.inject.ProvisionException: Unable to provision, see the following errors:

1) Error in custom provider, java.lang.IllegalArgumentException: A project ID is required for this service but could not be determined from the builder or the environment. Please set a project ID using the builder.

at com.google.cloud.spark.bigquery.SparkBigQueryConnectorModule.provideSparkBigQueryConfig(SparkBigQueryConnectorModule.java:65)

while locating com.google.cloud.spark.bigquery.SparkBigQueryConfig

Labels: Bigquery, Integration

3 REPLIES

Anonymous


04-09-2023 08:30 AM

@Madhan Potluri:

The error indicates that the connector could not determine a Google Cloud project ID when loading data from BigQuery into Databricks. To resolve this, you can set the project ID explicitly in your code.

Here is an example snippet that sets the project ID when loading data from BigQuery into Databricks:

    import os
    from google.oauth2 import service_account

    # Set the path to your service account JSON key file
    service_account_json = '/path/to/service_account.json'

    # Set the project ID
    project_id = 'your-project-id'

    # Load the service account key
    credentials = service_account.Credentials.from_service_account_file(service_account_json)

    # Set the environment variable for the Google Cloud project ID
    os.environ['GOOGLE_CLOUD_PROJECT'] = project_id

    # Load data from BigQuery to Databricks
    df = spark.read.format('bigquery') \
        .option('table', 'your_dataset.your_table') \
        .option('credentials', credentials) \
        .load()

Make sure to replace your-project-id with the actual project ID of your Google Cloud project, and your_dataset.your_table with the actual BigQuery table you want to load data from. Also, make sure that the path to your service account JSON key file is correct.
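A variant that may be worth trying, sketched under assumptions: as far as the connector documentation describes it, the `credentials` option takes the base64-encoded text of the service account key file rather than a Python credentials object, and `parentProject` names the project to bill. The helper name `build_bigquery_options` is made up for illustration.

```python
import base64
import json


def build_bigquery_options(key_json_path, parent_project, table):
    """Return reader options for the BigQuery connector (hypothetical helper)."""
    with open(key_json_path, "rb") as fh:
        key_bytes = fh.read()
    # Fail early if the file is not valid JSON (for example, a wrong path).
    json.loads(key_bytes)
    return {
        # Base64 text of the whole key file, not a Credentials object.
        "credentials": base64.b64encode(key_bytes).decode("ascii"),
        # Assumption: this option supplies the project ID the connector asks for.
        "parentProject": parent_project,
        "table": table,
    }


# Usage in a notebook where `spark` is already defined (not run here):
# opts = build_bigquery_options("/path/to/service_account.json",
#                               "your-project-id",
#                               "your_dataset.your_table")
# df = spark.read.format("bigquery").options(**opts).load()
```

Returning a plain dict keeps the encoding step separate from the Spark call, so the option values can be inspected before `load()` is attempted.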

I hope this helps! Let me know if you have any further questions.


04-11-2023 12:54 AM

I tried the approach above, but I am still getting the error below.

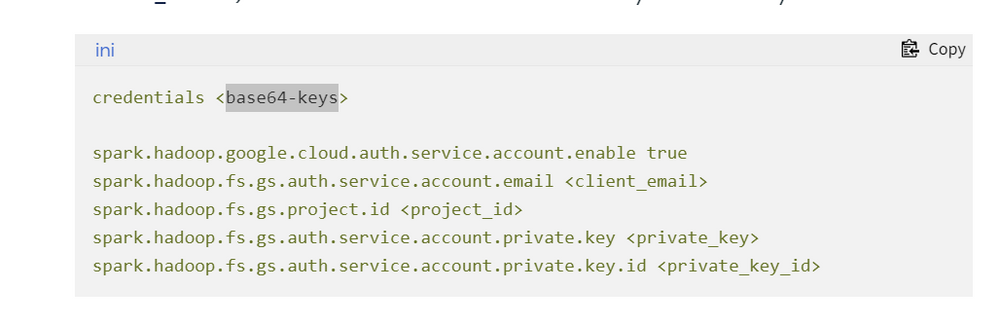

Do we need to encode the key.json file here? If so, could you please outline the steps clearly?

194 def deco(*a: Any, **kw: Any) -> Any:

195 try:

--> 196 return f(*a, **kw)

197 except Py4JJavaError as e:

198 converted = convert_exception(e.java_exception)

/databricks/spark/python/lib/py4j-0.10.9.5-src.zip/py4j/protocol.py in get_return_value(answer, gateway_client, target_id, name)

324 value = OUTPUT_CONVERTER[type](answer[2:], gateway_client)

325 if answer[1] == REFERENCE_TYPE:

--> 326 raise Py4JJavaError(

327 "An error occurred while calling {0}{1}{2}.\n".

328 format(target_id, ".", name), value)

Py4JJavaError: An error occurred while calling o1217.load.

: com.google.cloud.spark.bigquery.repackaged.com.google.inject.ProvisionException: Unable to provision, see the following errors:

1) Error in custom provider, java.lang.IllegalArgumentException: com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding$DecodingException: Unrecognized character: <

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClientModule.provideBigQueryCredentialsSupplier(BigQueryClientModule.java:46)

while locating com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryCredentialsSupplier

for the 3rd parameter of com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClientModule.provideBigQueryClient(BigQueryClientModule.java:63)

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClientModule.provideBigQueryClient(BigQueryClientModule.java:63)

while locating com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClient

1 error

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalProvisionException.toProvisionException(InternalProvisionException.java:226)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InjectorImpl$1.get(InjectorImpl.java:1097)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InjectorImpl.getInstance(InjectorImpl.java:1131)

at com.google.cloud.spark.bigquery.BigQueryRelationProvider.createRelationInternal(BigQueryRelationProvider.scala:80)

at com.google.cloud.spark.bigquery.BigQueryRelationProvider.createRelation(BigQueryRelationProvider.scala:48)

at org.apache.spark.sql.execution.datasources.DataSource.resolveRelation(DataSource.scala:387)

at org.apache.spark.sql.DataFrameReader.loadV1Source(DataFrameReader.scala:375)

at org.apache.spark.sql.DataFrameReader.$anonfun$load$2(DataFrameReader.scala:331)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:331)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:223)

at sun.reflect.GeneratedMethodAccessor361.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)

at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:380)

at py4j.Gateway.invoke(Gateway.java:306)

at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)

at py4j.commands.CallCommand.execute(CallCommand.java:79)

at py4j.ClientServerConnection.waitForCommands(ClientServerConnection.java:195)

at py4j.ClientServerConnection.run(ClientServerConnection.java:115)

at java.lang.Thread.run(Thread.java:750)

Caused by: java.lang.IllegalArgumentException: com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding$DecodingException: Unrecognized character: <

at com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding.decode(BaseEncoding.java:218)

at com.google.cloud.spark.bigquery.repackaged.com.google.api.client.util.Base64.decodeBase64(Base64.java:106)

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryCredentialsSupplier.createCredentialsFromKey(BigQueryCredentialsSupplier.java:71)

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryCredentialsSupplier.<init>(BigQueryCredentialsSupplier.java:45)

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClientModule.provideBigQueryCredentialsSupplier(BigQueryClientModule.java:53)

at com.google.cloud.spark.bigquery.repackaged.com.google.cloud.bigquery.connector.common.BigQueryClientModule$$FastClassByGuice$$b1b60333.invoke(<generated>)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.ProviderMethod$FastClassProviderMethod.doProvision(ProviderMethod.java:264)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.ProviderMethod.doProvision(ProviderMethod.java:173)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalProviderInstanceBindingImpl$CyclicFactory.provision(InternalProviderInstanceBindingImpl.java:185)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalProviderInstanceBindingImpl$CyclicFactory.get(InternalProviderInstanceBindingImpl.java:162)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.ProviderToInternalFactoryAdapter.get(ProviderToInternalFactoryAdapter.java:40)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.SingletonScope$1.get(SingletonScope.java:168)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalFactoryToProviderAdapter.get(InternalFactoryToProviderAdapter.java:39)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.SingleParameterInjector.inject(SingleParameterInjector.java:42)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.SingleParameterInjector.getAll(SingleParameterInjector.java:65)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.ProviderMethod.doProvision(ProviderMethod.java:173)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalProviderInstanceBindingImpl$CyclicFactory.provision(InternalProviderInstanceBindingImpl.java:185)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalProviderInstanceBindingImpl$CyclicFactory.get(InternalProviderInstanceBindingImpl.java:162)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.ProviderToInternalFactoryAdapter.get(ProviderToInternalFactoryAdapter.java:40)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.SingletonScope$1.get(SingletonScope.java:168)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InternalFactoryToProviderAdapter.get(InternalFactoryToProviderAdapter.java:39)

at com.google.cloud.spark.bigquery.repackaged.com.google.inject.internal.InjectorImpl$1.get(InjectorImpl.java:1094)

... 20 more

Caused by: com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding$DecodingException: Unrecognized character: <

at com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding$Alphabet.decode(BaseEncoding.java:492)

at com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding$Base64Encoding.decodeTo(BaseEncoding.java:972)

at com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding.decodeChecked(BaseEncoding.java:233)

at com.google.cloud.spark.bigquery.repackaged.com.google.common.io.BaseEncoding.decode(BaseEncoding.java:216)
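The `Unrecognized character: <` message is consistent with the connector trying to base64-decode the `credentials` value, as the `Base64.decodeBase64` frame in the trace suggests. A Python object handed to `.option()` is presumably converted to text first, and the default text form of a Python object starts with `<`. The sketch below illustrates that with a stand-in class (`FakeCredentials`, hypothetical) and a hypothetical helper `is_base64_json` that checks a value before it is passed to the reader. It does not call the connector itself.

```python
import base64
import binascii
import json


def is_base64_json(value):
    """Return True if `value` is a str holding base64-encoded JSON."""
    if not isinstance(value, str):
        return False
    try:
        decoded = base64.b64decode(value, validate=True)
        json.loads(decoded)
    except (binascii.Error, ValueError):
        return False
    return True


class FakeCredentials:
    """Stand-in for a credentials object; only its default repr matters."""


as_text = str(FakeCredentials())
print(as_text[0])               # first character of the default repr
print(is_base64_json(as_text))  # whether that text would pass the check
```

If the check fails for the value being passed, encoding the raw contents of the key file with `base64.b64encode` and passing the resulting string is probably the encoding step the question asks about.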


11-23-2023 02:06 AM

Hi!

Did you manage to solve the problem?

I am facing the same issue. Please advise.
