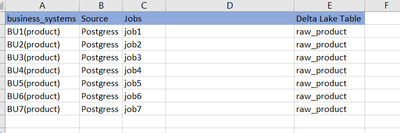

I have created 7 jobs, one per business system, to extract product data from each PostgreSQL source and write all of it into a single data lake Delta table, [raw_product].

Each business system's product table holds around 20 GB of data.
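For reference, the read side of each job could be sketched roughly as below. This is only a sketch under assumptions: it assumes PySpark with the built-in JDBC source, and the URL, the table name `public.product`, the numeric column `product_id`, and the bounds are all hypothetical placeholders, not taken from the actual jobs. The idea is to give Spark a partition column and bounds so the 20 GB table is pulled over several parallel connections instead of one.

```python
def jdbc_read_options(url, table, partition_column, lower_bound, upper_bound,
                      num_partitions=8, fetch_size=10000):
    """Build Spark JDBC options for a range-partitioned, parallel read.

    All argument values are placeholders chosen by the caller; the option
    keys are the ones documented for the Spark JDBC data source.
    """
    if lower_bound >= upper_bound:
        raise ValueError("lower_bound must be smaller than upper_bound")
    return {
        "url": url,
        "dbtable": table,
        "driver": "org.postgresql.Driver",
        # Spark splits [lowerBound, upperBound) on this column into
        # numPartitions ranges and issues one query per range.
        "partitionColumn": partition_column,
        "lowerBound": str(lower_bound),
        "upperBound": str(upper_bound),
        "numPartitions": str(num_partitions),
        # Rows fetched per round trip from PostgreSQL.
        "fetchsize": str(fetch_size),
    }


def read_products(spark, options):
    """Load the source table as a DataFrame using an existing SparkSession."""
    return spark.read.format("jdbc").options(**options).load()


# Hypothetical values for one business system.
opts = jdbc_read_options(
    url="jdbc:postgresql://host:5432/db",
    table="public.product",
    partition_column="product_id",
    lower_bound=1,
    upper_bound=1_000_000,
)
```

Inside a job this would be called as `read_products(spark, opts)`; the partition count and fetch size would need tuning against what the PostgreSQL server can handle.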

I do the same thing for 15 tables.

Is there any way to read from and write to Delta tables faster?

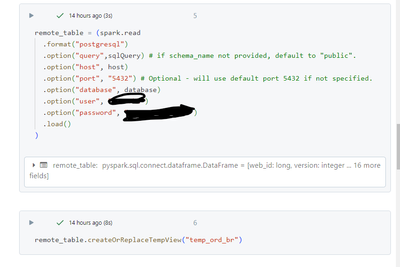

One job looks like the one below:

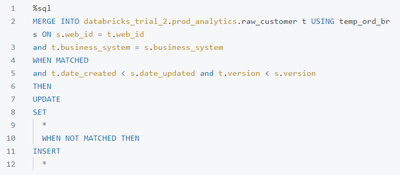

Daily data is loaded into the Delta table using the MERGE command.
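A minimal sketch of what such a daily merge statement might look like, written as a small Python helper that builds the SQL. The source view name `daily_updates`, the key column `product_id`, and the `source_system` column are assumptions for illustration only. The extra `t.source_system = ...` condition is there on the assumption that the target table is partitioned by business system, so each job's merge only has to scan its own partition.

```python
def build_merge_sql(target, source_view, key_columns, source_system):
    """Build a Delta Lake MERGE statement for one business system.

    target        : name of the Delta table, e.g. "raw_product"
    source_view   : temp view holding the day's extracted rows (hypothetical)
    key_columns   : columns that identify a row (hypothetical)
    source_system : value of the assumed partition column `source_system`
    """
    if not key_columns:
        raise ValueError("at least one key column is required")
    on_keys = " AND ".join(f"t.{c} = s.{c}" for c in key_columns)
    return (
        f"MERGE INTO {target} AS t\n"
        f"USING {source_view} AS s\n"
        # Restricting the target side to one partition lets Delta skip
        # files that belong to the other business systems.
        f"ON t.source_system = '{source_system}' AND {on_keys}\n"
        "WHEN MATCHED THEN UPDATE SET *\n"
        "WHEN NOT MATCHED THEN INSERT *"
    )


merge_sql = build_merge_sql(
    target="raw_product",
    source_view="daily_updates",
    key_columns=["product_id"],
    source_system="system_1",
)
```

In a job the statement would be run with `spark.sql(merge_sql)` after registering the daily extract as the temp view.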