Hi there, I'm finding this a bit trickier than originally expected and am hoping someone can help me understand if I'm missing something.

I have 3 jobs:

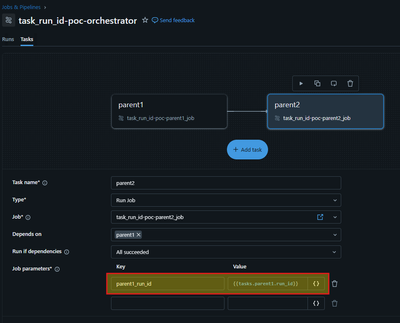

- One orchestrator job (tasks are of type run_job)
- Two "Parent" jobs (tasks are of type notebook)
  - parent1 runs the task child1
  - parent2 runs the task child2

I need to get the run_id of the child1 task in the *launched* parent1 job run. Originally, I was exploring using a dynamic value reference to feed the job parameter

parent1_run_id = {{tasks.parent1.run_id}}

I was thinking that with this run_id I could use the Databricks REST API to find the child1 run_id. However, what I'm seeing is that because

{{tasks.parent1.run_id}}

corresponds to the orchestrator's parent1 task run, which is different from the run_id of the actual *launched* parent1 job, this is a dead end, and I cannot use the REST API to pull the child1 run_id.
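
To make the intended lookup concrete, here is a minimal sketch of it in Python. It assumes the Jobs API 2.1 `runs/get` endpoint, whose response contains a `tasks` list with a `task_key` and `run_id` per task. The helper names `fetch_run` and `find_task_run_id` are hypothetical, and `host` and `token` are placeholders for a workspace URL and a personal access token.

```python
import json
import urllib.parse
import urllib.request


def fetch_run(host: str, token: str, run_id: int) -> dict:
    """Call GET /api/2.1/jobs/runs/get for one run and return the parsed JSON.

    host and token are placeholders: the workspace URL and an access token.
    """
    query = urllib.parse.urlencode({"run_id": run_id})
    request = urllib.request.Request(
        f"{host}/api/2.1/jobs/runs/get?{query}",
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def find_task_run_id(run: dict, task_key: str):
    """Return the run_id of the task named task_key, or None if it is absent.

    Assumes the response shape described above: a "tasks" list whose
    entries carry "task_key" and "run_id".
    """
    for task in run.get("tasks", []):
        if task.get("task_key") == task_key:
            return task.get("run_id")
    return None
```

Calling `find_task_run_id(fetch_run(host, token, parent1_run_id), "child1")` would only return something useful if `parent1_run_id` were the run_id of the launched parent1 job run. With the value from the dynamic value reference, the lookup targets the orchestrator's task run instead, which is the dead end described above.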

Please let me know if I'm missing anything here. I've attached some images for reference.