Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Data Engineering

- Re: How to get all occurrences of duplicate record...

Options

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-29-2022 10:55 PM

Hi,

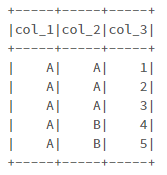

I need to find all occurrences of duplicate records in a PySpark DataFrame. Following is the sample dataset:

# Prepare Data

data = [("A", "A", 1), \

("A", "A", 2), \

("A", "A", 3), \

("A", "B", 4), \

("A", "B", 5), \

("A", "C", 6), \

("A", "D", 7), \

("A", "E", 8), \

]

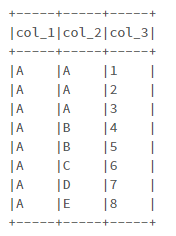

# Create DataFrame

columns= ["col_1", "col_2", "col_3"]

df = spark.createDataFrame(data = data, schema = columns)

df.printSchema()

df.show(truncate=False)

When I try the following code:

primary_key = ['col_1', 'col_2']

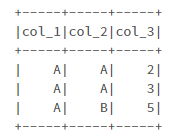

duplicate_records = df.exceptAll(df.dropDuplicates(primary_key))The output will be:

As you can see, I don't get all occurrences of duplicate records based on the Primary Key, since one instance of duplicate records is present in "df.dropDuplicates(primary_key)". The 1st and the 4th records of the dataset must be in the output.

Any idea to solve this issue?

Labels:

- Labels:

-

Duplicate Records

1 ACCEPTED SOLUTION

Accepted Solutions

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-29-2022 11:26 PM

Hi,

Getting the not duplicated records and doing 'left_anti' join should do the trick.

not_duplicate_records = df.groupBy(primary_key).count().where('count = 1').drop('count')

duplicate_records = df.join(not_duplicate_records, on=primary_key, how='left_anti').show()

3 REPLIES 3

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-29-2022 11:26 PM

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-30-2022 12:44 AM

@Mohammad Saber how about using window function like below

windowSpec = Window.partitionBy(*primary_key)

df.withColumn("primary_key_count",F.count("*").over(windowSpec)).filter(F.col("primary_key_count") > 1).drop("primary_key_count").show(truncate=False)Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-30-2022 01:30 AM

Hi,

In my experience, if you use dropDuplicates(), Spark will keep a random row.

Therefore, you should define a logic to remove duplicated rows.

Announcements

Related Content

- Spark JDBC Write Fails for Record Not Present - PK error in Data Engineering

- Old files also getting added in dlt autoloader in Data Engineering

- Duplicates in CSV Export to ADLS in Data Engineering

- Issue with Column Name Conflict While Importing .gz File into Spark DataFrame in Warehousing & Analytics

- pyspark dropDuplicates performance issue in Data Engineering