Hi, I'm currently using Databricks Runtime Version 9.1 LTS and everything works fine. When I change it to 11.0 (while keeping everything else the same), my libraries fail to install. Here is the error message:

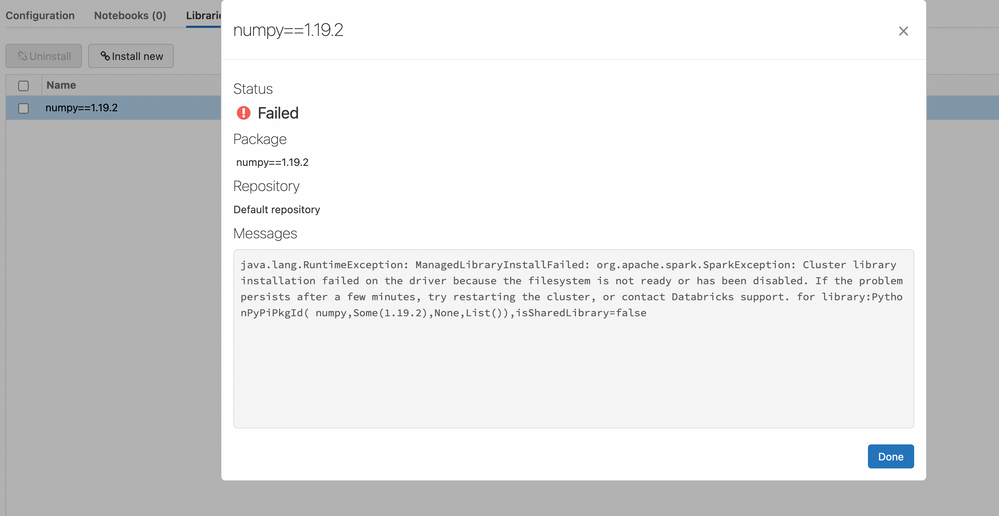

java.lang.RuntimeException: ManagedLibraryInstallFailed: org.apache.spark.SparkException: Cluster library installation failed on the driver because the filesystem is not ready or has been disabled. If the problem persists after a few minutes, try restarting the cluster, or contact Databricks support. for library:PythonPyPiPkgId( numpy,Some(1.19.2),None,List()),isSharedLibrary=false

and a screenshot:

I tried waiting a few minutes and restarting the cluster, but the error persists. Any ideas on how to fix this?
