Hello

We are facing some optimization problems with a workflow that interpolates raw measurements to one-second intervals.

We are currently using dbl-tempo for this, but had the same issues with a simple approach based on window functions.

We have the following input dataframe:

We interpolate the df like this:

    tsdf_aligned = tsdf_measurements.interpolate(
        freq="1 seconds",
        func="ceil",
        method="ffill",
        partition_cols=["id"],
        perform_checks=False,
    )
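To make the semantics we expect per signal concrete, here is a minimal pure-Python sketch. It is not tempo's implementation. It assumes that `func="ceil"` keeps the latest measurement within each one-second bucket and that `method="ffill"` repeats the previous value for every bucket without data. The function name `interpolate_ffill` and the sample values are made up for illustration.

```python
import math

def interpolate_ffill(measurements, bucket_seconds=1):
    """Resample (epoch_seconds, value) pairs to a fixed grid and forward-fill gaps.

    Assumption: within a bucket the latest measurement wins (our reading of
    func="ceil"); a bucket without data repeats the previous value (ffill).
    """
    if not measurements:
        return []
    latest_per_bucket = {}
    for ts, value in sorted(measurements):
        bucket = math.floor(ts / bucket_seconds) * bucket_seconds
        latest_per_bucket[bucket] = value  # later timestamps overwrite earlier ones
    first, last = min(latest_per_bucket), max(latest_per_bucket)
    result, current = [], None
    for bucket in range(first, last + bucket_seconds, bucket_seconds):
        current = latest_per_bucket.get(bucket, current)
        result.append((bucket, current))
    return result

# Hypothetical signal: three raw measurements spread over about four seconds.
raw = [(0.2, 10.0), (0.7, 11.0), (4.1, 12.0)]
aligned = interpolate_ffill(raw)
print(len(raw), "raw rows ->", len(aligned), "interpolated rows")
```

Even in this tiny case the row count grows with the covered timespan rather than with the number of raw measurements.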

Spark creates the partitions of the input df based on the raw data, which contains both high-frequency and very low-frequency signals. As a result, the partitions of the input df contain very different numbers of measurements per signal:

- one partition may contain only a few signals that have high-frequency data

- another partition may contain the data of thousands of signals that have only a few measurements in the timespan

This results in data skew in the interpolation step, because the partitions that hold many low-frequency signals are suddenly expanded to one entry per second per signal.

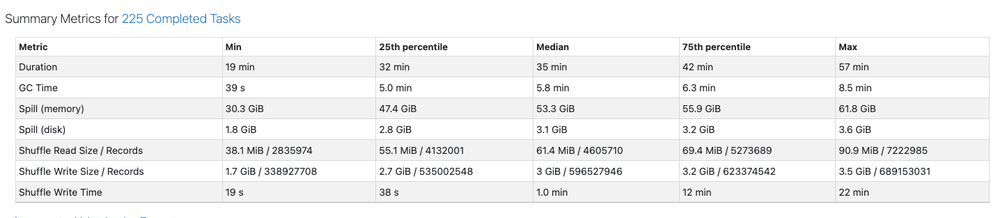
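A rough back-of-the-envelope calculation shows how large the expansion can get. The signal counts and measurement counts below are hypothetical, not taken from our data, and the calculation assumes every signal spans the full week.

```python
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800

def rows_after_interpolation(num_signals: int, span_seconds: int) -> int:
    """Approximate output rows when every signal gets one row per second."""
    return num_signals * span_seconds

# Hypothetical partition A: 5 high-frequency signals, already sampled at about 1 Hz.
input_a = 5 * SECONDS_PER_WEEK
output_a = rows_after_interpolation(5, SECONDS_PER_WEEK)

# Hypothetical partition B: 1000 low-frequency signals with 10 raw measurements each.
input_b = 1000 * 10
output_b = rows_after_interpolation(1000, SECONDS_PER_WEEK)

print(f"A: {input_a:,} rows in -> {output_a:,} rows out")
print(f"B: {input_b:,} rows in -> {output_b:,} rows out")
```

Under these assumptions, partition B is by far the smaller input but produces 200 times more output rows than partition A.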

For example, if we don't set any Spark conf, the input df for one week of data has 225 partitions.

We observed huge spilling and a bad distribution.

If we force Spark to use smaller partitions, we can reduce the spill a bit but still have huge skew in the data. For example, we set

    spark.conf.set("spark.sql.files.maxPartitionBytes", "16m")
    spark.conf.set("spark.sql.adaptive.advisoryPartitionSizeInBytes", "16m")

and end up with 1100 partitions in the interpolation step, but the data is still heavily skewed.

We tried calling repartitionByRange(4000, "id") on the df to create a more evenly distributed input df, but this creates some partitions where thousands of low-frequency signals are grouped together and others where only a few signals are grouped together (as Spark tries to make evenly sized partitions).
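To illustrate what we think is happening, here is a simplified pure-Python model of range partitioning by id. It is not Spark's actual sampling-based implementation: it just cuts the id-sorted signals into contiguous ranges with roughly equal raw row counts (as far as whole signals allow) and then compares the row counts before and after interpolation. The helper `split_by_input_rows` and all numbers are made up for illustration.

```python
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # one output row per second and signal

def split_by_input_rows(signals, num_partitions):
    """Cut id-sorted (signal_id, raw_rows) pairs into contiguous id ranges
    of roughly equal raw row count, never splitting a single signal."""
    total = sum(rows for _, rows in signals)
    target = total / num_partitions
    partitions, current, current_rows = [], [], 0
    for signal_id, rows in sorted(signals):
        current.append((signal_id, rows))
        current_rows += rows
        # Close the range once it reaches the target, keeping the last one open.
        if current_rows >= target and len(partitions) < num_partitions - 1:
            partitions.append(current)
            current, current_rows = [], 0
    if current:
        partitions.append(current)
    return partitions

# Hypothetical data: 4 signals at about 1 Hz, 1000 signals with 10 raw rows each.
high_freq = [(i, SECONDS_PER_WEEK) for i in range(4)]
low_freq = [(i, 10) for i in range(4, 1004)]
partitions = split_by_input_rows(high_freq + low_freq, num_partitions=3)

# (signals, raw rows, rows after interpolating one week to 1 s) per partition
summary = [
    (len(p), sum(rows for _, rows in p), len(p) * SECONDS_PER_WEEK)
    for p in partitions
]
for n_signals, rows_in, rows_out in summary:
    print(f"{n_signals:>5} signals: {rows_in:>9,} rows in -> {rows_out:>11,} rows out")
```

In this model the partition holding the 1000 low-frequency signals has the fewest raw rows but ends up 500 times larger than the others after interpolation, which matches the skew we see.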

We are running out of ideas for how to prepare the input df so that it has well-defined partitions. Are we missing something, or are we using a completely wrong approach?