Hi Team,

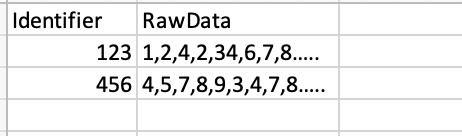

My Python DataFrame is shown above.

Each RawData value is a long series of approximately 5,000 numbers. I need to go through each row of the RawData column and calculate two metrics. I have written a Python function for this, and it works fine.

Python Function

import pandas as pd

def calculate_metrics(value_dict):
    # Work on a copy so the caller's DataFrame is not modified.
    df = value_dict.copy()
    df['Metric1'] = pd.Series(dtype='float')
    df['Metric2'] = pd.Series(dtype='float')
    # Compute both metrics for each row and write them back by Id.
    for index, row in value_dict.iterrows():
        df.loc[df['Id'] == row['Id'], 'Metric1'] = Function1(row['RawData'])
        df.loc[df['Id'] == row['Id'], 'Metric2'] = Function2(row['RawData'])
    return df

Here I pass a DataFrame, value_dict, with two columns: an identifier (Id) and RawData.

I call it as below:

import numpy as np

# Convert each RawData entry to a NumPy array before computing the metrics.
value_dict['RawData'] = value_dict['RawData'].apply(lambda x: np.array(x))
df_Fullagg = calculate_metrics(value_dict)

This calculates all the metrics I need and returns them in a DataFrame.
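
For reference, the same per-row calculation can be sketched without the explicit `iterrows` loop, which may make a later port to Spark easier to see. This is only a minimal sketch: it assumes `Function1` and `Function2` each take one NumPy array and return a single float, and the two stand-ins below (mean and standard deviation) are hypothetical placeholders, not the real metrics. The name `calculate_metrics_apply` is also made up for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-ins for the real Function1 / Function2.
def Function1(raw):
    return float(np.mean(raw))

def Function2(raw):
    return float(np.std(raw))

def calculate_metrics_apply(value_dict):
    # Copy so the input DataFrame is left untouched.
    df = value_dict.copy()
    # Series.apply calls the function once per row's RawData array.
    df['Metric1'] = df['RawData'].apply(Function1).astype('float')
    df['Metric2'] = df['RawData'].apply(Function2).astype('float')
    return df

# Tiny made-up sample with the same two columns as the question.
sample = pd.DataFrame({
    'Id': [1, 2],
    'RawData': [[1.0, 2.0, 3.0], [4.0, 4.0]],
})
sample['RawData'] = sample['RawData'].apply(lambda x: np.array(x))
result = calculate_metrics_apply(sample)
```

Written this way, each metric is a function of a single column, which is the shape a Series-based UDF expects.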

The volume of data is quite high here. I want to use the Spark framework with Azure Synapse. How can I write the same function using pandas_udf?

I am looking for some implementation like this.

@pandas_udf('int', PandasUDFType.SCALAR)
def calculate_metrics(value_dict):
    df = value_dict.copy()
    df['Metric1'] = pd.Series(dtype='float')
    df['Metric2'] = pd.Series(dtype='float')
    for index, row in value_dict.iterrows():
        df.loc[df['Id'] == row['Id'], 'Metric1'] = Function1(row['RawData'])
        df.loc[df['Id'] == row['Id'], 'Metric2'] = Function2(row['RawData'])
    return df
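
One point worth noting about the shape above: a scalar pandas UDF receives one or more pandas Series and must return a Series of the same length, so it cannot return a whole DataFrame. A possible direction is one Series-to-Series UDF per metric. The sketch below is not a definitive implementation. It assumes Spark 3.x with PyArrow available, a Spark DataFrame whose `RawData` column is an array of doubles, and that `Function1` / `Function2` return one float per array. The mean and standard deviation stand-ins and the names `metric1_batch`, `metric2_batch`, and `add_metrics` are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-ins for the real Function1 / Function2.
def Function1(raw):
    return float(np.mean(raw))

def Function2(raw):
    return float(np.std(raw))

def metric1_batch(raw_data: pd.Series) -> pd.Series:
    # Spark passes a batch of rows; each element is one row's RawData array.
    return raw_data.apply(lambda x: Function1(np.asarray(x))).astype('float64')

def metric2_batch(raw_data: pd.Series) -> pd.Series:
    return raw_data.apply(lambda x: Function2(np.asarray(x))).astype('float64')

def add_metrics(sdf):
    # pyspark is imported here so the batch functions above can be
    # exercised with plain pandas, without a Spark session.
    from pyspark.sql.functions import pandas_udf

    metric1_udf = pandas_udf(metric1_batch, returnType='double')
    metric2_udf = pandas_udf(metric2_batch, returnType='double')
    # Each UDF adds one double column computed from RawData.
    return (sdf.withColumn('Metric1', metric1_udf('RawData'))
               .withColumn('Metric2', metric2_udf('RawData')))
```

With a Spark DataFrame `sdf` holding `Id` and `RawData`, the call would be `add_metrics(sdf)`. If a DataFrame-in, DataFrame-out function closer to the original is preferred, `DataFrame.mapInPandas` may be worth a look as well.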

Any help would be much appreciated.