- 23007 Views

- 9 replies

- 4 kudos

Resolved! How to get usage statistics from Databricks or SQL Databricks?

Hi, I am looking for a way to get usage statistics from Databricks (Data Science & Engineering and SQL persona). For example: I created a table. I want to know how many times a specific user queried that table. How many times a pipeline was trigge...

You can use System Tables, now available in Unity Catalog metastore, to create the views you described. https://docs.databricks.com/en/admin/system-tables/index.html
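As a rough illustration of the reply above, here is a minimal sketch that builds a per-user query count for one table. It assumes the workspace exposes a query history system table named `system.query.history` with `executed_by` and `statement_text` columns; both the table name and the column names are assumptions to check against the linked system tables documentation. The helper `build_table_usage_query` is hypothetical, and the text match is only approximate (a statement that merely mentions the table name is counted too).

```python
import re

# Assumption: the query history system table has this name and exposes
# `executed_by` and `statement_text`. Verify against the system tables docs.
QUERY_HISTORY_TABLE = "system.query.history"

# Accept plain one-, two-, or three-part names such as catalog.schema.table.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*){0,2}$")


def build_table_usage_query(table_name: str) -> str:
    """Return SQL counting, per user, the statements that mention table_name."""
    if not _IDENTIFIER.match(table_name):
        raise ValueError(f"unexpected table name: {table_name!r}")
    # LIKE is a text match, so this over-counts statements that only
    # mention the name (comments, similarly named tables, and so on).
    return (
        "SELECT executed_by, COUNT(*) AS query_count\n"
        f"FROM {QUERY_HISTORY_TABLE}\n"
        f"WHERE lower(statement_text) LIKE '%{table_name.lower()}%'\n"
        "GROUP BY executed_by\n"
        "ORDER BY query_count DESC"
    )


if __name__ == "__main__":
    print(build_table_usage_query("main.sales.orders"))
```

The generated statement can be pasted into the SQL editor or saved as a view once the column names have been confirmed.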

- 18227 Views

- 4 replies

- 3 kudos

Connect to Databricks SQL Endpoint using Programming language

Hi, I would like to know whether there are options available to connect to a Databricks SQL endpoint using a programming language like Java/Scala/C#. I can see the JDBC URL, but would like to know whether it can be considered as any other jdbc conn...

I found a similar question on Stack Overflow: https://stackoverflow.com/questions/77477103/ow-to-properly-connect-to-azure-databricks-warehouse-from-c-sharp-net-using-jdb
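For the JDBC URL mentioned in the question, a minimal sketch of how such a URL is typically assembled for personal access token authentication. The `jdbc:databricks://` prefix and the `httpPath`, `AuthMech=3`, `UID=token`, `PWD` properties are given here as assumptions based on the current Databricks JDBC driver (older drivers use a `jdbc:spark://` prefix), so check them against the driver documentation for your version. The helper name and all sample values are hypothetical.

```python
def build_databricks_jdbc_url(server_hostname: str, http_path: str, token: str) -> str:
    """Assemble a JDBC URL for a SQL endpoint using token authentication.

    Assumption: property names follow the current Databricks JDBC driver;
    verify them against the driver documentation for your version.
    """
    return (
        f"jdbc:databricks://{server_hostname}:443;"
        f"httpPath={http_path};AuthMech=3;UID=token;PWD={token}"
    )


if __name__ == "__main__":
    # Placeholder values only; copy the real ones from the endpoint's
    # connection details page.
    print(
        build_databricks_jdbc_url(
            "adb-123.azuredatabricks.net",
            "/sql/1.0/warehouses/abc",
            "<personal-access-token>",
        )
    )
```

The resulting string is what a Java or Scala client would pass to `DriverManager.getConnection`, so in that sense the endpoint behaves like any other JDBC data source once the driver is on the classpath.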

- 3224 Views

- 1 reply

- 2 kudos

How to prevent users from scheduling SQL queries?

We have noticed that users can schedule SQL queries, but so far we haven't found a way to list these scheduled queries (they do not show up in the jobs workplane). Therefore, we don't know when people have scheduled one. The only way is to look at t...

@Paulo Rijnberg: In Databricks, you can use the following approaches to prevent users from scheduling SQL queries and to receive notifications when such queries are scheduled: cluster-level permissions, Jobs API, notification hooks, audit logs and monitori...

- 8739 Views

- 6 replies

- 5 kudos

Migration of Databricks Jobs, SQL dashboards and Alerts from lower environment to higher environment?

I want to move Databricks Jobs, SQL dashboards, Queries and Alerts from a lower environment to a higher environment. How can we move them?

Hi @Shubham Agagwral, thank you for posting your question in our community! We are happy to assist you. To help us provide you with the most accurate information, could you please take a moment to review the responses and select the one that best answ...

- 3349 Views

- 1 reply

- 3 kudos

How to change compression codec of sql warehouse written files?

Hi, I'm starting to use SQL Warehouse, and most of our lake uses a compression codec other than snappy. How can I set the SQL warehouse to use a codec like gzip or zstd on CREATE, INSERT, etc.? Tried this: set spark.sql.parquet.comp...

Hi @Alejandro Martinez Great to meet you, and thanks for your question! Let's see if your peers in the community have an answer to your question. Thanks.

- 6621 Views

- 1 reply

- 9 kudos

Refreshing SQL Dashboard

You can schedule the dashboard to automatically refresh at an interval. At the top of the page, click Schedule. If the dashboard already has a schedule, you see Scheduled instead of Schedule. Select an interval, such as Every 1 h...

- 7801 Views

- 1 reply

- 0 kudos

Spark UI SQL/Dataframe tab missing queries

Hi, recently I have been having problems viewing the query plans in the Spark UI SQL/Dataframe tab. I would expect to see large query plans in the SQL tab where we can observe the details of the query such as the rows read/written/shuffled. Howeve...

@Koray Beyaz: This issue may be related to a change in the default behavior of the Spark UI in recent versions of Databricks Runtime. In earlier versions, the Spark UI would display the full query plan for SQL and DataFrame operations in the SQL/Dat...

- 3463 Views

- 3 replies

- 2 kudos

Resolved! About SQL workspace option

I can't find the SQL workspace option in my free Community Edition.

Hi @Machireddy Nikitha, thank you for posting your question in our community! We are happy to assist you. To help us provide you with the most accurate information, could you please take a moment to review the responses and select the one that best an...

- 7051 Views

- 3 replies

- 9 kudos

Resolved! Databricks SQL Endpoint start times

When I first log in and start using Databricks SQL, the endpoints always take a while to start. Is there anything I can do to improve the cold start experience on the platform?

Could you share your specific use case so that I can help?

- 5931 Views

- 1 reply

- 2 kudos

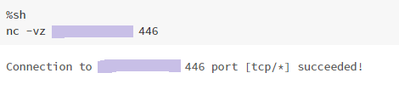

Resolved! DB2 JDBC connection error

Hello. I am trying to establish a connection from Databricks to our DB2 AS400 server. Below is my code: CREATE TABLE IF NOT EXISTS table_name USING JDBC OPTIONS (DRIVER = "com.ibm.as400.access.AS400JDBCDriver", URL = "jdbc:as400://<IP>:446;prompt=false...

- 7961 Views

- 3 replies

- 0 kudos

What is the Databricks SQL equivalent of string_agg in PostgreSQL?

Hello, I am starting to use Databricks and have some handy functions in PostgreSQL for which I am struggling to find an equivalent in Databricks. The function is string_agg. It is used to concatenate a list of strings with a given delimiter. More...

Hey there @Julie Calhoun, hope all is well! Just wanted to check in: were you able to resolve your issue, and would you be happy to share the solution or mark an answer as best? Otherwise, please let us know if you need more help. We'd love to hear from y...
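For the string_agg question in this thread, one common way to get the same effect in Spark SQL is to combine `collect_list` with `array_join`. The sketch below only builds that statement; the helper name `string_agg_sql` and the sample table and column names are hypothetical, and this is one possible approach rather than an accepted answer from the thread. Note that `collect_list` does not guarantee element order, so results can differ from a Postgres `string_agg(... ORDER BY ...)`.

```python
def string_agg_sql(column: str, delimiter: str, table: str, group_by: str) -> str:
    """Build Spark SQL that joins the values of `column` per group.

    Emulates Postgres string_agg(column, delimiter) using
    array_join(collect_list(...), delimiter).
    """
    if "'" in delimiter:
        # Keep the sketch simple: no quote escaping is attempted.
        raise ValueError("delimiter must not contain a single quote")
    return (
        f"SELECT {group_by}, "
        f"array_join(collect_list({column}), '{delimiter}') AS {column}_agg\n"
        f"FROM {table}\n"
        f"GROUP BY {group_by}"
    )


if __name__ == "__main__":
    print(string_agg_sql("name", ", ", "employees", "dept"))
```

`concat_ws(delimiter, collect_list(column))` is an equivalent spelling of the same idea.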

- 7419 Views

- 7 replies

- 3 kudos

Does Databricks SQL support Azure SQL DB?

Hi, I could create Spark tables based on SQL Server (Azure SQL, actually) and query the tables from a notebook. On the other hand, I can see the tables listed in the Hive metastore in Databricks SQL. However, when I try to query the tables there, it say...

@Kaniz Fatma @Prabakar Ammeappin I do not know if you are aware of what @bhumi shamra published above. Those comments are irrelevant.

- 4417 Views

- 3 replies

- 1 kudos

Databricks SQL connector for NodeJS

Hi, can we use NodeJS code to run SQL on a Databricks cluster? I have found a similar example with Python: https://pypi.org/project/databricks-sql-connector. Can the same be done using JavaScript / NodeJS? Thanks

Hey there @Subhramalya Bose, hope all is well! Just wanted to check in: were you able to resolve your issue, and would you be happy to share the solution or mark an answer as best? It would be really helpful for the other members too. We'd love to he...

- 6496 Views

- 6 replies

- 1 kudos

SQL endpoint vCPU on Azure

How do I change the vCPU type for a SQL Endpoint on Azure Databricks? Currently it defaults to DSv4 vCPUs, which reach their quota limit even though I have unused quota in other vCPU types. I faced a similar issue while using Delta Live Tables but I was ...

Hi @Tarique Anwar, I don't think changing the vCPU type is possible for now. However, I can check with the product team on this. https://docs.microsoft.com/en-us/azure/databricks//sql/admin/sql-endpoints#required-azure-vcpu-quota

- 3827 Views

- 3 replies

- 1 kudos

Row Limit for Databricks SQL

What is the download row limit for Databricks SQL? Can we increase it?
