Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Get Started Discussions

Start your journey with Databricks by joining discussions on getting started guides, tutorials, and introductory topics. Connect with beginners and experts alike to kickstart your Databricks experience.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Get Started Discussions

- Re: Getting HTML sign I page as api response from ...

Options

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

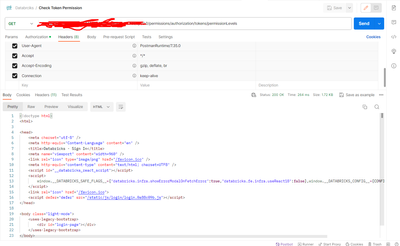

Getting HTML sign-in page as API response from Databricks API with status code 200

11-28-2023 01:55 AM

Response:

<!doctype html>


<html>

<head>

<meta charset="utf-8" />

<meta http-equiv="Content-Language" content="en" />

<title>Databricks - Sign In</title>

<meta name="viewport" content="width=960" />

<link rel="icon" type="image/png" href="/favicon.ico" />

<meta http-equiv="content-type" content="text/html; charset=UTF8" />

<script id="__databricks_react_script"></script>

<script>

window.__DATABRICKS_SAFE_FLAGS__={"databricks.infra.showErrorModalOnFetchError":true,"databricks.fe.infra.useReact18":false},window.__DATABRICKS_CONFIG__={CONFIG_PLACEHOLDER_KEY:null}

</script>

<link rel="icon" href="/favicon.ico">

<script defer="defer" src="/static/js/login/login.0a88c09b.js"></script>

</head>

<body class="light-mode">

<uses-legacy-bootstrap>

<div id="login-page"></div>

</uses-legacy-bootstrap>

</body>

</html>
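Since the status code is 200, a client that only checks the status will treat this sign-in page as a success. Below is a minimal sketch of a guard that inspects the Content-Type header and the start of the body before parsing. The function names `looks_like_sign_in_page` and `parse_api_response` are hypothetical and not part of any Databricks SDK.

```python
import json


def looks_like_sign_in_page(content_type: str, body: str) -> bool:
    """Return True when a response looks like an HTML page rather than API JSON."""
    if "text/html" in content_type.lower():
        return True
    # Fall back to sniffing the body in case the header is missing or wrong.
    head = body.lstrip()[:200].lower()
    return head.startswith("<!doctype html") or head.startswith("<html")


def parse_api_response(status: int, content_type: str, body: str) -> dict:
    """Parse a REST API response, refusing to treat an HTML page as success."""
    if looks_like_sign_in_page(content_type, body):
        raise RuntimeError(
            f"HTTP {status} returned HTML instead of JSON; "
            "the request was probably not handled as an authenticated API call"
        )
    return json.loads(body)
```

Raising an error here makes a script or pipeline step fail at the point of the call instead of passing HTML on to later steps.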

Labels: Access Data, API Documentation

12 REPLIES

02-04-2024 11:02 AM

I am receiving the same response.

I performed the following steps:

1. Created a service principal (SP) on Azure.
2. Granted it the Contributor role on the Azure resource where the Databricks workspace is created.
3. Generated a token using: az account get-access-token --resource 2ff814a6-3304-4ab8-85cb-cd0e6f879c1d
4. Used this token as the bearer token when calling the Databricks create token API.
5. In the response I get status code 200 and HTML code.

In my scenario, I am calling a PowerShell inline script in an Azure CI pipeline. I also tried to replicate the same process via Postman and received the same response.

Note: If I use all the above information and run the command locally in PowerShell, it works fine and returns a token.
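For reference, steps 3 and 4 above can be sketched as follows. This is only an illustration: the function name `build_token_create_request`, its parameters, and the default values are hypothetical. The `lifetime_seconds` and `comment` fields are the request body fields of the token create endpoint as I understand it, so check them against the API reference for your workspace. The sketch only builds the request and does not send it.

```python
import json
import urllib.request


def build_token_create_request(workspace_host, aad_token,
                               lifetime_seconds=3600, comment="ci pipeline"):
    """Build (but do not send) a POST request to /api/2.0/token/create.

    aad_token is assumed to be the access token obtained in step 3 with
    az account get-access-token --resource 2ff814a6-3304-4ab8-85cb-cd0e6f879c1d
    """
    payload = {"lifetime_seconds": lifetime_seconds, "comment": comment}
    return urllib.request.Request(
        url=f"https://{workspace_host}/api/2.0/token/create",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {aad_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

The request can then be sent with `urllib.request.urlopen(req)`. Running the same script locally and on the pipeline agent would show whether the difference is in the request or in where it runs from.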

02-04-2024 08:28 PM

You need to set DATABRICKS_TOKEN in the Authorization header:

curl --request GET "https://${DATABRICKS_HOST}/api/2.0/clusters/get" \
--header "Authorization: Bearer ${DATABRICKS_TOKEN}" \
--data '{ "cluster_id": "1234-567890-a12bcde3" }'

02-04-2024 11:01 PM

Hello @feiyun0112 ,

Thanks for the response. I am already using the header in my cURL call, and I also tried the following command in PowerShell:

Invoke-WebRequest -Uri "https://$databricksWorkspaceUrl/api/2.0/token/create" -Body ($json | ConvertTo-Json) -ContentType "application/json" -Method Post -Headers $headers

This works fine when I trigger it from the local PowerShell CLI but fails when I trigger it from Azure CI pipelines or Postman.

02-18-2024 12:47 PM

@TestuserAva

Can you please share how you tackled this problem, in case you solved it?

For me it works using PowerShell Invoke-WebRequest but not using Postman.

02-28-2024 11:30 AM

Hey all! I'm having the exact same problem. Did you manage to make it work, @Abhishek10745 @TestuserAva?

Could you please share the solution if you did? Thanks!

02-28-2024 01:06 PM

Hello @SJR ,

In the scenario I described in my previous comment, my CI pipeline was using an agent pool (scale set) that did not have access to this Azure Databricks service. So when my service principal tried to create a PAT through the Databricks API while running on the wrong scale set/pool, it was not allowed to log in, and I could not perform any operation using the Databricks API.

When I used the correct scale set/pool, which had been given access to this Azure Databricks service, it worked.

Hope this helps you investigate in the right direction.

02-29-2024 07:28 AM

Hello @Abhishek10745

It was just like you said! We have a completely private instance of Databricks, and the DevOps pipeline I was using didn't have access to the private VNet. Switching pools solved the problem. Thanks for all the help!
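One thing that might help narrow this down is printing how the workspace hostname resolves on the machine that runs the pipeline. The sketch below rests on an assumption that is not confirmed anywhere in this thread: that with a private endpoint, the hostname resolves to a private address from inside the VNet and to a public one elsewhere. The helper names `classify_addresses` and `resolve_host` are hypothetical.

```python
import ipaddress
import socket


def classify_addresses(addresses):
    """Map each IP address string to 'private' or 'public'."""
    return {
        addr: "private" if ipaddress.ip_address(addr).is_private else "public"
        for addr in addresses
    }


def resolve_host(hostname, port=443):
    """Return the sorted, de-duplicated IP addresses this machine resolves the hostname to."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


# Example usage on a pipeline agent (replace the placeholder host with your workspace URL host):
# print(classify_addresses(resolve_host("<your-workspace-host>")))
```

If a working machine and the failing agent pool print different results for the same host, that would be consistent with a network access difference like the one described above, though it does not prove it.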

08-14-2024 11:25 AM

Hi @Abhishek10745 or @SJR ,

I am having the exact same problem, and our Databricks workspace is in a VNet. Can you please explain the process of switching pools?

08-14-2024 12:50 PM

Hello @schunduri ,

Your DevOps team probably has a dedicated scale set or cluster that has permission on your VNet.

In the CI pipeline, try using the name of the scale set created by your DevOps team.

In my case the cloud was Azure, which is why I use the word "scale set" for what the CI pipelines run on.

Regards,

Abhishek Kalekar

08-14-2024 12:55 PM

Thank you, @Abhishek10745. We are also using Azure DevOps and Azure Databricks. I will check with our internal DevOps team.

08-14-2024 12:23 PM

Hey @schunduri, I'm not entirely sure because our SRE made the change, but the machine the pipeline runs on must be within the same VNet as your Databricks workspace.

If you need more guidance, I could try and check what we did but our SRE left the company since then and it's been a while.

Let me know and best of luck!

08-14-2024 12:33 PM

Thank you, @SJR, for the quick response. I would really appreciate it if you could find the details.
