Hi,

https://notebooks.databricks.com/demos/llm-rag-chatbot/index.html

Following this tutorial, I'm trying to serve an endpoint with the DBRX model connected to my data in a vector DB.

I can log my model in Databricks with MLflow and call it locally from notebooks without any problem, but when I try to serve the endpoint, the deployment fails after about 35-40 minutes with this message:

OperationFailed: failed to reach NOT_UPDATING, got EndpointStateConfigUpdate.UPDATE_FAILED: current status: EndpointStateConfigUpdate.UPDATE_FAILED

In the create_and_wait() call I set the timeout parameter to two hours, so that the method does not stop after the default 20 minutes:

w.serving_endpoints.create_and_wait(name=serving_endpoint_name, config=endpoint_config, timeout=timedelta(hours=2))

The timeout value is applied correctly, so there must be another issue causing the failure.
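To narrow this down from the notebook, a minimal sketch like the one below could wrap the call and, on failure, pull the endpoint state and the container build logs. It assumes `w` is a `databricks.sdk.WorkspaceClient` and uses the SDK's `serving_endpoints.get` and `serving_endpoints.build_logs` calls. The helper name `create_and_diagnose` and the `served_model_name` argument are placeholders for illustration; the served model name has to match the one shown in the Serving tab.

```python
from datetime import timedelta


def create_and_diagnose(w, name, config, served_model_name,
                        timeout=timedelta(hours=2)):
    """Create the endpoint; on failure, return its state and build logs.

    `w` is assumed to be a databricks.sdk.WorkspaceClient.
    `served_model_name` is a placeholder: use the name from the Serving tab.
    """
    try:
        w.serving_endpoints.create_and_wait(
            name=name, config=config, timeout=timeout
        )
        return {"ok": True, "error": None, "state": None, "build_logs": None}
    except Exception as err:  # the SDK reports UPDATE_FAILED as an exception
        # Current endpoint state (config_update / ready fields).
        state = w.serving_endpoints.get(name).state
        try:
            # Build logs cover the container build, including the conda step.
            build_logs = w.serving_endpoints.build_logs(
                name, served_model_name
            ).logs
        except Exception as log_err:
            build_logs = f"<could not fetch build logs: {log_err}>"
        return {
            "ok": False,
            "error": str(err),
            "state": state,
            "build_logs": build_logs,
        }
```

Calling it as `result = create_and_diagnose(w, serving_endpoint_name, endpoint_config, "<served model name>")` and printing `result["build_logs"]` should show whether the failure comes from building the environment rather than from the timeout.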

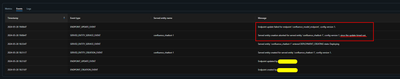

Screenshots from the Serving tab in Databricks:

In the service logs I can also see some exceptions raised by conda:

Any idea how to solve the issue?