Technical Blog


5 Common Pitfalls to Avoid when Migrating to Unity...

Databricks Employee

11-30-2023

07:11 AM

Transitioning to Unity Catalog in the Databricks ecosystem is a critical move for better data governance and operational efficiency. Unity Catalog streamlines data management and provides a secure, organized data hub. However, like any technology shift, migration demands careful planning. This post covers the five most common pitfalls and offers solutions that follow Databricks best practices for a smooth migration to Unity Catalog.

1. Mismanagement of Metastores

Unity Catalog uses one metastore per region, which keeps data clearly separated across regions. Misconfiguring metastores can introduce operational issues. Unity Catalog addresses limitations of traditional metastores such as Hive and Glue: centralized control, auditing, lineage, and data discovery make it well suited to managing data and AI assets across clouds.

Recommendation

- Metastores sit at the top of the governance hierarchy and manage data assets and permissions. Stick to one metastore per region and use Databricks-to-Databricks Delta Sharing to share data across regions. This setup keeps regional data isolated starting at the catalog level, supports operational consistency, helps prevent data inconsistencies, and follows Databricks' data governance guidelines.

- Make sure you understand the essentials of data governance, and tailor a model for your organization.

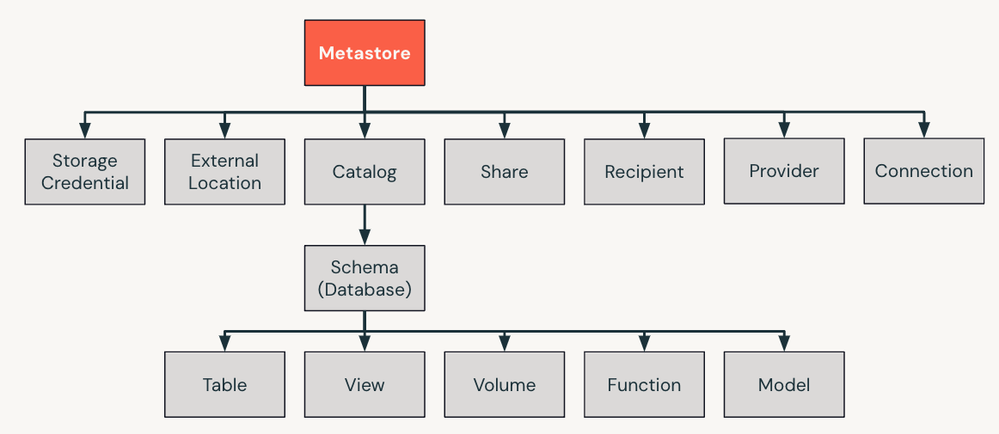

- Unity Catalog's structure comprises Metastores, Catalogs, Schemas, Tables, Views, Rows, Columns, and, more recently, Volumes and Models, each with specific roles and permissions.

- Catalogs are pivotal for data isolation and often mirror organizational or software development lifecycle (SDLC) patterns. Create catalogs or schemas that align with your organization or SDLC for robust data governance.

- Keep data storage separate where organizational or legal requirements demand it. Designate a managed storage location at the catalog and schema levels to enforce data isolation and governance. Notably, the metastore doesn't require a defined root storage location, which enables strict segregation of data between catalogs.

- Use Unity Catalog to bind catalogs to workspaces, allowing data access only in defined areas. Understand privileges and roles in the data structure for precise access control.

- For smooth data sharing, use Delta Sharing. Ensure users don't have direct access to the metastore's storage, so that Unity Catalog's controls and audit trails can't be bypassed.
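As a minimal sketch of the catalog-level and schema-level storage recommendations above, the following SQL creates a catalog and a schema with their own managed storage locations. The catalog name `dev`, the schema name `finance`, and the `s3://example-bucket-dev/...` paths are hypothetical, and the paths are assumed to fall under an external location that an admin has already registered.

```sql
-- Hypothetical names and paths; adjust to your own environment.
-- Assumes an external location covering s3://example-bucket-dev/ already exists.

-- Catalog with its own managed storage, isolated from other catalogs.
CREATE CATALOG IF NOT EXISTS dev
  MANAGED LOCATION 's3://example-bucket-dev/managed';

-- Schema that overrides the catalog default with a dedicated location.
CREATE SCHEMA IF NOT EXISTS dev.finance
  MANAGED LOCATION 's3://example-bucket-dev/managed/finance';
```

Managed tables created in `dev.finance` would then be stored under the schema's location, while other schemas in `dev` fall back to the catalog's location.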

By carefully configuring your metastore and implementing strategic data governance, you can minimize risks and achieve a seamless transition to Unity Catalog.

2. Inadequate Access Control and Permissions Configuration

Efficient data management in Unity Catalog hinges on accurate roles and access controls. Unity Catalog defines several admin roles at different levels of the hierarchy. Setting up permissions correctly at the table, schema, and catalog levels is essential for protecting data and governing access.

Recommendation

- Take time to understand Databricks' data governance fundamentals and adopt a tiered permissions structure spanning the metastore, catalog, and table levels. Granting permissions to groups rather than individuals strengthens the access control plan and improves data security. Design your data isolation strategy in accordance with organizational or legal guidelines, including distinct access policies, dedicated data processing, and isolated data storage accessible only in specified areas.

- To enable read-only data access, grant all of the following permissions:

  - SELECT permission on the tables or views.

  - USE SCHEMA permission on the parent schema.

  - USE CATALOG permission on the parent catalog.

- Use Unity Catalog's security framework to set up sandbox areas for users or groups, where they can create tables in their own schemas without sharing data outside them.

- Remember, only Shared Clusters support Row Level Security and Column Level Masks.

- Use dynamic views only when strictly necessary.

- Favor Row Filters and Column Level Masks. For complex filters or masks, turn to lookup tables.

- Prefer groups over individual users when granting access in dynamic views and in row filters and column masks (RLS/CLM), and write column masks as modular functions so they can be reused.
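The read-only permission set described above can be sketched as three grants. The catalog `main`, schema `sales`, table `orders`, and group `data-readers` are hypothetical names chosen for illustration.

```sql
-- Hypothetical object and group names.
-- All three privileges are needed before members of the group can query the table.
GRANT USE CATALOG ON CATALOG main TO `data-readers`;
GRANT USE SCHEMA ON SCHEMA main.sales TO `data-readers`;
GRANT SELECT ON TABLE main.sales.orders TO `data-readers`;

-- Review what has been granted on the table.
SHOW GRANTS ON TABLE main.sales.orders;
```

Granting to a group rather than to individual users keeps the permission model stable as people join or leave teams.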

See Getting Started With Databricks Groups and Permissions for more details.
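As a rough illustration of the row filter and column mask recommendations, the sketch below defines one reusable filter function and one reusable mask function, both keyed on group membership. The schema `main.security`, the table `main.sales.orders`, its columns `region` and `email`, and the groups `admins` and `support` are assumptions made for this example.

```sql
-- Hypothetical names throughout; assumes the schema main.security exists.

-- Row filter: members of `admins` see every row, everyone else sees only US rows.
CREATE OR REPLACE FUNCTION main.security.region_filter(region STRING)
  RETURN is_account_group_member('admins') OR region = 'US';

ALTER TABLE main.sales.orders
  SET ROW FILTER main.security.region_filter ON (region);

-- Column mask: only members of `support` see the real value.
CREATE OR REPLACE FUNCTION main.security.mask_email(email STRING)
  RETURN CASE
    WHEN is_account_group_member('support') THEN email
    ELSE '***redacted***'
  END;

ALTER TABLE main.sales.orders
  ALTER COLUMN email SET MASK main.security.mask_email;
```

Because the functions live in their own schema, the same mask can be attached to other tables with an equivalent column.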

Use the data isolation tools in Unity Catalog to guard against data governance breaches. Unity Catalog offers a range of data isolation options within a single, integrated governance framework. Whether you want a centralized or a distributed governance model, Databricks supports both while keeping data access compliant and restricted.

3. Overlooking the Need to Leverage SCIM for Identity Provider Integration

Maintaining a consistent SCIM (System for Cross-domain Identity Management) integration within the Account Console is crucial. This ensures a standardized representation of principals across workspaces, minimizing access issues.

Recommendation

- Implement SCIM provisioning by configuring both your Identity Provider (IdP) and Databricks. SCIM automates user provisioning, so Databricks user identities can be managed through your IdP. You can set this up with a SCIM provisioning connector in your IdP or with the Identity and Access Management SCIM APIs. Keep the SCIM integration active in the Account Console to standardize principals across workspaces, which streamlines Unity Catalog operations and makes onboarding and off-boarding more efficient.

- Opt for account-level SCIM provisioning unless there are specific requirements for workspace-level provisioning:

Account-level SCIM provisioning

The recommended method. Set up a single SCIM connector between your identity provider and your Databricks account. User and group assignments to workspaces are then managed within Databricks. Enable identity federation in workspaces so that users can be assigned to workspaces efficiently.

Workspace-level SCIM provisioning

Used when workspaces aren't federation-ready or have varied setups. For federation-ready workspaces, this method is redundant.

If you use workspace-level SCIM for federation-ready workspaces, consider switching to account-level provisioning and turning off the workspace-specific connector.

In mixed setups or when moving from workspace to account-level provisioning, stick to the provided guidelines to guarantee a smooth transition and uphold Unity Catalog's operational efficiency.

4. Not Distinguishing Between Managed and External Tables

Deciding between managed and external tables in Unity Catalog is pivotal for streamlined data handling. Databricks offers two table categories: managed and external (unmanaged) tables. Grasping their distinct characteristics is key for adept data management.

Managed Tables

Managed tables offer an integrated experience: Unity Catalog manages both the metadata and the underlying data. They tend to benefit first when new features roll out, including built-in performance improvements. For instance, creating a managed table looks like this:

```sql
CREATE TABLE my_table (
  id INT,
  name STRING
)
USING DELTA;
```

Their storage locations are handled for you, which hides backend complexity and simplifies setup. These tables benefit from features like Predictive Optimization, but managed tables in Unity Catalog currently support only the Delta format.

External Tables

On the flip side, external tables offer greater flexibility, especially when connecting with data beyond Databricks:

```sql
CREATE TABLE my_table
USING DELTA
LOCATION '/folder/delta/my_table';
```

They're the right choice when data must be accessed directly from outside Databricks or when specific storage requirements apply. External tables support various data formats, such as Parquet and Avro, and they can help control storage costs and keep storage separated for regulatory compliance. Unlike with managed tables, dropping an external table removes only the metadata; the underlying data remains and must be deleted separately in cloud storage.

Recommendation

- Use managed tables for standard data tasks in Unity Catalog where possible; this lets you take advantage of features that are available only for managed tables.

- Use external tables when data must be accessed directly from outside Databricks or when specific storage requirements apply. Understanding the strengths and role of each table type helps you get the most from both and avoid pitfalls.
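Before dropping or migrating a table, it can help to confirm which kind it is. A minimal sketch, reusing the `my_table` name from the examples above:

```sql
-- The "Type" row of the output reads MANAGED or EXTERNAL.
DESCRIBE TABLE EXTENDED my_table;

-- For a managed table, Unity Catalog also removes the underlying data files
-- (not necessarily immediately). For an external table, only the metadata is
-- removed and the files stay in cloud storage until deleted there.
DROP TABLE IF EXISTS my_table;
```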

5. Mismanaging External Locations and Volumes

Unity Catalog's robust data architecture rests on understanding and efficiently managing permissions, as well as the layout of external locations and volumes. Both elements play pivotal roles in registering external tables, supporting managed locations, and ensuring smooth operations. The rule of thumb to keep in mind is that external locations are managed by admins and used to map cloud storage.

Permissions on External Locations

External locations combine a cloud storage path with the credentials required to access it. Within Unity Catalog, they are securable objects with assignable permissions such as READ FILES, WRITE FILES, and CREATE EXTERNAL TABLE. These permissions apply to everything under the location except external tables. Once an external table is created within an external location, its access is governed independently and requires its own table permissions.

Creating External Locations

External locations primarily back managed storage locations and are the prerequisite for creating external tables and volumes. Ideally, define them at the root of a storage container to avoid overlaps and keep a coherent data structure.
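A minimal sketch of registering an external location at the root of a container and granting read access follows. The location name `example_landing`, the bucket URL, the storage credential `example_credential`, and the group `data-engineers` are hypothetical, and the storage credential is assumed to exist already.

```sql
-- Hypothetical names; assumes the storage credential was created beforehand.
CREATE EXTERNAL LOCATION IF NOT EXISTS example_landing
  URL 's3://example-bucket/'
  WITH (STORAGE CREDENTIAL example_credential)
  COMMENT 'Root of the example bucket';

-- Read access to files under the location; external tables created here
-- still need their own table-level grants.
GRANT READ FILES ON EXTERNAL LOCATION example_landing TO `data-engineers`;
```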

Delineating External Locations and Volumes

External locations act as containers that can hold multiple volumes, whereas external volumes belong to schemas and can be restricted to selected workspaces by binding their catalog to those workspaces. External locations are used to register tables and volumes, and to browse cloud files before creating an external table or volume with the appropriate permissions. External volumes, in contrast, are designed to provide landing areas for raw data, staging areas for ingestion, and storage for other file-based workloads.

Recommendation

- Avoid creating volumes or tables directly at the root of an external location, to prevent conflicts. Instead, create volumes within a sub-directory of the external location.

- Try to avoid granting WRITE FILES permissions on external locations. Typically, READ FILES is sufficient.

- Use external volumes for data in transit, for example files ingested with Auto Loader or COPY INTO. To create tables in place over existing data, use external locations.

- Prefer managed volumes when creating tables from files without using COPY INTO or CTAS. This approach keeps Unity Catalog organized and flexible, and minimizes potential problems.
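The volume recommendations above can be sketched as follows: an external volume is created in a sub-directory of an external location (not at its root), and files landing there are loaded into a table with COPY INTO. The names `main.raw.landing_files` and `main.bronze.events`, the bucket path, and the JSON file format are assumptions for illustration; the schemas and the covering external location are assumed to exist.

```sql
-- Hypothetical names and paths.
-- The volume lives in a sub-directory of the external location, not at its root.
CREATE EXTERNAL VOLUME IF NOT EXISTS main.raw.landing_files
  LOCATION 's3://example-bucket/volumes/landing_files';

-- Browse the files through the volume path.
LIST '/Volumes/main/raw/landing_files/';

-- Empty placeholder table; the schema is inferred on the first load.
CREATE TABLE IF NOT EXISTS main.bronze.events;

-- Incrementally load files from the volume into the table.
COPY INTO main.bronze.events
  FROM '/Volumes/main/raw/landing_files/events/'
  FILEFORMAT = JSON
  FORMAT_OPTIONS ('mergeSchema' = 'true')
  COPY_OPTIONS ('mergeSchema' = 'true');
```

COPY INTO skips files it has already loaded, so the statement can be rerun as new files arrive.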

Conclusion

Moving to Unity Catalog requires a solid grasp of the system and proven management practices. By addressing the five pitfalls covered in this article, organizations can simplify migration and build a sensible, secure, and navigable data architecture. Combining these insights with Databricks best practices sets up a successful Unity Catalog transition and a strong data governance foundation.

