Technical Blog

Explore in-depth articles, tutorials, and insights on data analytics and machine learning in the Databricks Technical Blog. Stay updated on industry trends, best practices, and advanced techniques.

- Distributed Fine Tuning of LLMs on Databricks Lake...

08-31-2023

08:18 AM

Authors: Anastasia Prokaieva and Puneet Jain

The aim of this blog is to show the end-to-end process of converting from vanilla Hugging Face 🤗 to Ray AIR 🤗 on Databricks, without changing the training logic unless necessary. We have seen many customers struggle to fine-tune their LLMs on smaller GPU instances such as A10 or V100, so we decided to release this example using the most commonly available GPU instances across all regions on Databricks, without using the A100 instance type.

In this blog we are going to cover:

- Set up Ray Spark on Databricks

- Load data from Delta into the Hugging Face data loader

- Set up your preprocessing with Ray AIR

- Run distributed training with Ray AIR

- How to use Ray Dashboard

Earlier this year, Databricks announced support for Ray, a prominent compute framework for running scalable AI and Python workloads, on Apache Spark clusters, in order to dramatically simplify model development across both platforms.

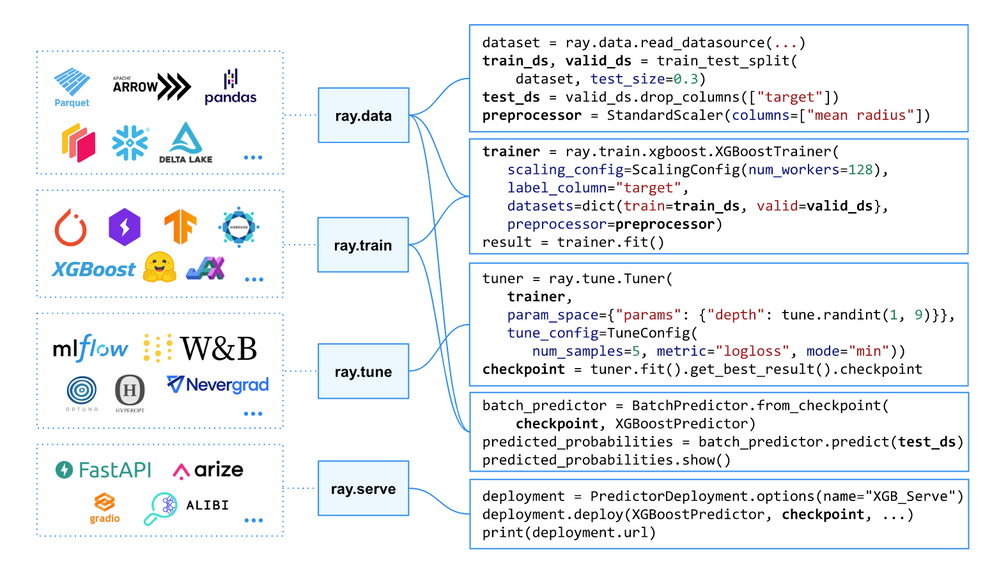

Ray AI Runtime (AIR) is a scalable and unified toolkit for ML applications. AIR enables simple scaling of individual workloads, end-to-end workflows, and popular ecosystem frameworks, and it aims to simplify the ecosystem of machine learning frameworks, platforms, and tools. We selected Ray AIR for this task not only because of its seamless integration with Apache Spark on Databricks, but also because it is fully open source and makes it easy to swap between popular frameworks, which matters a lot to deep learning users switching between PyTorch and Hugging Face.

AIR provides a unified API for the ML ecosystem. This diagram shows how AIR enables an ecosystem of libraries to be run at scale in just a few lines of code.

Ray AIR acts as a wrapper around various frameworks, which allows users to move easily from one approach to another. As you will see in part 3 of this series, we fine-tune Pythia12B and Falcon7B without changing the main code we used to fine-tune BERT or RoBERTa models; we only add new configuration files and parameters.

This simplicity was one of the reasons we decided to use Ray AIR. Keep in mind that the same training could be done without Ray or Ray AIR, for example with native PyTorch DDP or FSDP capabilities, or with Accelerate from Hugging Face.

Use cases

For this blog we have selected two datasets and two models to demonstrate the simplicity and flexibility that Ray AIR brings when we are trying to find the best model for a use case.

In Part 1 of the series, we focus on the two most common use cases: Sequence Classification (often simply called Classification) and Token Classification (also known as Named Entity Recognition, NER). The difference lies in what gets labeled. In Sequence Classification you assign one label to the whole text passed to the model (e.g. for the sentiment of a tweet, <The XX has broken again rules> would become <negative>). In Token Classification you assign a label to each token inside the text (e.g. <Anna is going to the Los Angeles California> might be mapped into <I-PER, O, I-LOC, I-LOC, I-LOC>, etc.), and the tagged tokens can later be classified further, as described by the NER naming convention. If you have your own tags for the tokens, this is not a problem: you would need to adapt your tokenizer, and HF provides an example and a class for this.

For this series, we’ve selected 2 popular datasets from Hugging Face with descriptions given below:

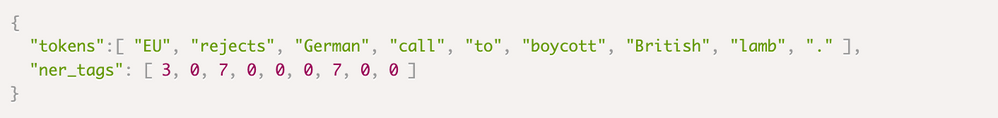

CoNLL-2003 is a widely used dataset and evaluation benchmark in the field of natural language processing (NLP) and named entity recognition (NER). The data is a collection of newswire articles from the Reuters Corpus, annotated by people at the University of Antwerp. It focuses on four types of named entities: persons, locations, organizations, and names of miscellaneous entities that do not belong to the previous three groups. Below is an example of the format:

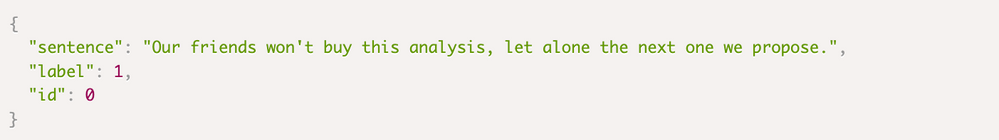
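To make the record layout concrete, here is a small sketch of one record shaped like those in the Hugging Face `conll2003` dataset: a list of word-level tokens plus one integer tag per token. The `decode_tags` helper is our own illustration and is not part of any library.

```python
# Tag names in the order used by the `ner_tags` feature of the
# Hugging Face `conll2003` dataset (index = integer tag).
NER_LABELS = ["O", "B-PER", "I-PER", "B-ORG", "I-ORG",
              "B-LOC", "I-LOC", "B-MISC", "I-MISC"]

# One record: word-level tokens and one integer tag per token.
record = {
    "tokens": ["EU", "rejects", "German", "call", "to",
               "boycott", "British", "lamb", "."],
    "ner_tags": [3, 0, 7, 0, 0, 0, 7, 0, 0],
}


def decode_tags(record, label_names=NER_LABELS):
    """Pair each token with the string name of its integer tag."""
    return [(token, label_names[tag])
            for token, tag in zip(record["tokens"], record["ner_tags"])]


# Print one token per line next to its tag name.
for token, tag in decode_tags(record):
    print(f"{token:10s}{tag}")
```

The four entity types from the description show up as the PER, ORG, LOC and MISC suffixes; `O` marks tokens outside any entity.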

The CoLA (Corpus of Linguistic Acceptability) dataset consists of English sentences labeled for grammatical acceptability. Each sentence is annotated with a binary label indicating whether it is linguistically acceptable or not. It forms one of the tasks used in the GLUE Benchmark. Here is an example of the CoLA format:

Let’s begin

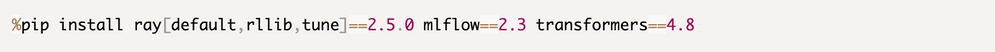

Before we even start loading the dataset, we need to install a few libraries and set up our Ray cluster. Here we use the Databricks 13.1 ML runtime, which already ships MLflow 2.3 with its new Transformers flavor.

If you are using a DBR ML runtime lower than 13.1, you will have to install MLflow 2.3 as well.
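In a Databricks notebook the usual way to do this is a `%pip install "mlflow>=2.3"` cell. As a plain-Python alternative, the sketch below checks the installed version first and only calls pip when needed. The helper names are our own, and the simple major.minor comparison is an assumption that is good enough for this check but not a full version parser.

```python
import re
import subprocess
import sys
from importlib import metadata

MIN_MLFLOW = (2, 3)  # minimum version named in the text above


def parse_major_minor(version):
    """Turn a version string such as '2.3.1' into the tuple (2, 3)."""
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    return int(match.group(1)), int(match.group(2))


def needs_mlflow_upgrade(installed_version):
    """True when MLflow is missing (None) or older than MIN_MLFLOW."""
    if installed_version is None:
        return True
    return parse_major_minor(installed_version) < MIN_MLFLOW


def installed_mlflow_version():
    """Return the installed MLflow version string, or None if absent."""
    try:
        return metadata.version("mlflow")
    except metadata.PackageNotFoundError:
        return None


def ensure_mlflow():
    """Install MLflow >= 2.3 with pip if the environment lacks it."""
    if needs_mlflow_upgrade(installed_mlflow_version()):
        subprocess.check_call(
            [sys.executable, "-m", "pip", "install", "mlflow>=2.3"]
        )
```

Calling `ensure_mlflow()` once at the top of the notebook is enough; on runtimes that already ship a recent MLflow it does nothing.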

Other libraries are already preinstalled on the Databricks Machine Learning cluster, so you would not have to install them.

Setting Ray

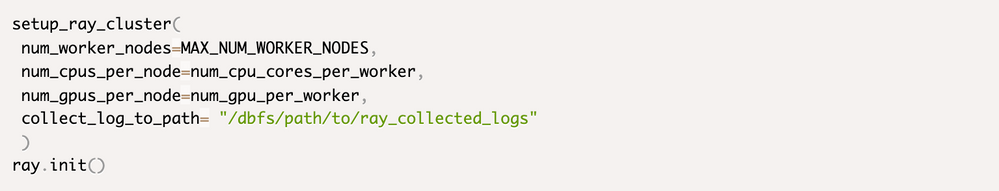

Now that Ray is fully integrated on top of Spark on the platform, setting up a Ray cluster on Databricks takes only a few small steps:

- Set a few variables such as num_cpu_cores_per_worker and num_gpu_per_worker

- Run an init function from Ray to set up a Ray Cluster
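The two steps above can be sketched as follows. This assumes the `ray.util.spark.setup_ray_cluster` keyword names from the Ray releases that were current when this blog was written (`num_worker_nodes`, `num_cpus_per_node`, `num_gpus_per_node`); later Ray releases renamed some of these, so check the signature of your installed version. The helper functions and the example values are our own.

```python
def build_ray_cluster_kwargs(num_worker_nodes,
                             num_cpu_cores_per_worker,
                             num_gpu_per_worker):
    """Map the notebook variables onto setup_ray_cluster keyword arguments.

    The keyword names are an assumption based on early Ray-on-Spark
    releases and may differ in newer versions.
    """
    return {
        "num_worker_nodes": num_worker_nodes,
        "num_cpus_per_node": num_cpu_cores_per_worker,
        "num_gpus_per_node": num_gpu_per_worker,
    }


def start_ray_on_spark(cluster_kwargs):
    """Start a Ray cluster on the Spark workers and connect to it."""
    # Imported lazily so this file can be loaded on machines without Ray.
    import ray
    from ray.util.spark import setup_ray_cluster

    setup_ray_cluster(**cluster_kwargs)
    # setup_ray_cluster exports the cluster address, so a bare init()
    # connects this notebook to the new cluster.
    ray.init()


# Example (hypothetical sizes): 2 workers with 8 CPU cores and 1 GPU each.
# start_ray_on_spark(build_ray_cluster_kwargs(2, 8, 1))
```

`ray.util.spark` also provides `shutdown_ray_cluster()` to release the resources when you are done.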

Once you run it, the following prompt appears:

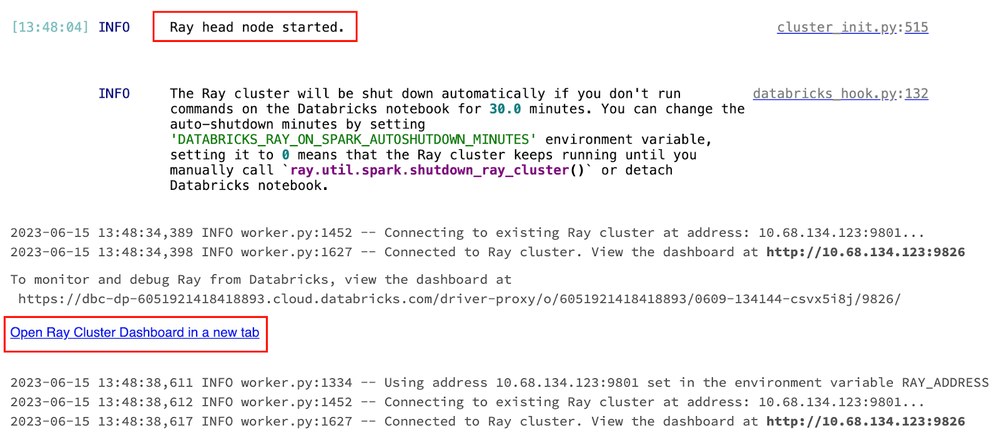

After the function finishes running, it shows a link to open the Ray Dashboard (see the highlighted hyperlink in the image below):

Notebook cell output after Ray has been initialized, including the link to the interactive dashboard.

And you are set to go!

Loading and preprocessing data

Typical LLM Pipeline example

To apply any LLM to your own data, you need to follow these steps:

- Load your original data

- Clean if necessary

- Apply a tokenizer to your data

- Apply a pre-trained model to your data

- Apply post-processing if required

All these steps can be incorporated into a single pipeline; Hugging Face offers Pipelines for inference. But why are we talking about inference before even training? Because you still need to apply the same pre-processing steps before fine-tuning a model on your data. For the classification use case the dataset is ready to be consumed, but for Token Classification we had to apply a transformation to correctly associate the tokenized input with the labels of its original tokens. We attach a script under utils that helps you do that properly.

There are multiple ways to read data. We are going to talk about the two most common ones: from Delta and directly from HF Datasets.
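To illustrate the Token Classification transformation, here is a minimal sketch of the commonly used label-alignment technique. It is not the script from utils, which is not reproduced here. It assumes you tokenized pre-split words with a fast Hugging Face tokenizer (`is_split_into_words=True`) and obtained `word_ids` from the encoding's `word_ids()` method; the function name and the `label_all_subwords` flag are our own.

```python
IGNORE_INDEX = -100  # label value skipped by PyTorch's cross-entropy loss


def align_labels_with_word_ids(word_ids, word_labels,
                               label_all_subwords=False):
    """Expand word-level labels to token-level labels.

    word_ids    : for each sub-token, the index of the word it came from,
                  or None for special tokens such as [CLS] and [SEP].
    word_labels : one integer label per original word.
    """
    aligned = []
    previous_word = None
    for word_id in word_ids:
        if word_id is None:
            # Special tokens carry no label.
            aligned.append(IGNORE_INDEX)
        elif word_id != previous_word:
            # First sub-token of a word inherits the word's label.
            aligned.append(word_labels[word_id])
        else:
            # Later sub-tokens are either ignored or share the label.
            aligned.append(word_labels[word_id]
                           if label_all_subwords else IGNORE_INDEX)
        previous_word = word_id
    return aligned


# Three words, where the second word was split into two sub-tokens.
example = align_labels_with_word_ids([None, 0, 1, 1, 2, None], [3, 0, 7])
print(example)
```

Ignoring the continuation sub-tokens keeps the loss and the metrics at word level, which matches how CoNLL-style annotations are defined.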

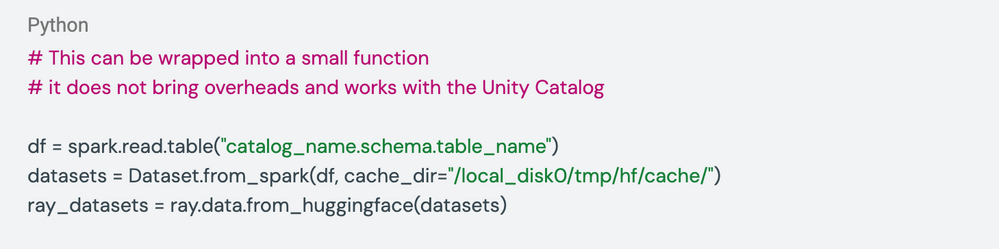

From Delta

First-class Spark support for Hugging Face was recently announced. This allows users to use Spark to efficiently load and transform data for training or fine-tuning a model, then easily map their Spark dataframe into a Hugging Face dataset for super simple integration into a training pipeline. This combines cost savings and speed from Spark and optimizations like memory mapping and smart caching from Hugging Face datasets.
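A minimal sketch of this path, assuming a `datasets` release that ships `Dataset.from_spark` and a Delta table readable through `spark.read.table`. The function name, the table name and the column names in the usage comment are hypothetical.

```python
def delta_table_to_hf_dataset(spark, table_name, columns=None):
    """Read a Delta table with Spark and convert it to a Hugging Face Dataset.

    spark      : the active SparkSession (predefined in Databricks notebooks).
    table_name : name of the Delta table to read.
    columns    : optional list of columns to keep before converting.
    """
    # Imported lazily so the function can be defined without `datasets`.
    from datasets import Dataset

    df = spark.read.table(table_name)
    if columns:
        # Keep only what the model needs to reduce the data materialized.
        df = df.select(*columns)
    return Dataset.from_spark(df)


# Example (hypothetical table and columns):
# train_ds = delta_table_to_hf_dataset(
#     spark, "main.default.cola_train", ["sentence", "label"]
# )
```

Selecting columns in Spark before the conversion means only the needed data is written into the Hugging Face cache.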

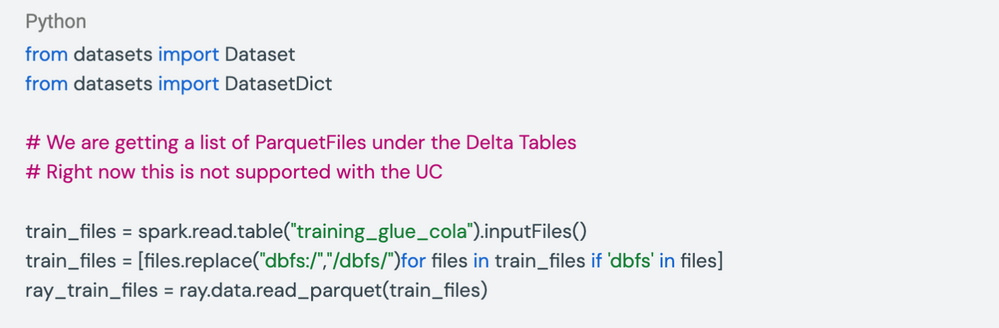

From memory

Let’s say you are getting your data from the Hugging Face Hub or somewhere else. It is downloaded directly to your disk and stays available while your cluster is on (you can, of course, also store it on DBFS and load it back into memory later). The same works for reading your files from Parquet or with the deltaRay incubator project. One option is to read from a list of Parquet files.
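Reading a list of Parquet files can be sketched with `ray.data.read_parquet`, which accepts a single path or a list of paths. Whether the original notebook used Ray Data or another reader is an assumption on our side, and the helper names and the directory in the usage comment are hypothetical.

```python
import os


def list_parquet_files(directory):
    """Return the sorted paths of all *.parquet files directly in `directory`."""
    return sorted(
        os.path.join(directory, name)
        for name in os.listdir(directory)
        if name.endswith(".parquet")
    )


def read_parquet_as_ray_dataset(paths):
    """Load a list of Parquet files into a Ray Dataset."""
    # Imported lazily so the listing helper works without Ray installed.
    import ray.data

    return ray.data.read_parquet(paths)


# Example (hypothetical DBFS location):
# paths = list_parquet_files("/dbfs/tmp/conll2003/train")
# train_ds = read_parquet_as_ray_dataset(paths)
```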

Training and Scoring

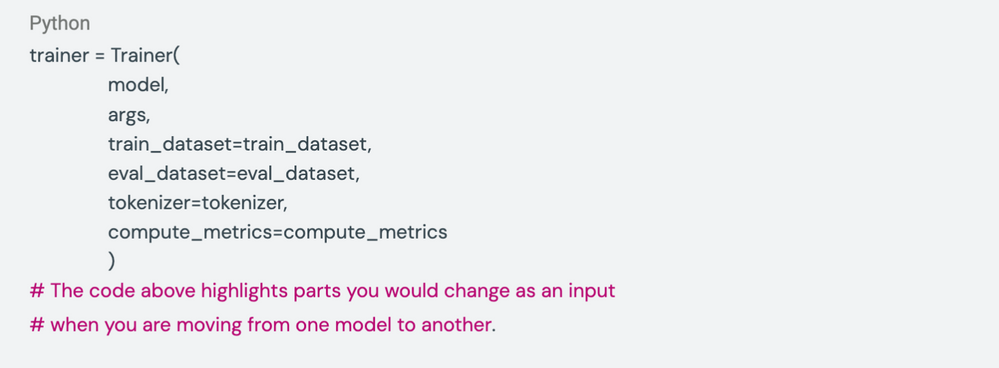

To fine-tune a model from HF you need to construct a Trainer. It usually contains the model, the arguments (parameters) you want to train your model with, a tokenizer that converts text into the token IDs expected by the model family, the metrics you want to evaluate your model on, and the input data.

Keep in mind that it is recommended not to mix tokenizers from different model families, because their vocabularies differ. For your compute metrics, it is often best to take the metric associated with a dataset on the HF Hub that matches your use case.
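As a rough sketch of how these pieces fit together, the code below builds a Trainer for sequence classification. The plain-accuracy metric is a stand-in we chose to keep the example dependency-free (GLUE scores CoLA with Matthews correlation, which `evaluate.load("glue", "cola")` provides), and the function names and default values are hypothetical. The `tokenizer=` keyword matches `transformers` releases from the time of this blog; newer releases may expect a different keyword.

```python
def compute_accuracy(eval_pred):
    """Compute accuracy from a (logits, labels) pair.

    Works with nested lists as well as NumPy arrays, which is what the
    Trainer passes in at evaluation time.
    """
    logits, labels = eval_pred
    # Index of the largest logit in each row is the predicted class.
    predictions = [max(range(len(row)), key=row.__getitem__) for row in logits]
    correct = sum(int(pred == int(label))
                  for pred, label in zip(predictions, labels))
    return {"accuracy": correct / len(predictions)}


def build_trainer(model_name, train_dataset, eval_dataset,
                  output_dir, num_labels=2):
    """Assemble model, arguments, tokenizer, metric and data into a Trainer."""
    # Imported lazily so the metric above can be used without transformers.
    from transformers import (AutoModelForSequenceClassification,
                              AutoTokenizer, Trainer, TrainingArguments)

    # Tokenizer and model come from the same checkpoint, so they match.
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForSequenceClassification.from_pretrained(
        model_name, num_labels=num_labels
    )
    args = TrainingArguments(output_dir=output_dir, num_train_epochs=1)
    return Trainer(
        model=model,
        args=args,
        train_dataset=train_dataset,
        eval_dataset=eval_dataset,
        tokenizer=tokenizer,
        compute_metrics=compute_accuracy,
    )
```

Loading the tokenizer and the model from the same `model_name` is the simplest way to follow the advice about not mixing model families.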

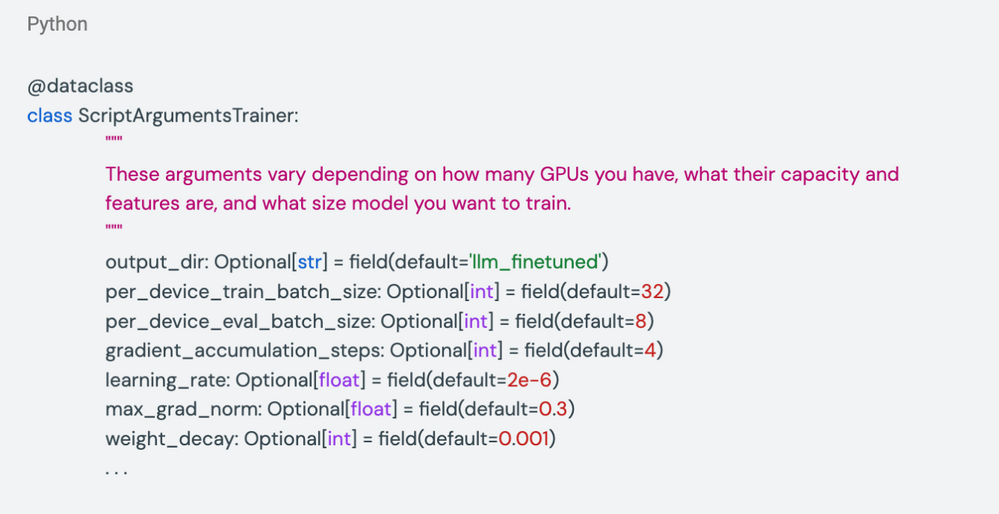

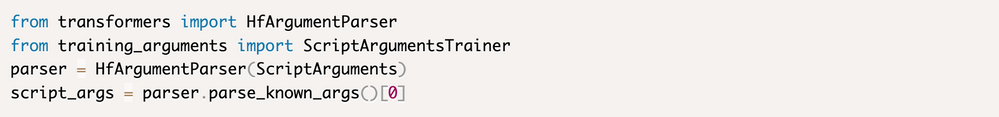

Now let’s talk a bit more about the arguments to train your model with. There are various ways to pass arguments to the HF Trainer. Here we use a Python data class to hold our default parameters and place it in an external Python file.

It is very simple to use those arguments later on. First of all, HF has an HfArgumentParser that can parse them into the data class, which you can then pass on to the HF Trainer.

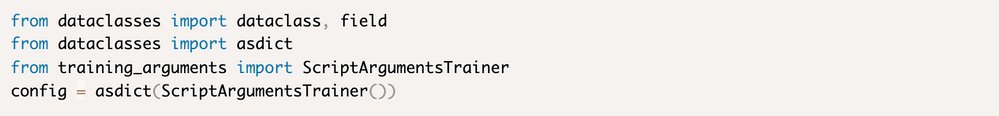

Another way of doing this is to instantiate the ScriptArguments class directly, convert it into a dictionary (dict), and pass your preselected arguments to the trainer.
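Both options can be sketched as follows. `ScriptArguments` is the class name used in this blog, but the fields and default values shown here are hypothetical placeholders rather than the ones from the repository.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class ScriptArguments:
    """Default training parameters (illustrative fields and values)."""
    model_name: str = field(
        default="bert-base-uncased",
        metadata={"help": "Checkpoint to fine-tune."},
    )
    learning_rate: float = field(
        default=2e-5, metadata={"help": "Initial learning rate."}
    )
    per_device_train_batch_size: int = field(
        default=16, metadata={"help": "Batch size per GPU."}
    )
    num_train_epochs: int = field(
        default=3, metadata={"help": "Number of training epochs."}
    )


def parse_script_arguments(cli_args):
    """Option 1: let HfArgumentParser fill the data class from CLI-style args."""
    # Imported lazily so the data class is usable without transformers.
    from transformers import HfArgumentParser

    (parsed,) = HfArgumentParser(ScriptArguments).parse_args_into_dataclasses(
        args=cli_args
    )
    return parsed


def arguments_as_dict(overrides=None):
    """Option 2: take the defaults as a plain dict and override a few values."""
    params = asdict(ScriptArguments())
    params.update(overrides or {})
    return params


params = arguments_as_dict({"learning_rate": 1e-4})
print(params)
```

The dictionary form is convenient with Ray AIR, because a trainer configuration is passed around as a plain dict.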

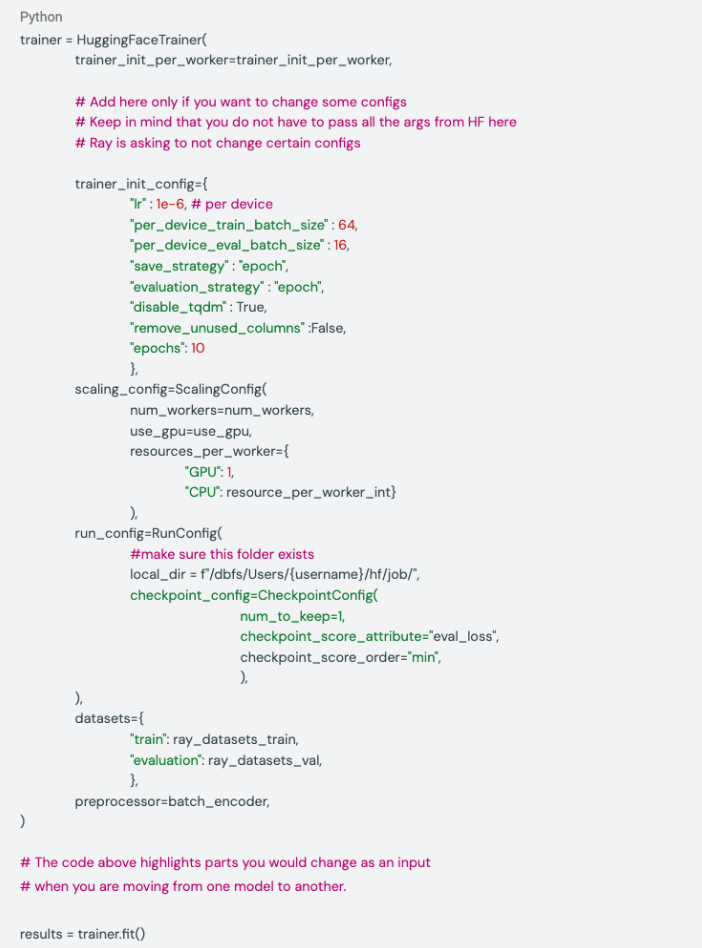

Config for HF Trainer with RayAIR

Let’s talk about the configuration for the Hugging Face Trainer class, which Ray AIR wraps in its HuggingFaceTrainer. We are not going to cover every parameter used during training, but will pay attention to some new configurations that you may not have seen yet.
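A minimal sketch of such a configuration, assuming the `HuggingFaceTrainer`, `ScalingConfig`, `RunConfig` and `CheckpointConfig` classes from the Ray releases that were current when this blog was written (later Ray releases renamed and eventually replaced `HuggingFaceTrainer`). The helper names and all concrete values are our own.

```python
def scaling_kwargs(num_workers, cpus_per_worker, gpus_per_worker):
    """Build keyword arguments for Ray AIR's ScalingConfig."""
    resources = {"CPU": cpus_per_worker}
    if gpus_per_worker > 0:
        # Only request GPUs when the workers should actually use them.
        resources["GPU"] = gpus_per_worker
    return {
        "num_workers": num_workers,
        "use_gpu": gpus_per_worker > 0,
        "resources_per_worker": resources,
    }


def build_ray_trainer(trainer_init_per_worker, ray_datasets,
                      trainer_init_config, scaling):
    """Wrap a Hugging Face Trainer factory in a distributed Ray AIR trainer.

    trainer_init_per_worker : function(train_dataset, eval_dataset, **config)
                              that returns a transformers.Trainer.
    ray_datasets            : dict with a "train" Ray Dataset and, optionally,
                              an "evaluation" one.
    """
    # Imported lazily so scaling_kwargs can be used without Ray installed.
    from ray.air.config import CheckpointConfig, RunConfig, ScalingConfig
    from ray.train.huggingface import HuggingFaceTrainer

    return HuggingFaceTrainer(
        trainer_init_per_worker=trainer_init_per_worker,
        trainer_init_config=trainer_init_config,
        scaling_config=ScalingConfig(**scaling),
        datasets=ray_datasets,
        # Keep only the latest checkpoint to save disk space.
        run_config=RunConfig(
            checkpoint_config=CheckpointConfig(num_to_keep=1)
        ),
    )


# Example (hypothetical sizes): 2 workers, each with 8 CPU cores and 1 GPU.
# trainer = build_ray_trainer(init_fn, {"train": train_ds}, {}, scaling_kwargs(2, 8, 1))
# result = trainer.fit()
```

The scaling configuration is the part that is new compared with a plain Hugging Face setup: it tells Ray how many training workers to start and what resources each of them gets.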

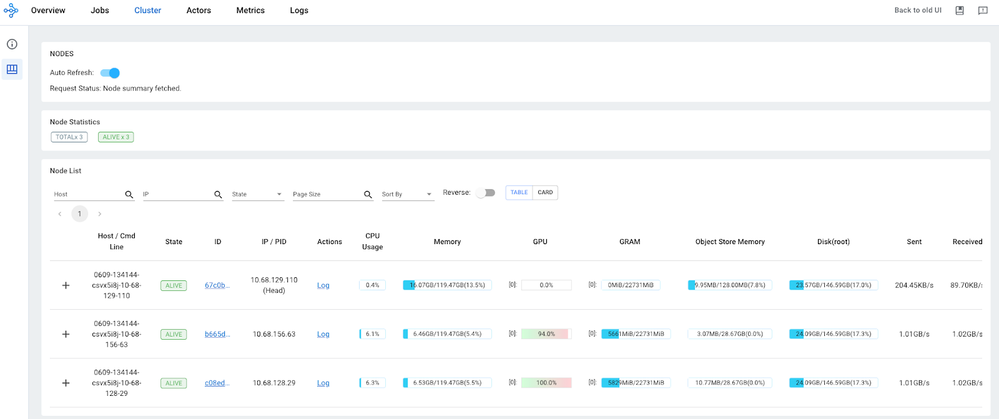

Once everything is set and the trainer is invoked, you can check your GPU utilization in the Ray Dashboard (the link to the dashboard is given when you first run setup_ray_cluster). The Ray Dashboard is interactive, so you can monitor your resource usage in near real time. This helps you understand whether your cluster configuration is sufficient and catch OOM errors on your GPU and CPU.

Ray Dashboard Cluster tab while model training is running.

Above is an example of the Ray Dashboard showing GPU usage during training. Starting from DBR 13.2 ML, a similar capability is available on the Metrics page of your cluster configuration.

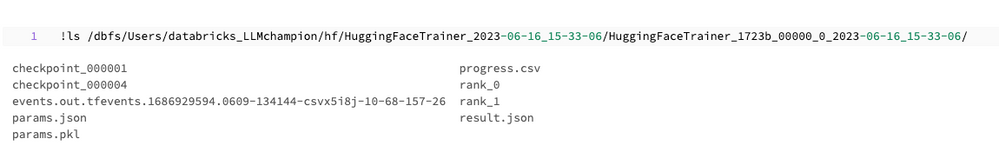

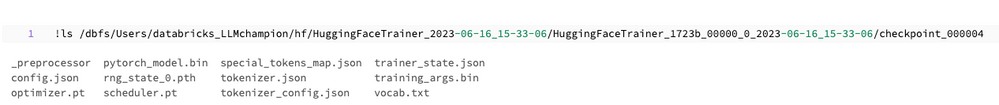

Checkpoints

After the trainer has finished, it is very simple to get your results back: they are all under the checkpoint folder you provided. Sometimes your notebook may crash and your result variable is gone, but if your cluster is still on, your checkpoints are still there! A checkpoint contains everything you need to put your model in production.
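If the result variable is lost, you can still inspect a checkpoint folder directly. The sketch below walks a directory and reports each file with its size; the helper name and the path in the usage comment are hypothetical.

```python
import os


def list_checkpoint_files(checkpoint_dir):
    """Return sorted (relative_path, size_in_bytes) pairs for a checkpoint folder."""
    files = []
    for root, _dirs, names in os.walk(checkpoint_dir):
        for name in names:
            path = os.path.join(root, name)
            files.append(
                (os.path.relpath(path, checkpoint_dir), os.path.getsize(path))
            )
    return sorted(files)


# Example (hypothetical location):
# for rel_path, size in list_checkpoint_files("/dbfs/tmp/ray_results/my_run"):
#     print(f"{size:>12d}  {rel_path}")
```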

Conclusions

In this first part, we have demonstrated how to load your data from Delta Lake or from memory and use your datasets to fine-tune a Hugging Face model using the Ray AIR framework and Apache Spark.

If you liked this blog please check this Git Repository with the code that supports this series of blogs.

The next part will cover how to:

- Track and log your LLM with MLflow

- Predict on test data with Ray AIR

- Serve your model with Real-Time Endpoint on Databricks using CPU and GPU!

- Talk about issues we encountered and solutions we found

See you soon!
